Not sure on hours. How do I find out? It's the OLED55B7V model.

Support > TV information

Can't see it there.

Hmm, it seems the 1.06 update for Cyberpunk 2077 reset all in-game HDR settings to default. Running on a C7 OLED (calibrated "A la P4OLO" for the 2017 C7 OLED) with an Xbox Series X.

The changelog does not mention any HDR changes. Has anybody seen the same, or seen any difference?

P4AOLO: You might want to look into it :)

Weird, I never got anything reset since 1.02, but I'll check it later if I can.

Meanwhile: Merry Christmas to all! 🎅

B7 owner here. I just hooked up my PS5 and am using the same HDR settings that I used for my PS4 which always looked great. I have completed the HDR test patterns/setup process. I'm noticing the PS5 dashboard seems very dim though, anyone else experiencing this? Game logos/pictures of characters seem dim and don't pop. It's like a form of menu shadowing/dimming is overlaid on everything if that makes sense? I booted up Star Wars Squadrons though and it looked good (about how I remember it looking in HDR on PS4) so not sure if it's just a PS5 dashboard issue?

I'm in HDR Game Mode with Dynamic Contrast off (it's my understanding that setting this to "low" activates "Active HDR" on B7s, but not in Game Mode, so it's recommended to be off). When I switch to Technicolor Expert HDR with Dynamic Contrast set to "low", it seems to fix the issue and the dashboard looks lively. Setting Dynamic Contrast to off in Technicolor Expert gives a similar dim look, though.

Reporting back after 2 hours of Cyberpunk v1.06 testing on Series X:

1) HDR settings were actually reset for the first time. I did notice that the defaults have changed a bit: Peak HDR Luminance now picks up the system HDR Calibration app result, so in my case it defaulted to 3,600 nits (B7 using the Option 1b preset in the OP), which is correct. The same thing now happens with the patched Gears 5 (3,600 nits as the new Peak HDR default), but I still needed to change Paper White back to 200 nits and Midtone to 0.95;

2) Both Quality and Performance framerate targets now seem much more stable;

3) Performance Mode IQ has vastly improved compared to launch, and is now my preferred mode (just remember to disable Film Grain, Chromatic Aberration and also Motion Blur, which is useless at an almost locked 60fps).

Great stream of updates and actual improvements by CDPR so far and, even if I feel sorry for owners of older hardware like the One S and PS4 Fat, I'm really REALLY enjoying this game on my Series X now.

It's perfectly normal to have dimmer menus when using HDR Game without Dynamic Tone Mapping (or Active HDR).

If you have a B7 (like me), go to the OP and apply the Option 1 preset for SDR and the Option 1b preset for HDR, both for the TV and PS5.

Then remember to set in-game Peak HDR Luminance to 4,000 nits (or 3,600 nits if you want to be even more accurate) and Paper White to 200, when games let you do so.

Also do the PS5 system HDR Calibration (after you set up both TV and console) for all the newer games, which won't even ask you for in-game HDR settings but will just pick them up from the system once you finish that.

Thanks for the reply. So the dashboard is just going to be dim overall but the games won't be? I'm confused why the dashboard is so dim.

Yes.

The reason is that HDR UI elements usually have an intentionally dimmer luminance to avoid image retention or permanent burn-in on OLEDs, but Dynamic Tone Mapping will just read those as "dimmer than they should be" and raise them again.

Also keep in mind that the PS5 will take anything SDR and just "contain" it in an always-on HDR signal, often degrading its quality.

Dynamic Contrast at Low in HDR Game, even if it's just regular DC, will improve HDR dynamics with no negative effects at all, so it's still recommended over having it totally Off.

See Option 1b doc for all the details... ;)

Does enabling VRR add any sort of input lag? I'm on a CX and Series X. I haven't touched FreeSync but currently have VRR on my Xbox. I can see the difference in some games in terms of smoothness, but was wondering if there were any drawbacks. I'm mostly aiming for the lowest input lag possible.

No, input lag should be the same (as long as you're using SDR/HDR Game modes).

Good to know. But HDR does add some, correct? It's hard to go back to SDR at this point.

Appreciate it.

Neither VRR nor HDR adds input lag. Theoretically, VRR would improve it.

There is some kind of bug where occasionally the dashboard is dim and washed out. This is fixed by restarting the console. It happened to me once a few days after launch. Maybe this is the issue? Cause I don't find the dashboard to be dim.

So I got the 48" CX as my PC monitor, but it might be a tad too big, even if I mounted it on the wall or got a bigger desk. I do appreciate the size when doing photo edits, but when playing Battlefield V it's kinda disorienting. I came to this from a 34" Asus ultrawide. I'm sure it's great with slower-paced games, or even faster games with less detail. Like Overwatch?

The only gripe I have is that my 1080 ti isn't supported by the TV's VRR. I guess this is something I should've known before buying the telly. Chose a stupid time to get the telly because I can't find a PS5 nor 3080.

Thank you so much. VRR is extremely noticeable in MW, so I'd prefer to keep it on.

Does anyone with a B/C7 also have a Sonos Arc soundbar? Is there anything I should be aware of compatibility-wise? I have both a Series X and a PS5 and will have them connected to the TV, with the TV using ARC to the Sonos Arc (the 7 series doesn't support eARC, I think?).

God, the 30fps stutter/judder on my C9 is making me go crazy. I had no idea this was an issue with OLEDs, I would've just stuck with LEDs.

This happens even in game mode?

Minor update:

-All previous in-game Peak HDR Brightness recommendations of 4,000 nits (for Dynamic Tone Mapping or Dynamic Contrast usage) are now tweaked to 3,600 nits for even more accuracy and to match system-wide/console HDR Calibration results;

-For example: games like Gears 5 and Cyberpunk 2077 were recently patched to auto-set their Peak HDR Brightness from the system HDR Calibration on console, therefore automatically changing it to 3,600 nits ;)

Yes. It might not be an issue for a lot of people but I have super sensitive eyes and it's killing me.

Yeah, this is the first thing I noticed going from my Samsung LED to the LG OLED. It's the downside of having a near-instant pixel response time, as in how long it takes a pixel to switch colours. On LEDs this takes longer and causes motion blur, which I think is one of the reasons developers tend to pump up motion blur in games targeting 30Hz.

In addition, the LG OLEDs with VRR, which may help compensate for this somewhat, only activate it above 40Hz, I think. So once you hit 40 in games, it'll start feeling much smoother. Obviously, if gaming on console, this is not really doable for every game if there are no performance modes or whatnot.

For consoles, for now, in 30Hz games, the only real "bandaid" is to use the TV's motion smoothing processing. For those games, take the TV out of PC Mode, don't use Game Mode, calibrate the Standard Mode settings to proper Cinema-mode-like levels, but keep the motion smoothing stuff on - I can't even recall which ones.

It's not ideal. But it is what it is.
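To put rough numbers on the point above, here is a minimal Python sketch of how long each frame is held on screen at common frame rates, and whether the rate would fall inside the VRR window. The 40Hz floor is only the recollection from this post, the 120Hz ceiling is an assumption, and the function names are made up for illustration.

```python
def frame_time_ms(fps: float) -> float:
    """How long each frame stays on screen at a steady frame rate."""
    return 1000.0 / fps


def vrr_active(fps: float, vrr_min_hz: float = 40.0, vrr_max_hz: float = 120.0) -> bool:
    """True if the frame rate sits inside the assumed VRR window.

    The 40 Hz floor comes from the post above ("I think"); the 120 Hz
    ceiling is an assumption for illustration.
    """
    return vrr_min_hz <= fps <= vrr_max_hz


if __name__ == "__main__":
    for fps in (30, 40, 60, 120):
        print(f"{fps:>3} fps: {frame_time_ms(fps):5.1f} ms per frame, "
              f"VRR active: {vrr_active(fps)}")
```

At 30fps every frame is held for about 33ms with almost no pixel blur to soften the jump to the next one, and under these assumptions VRR would not kick in to help.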

I actually tried doing this, but the artifacts that come with it got just as distracting as the stuttering, unfortunately. I'm primarily a console gamer, so it's mostly going to be 30FPS gaming for me (we're in a good spot right now where almost every single game launching has a 60FPS mode, but that's absolutely not going to continue). Knowing about this would have definitely influenced my decision, but I know I'm a special case.

I wouldn't be so negative.

Microsoft really pushed hard for 60fps as the new standard for next gen, with 120fps as an optional/performance mode, and Sony followed quickly, right from day one.

Going back to 30fps after millions of gamers have adapted to 60fps as the new normal for all console games could generate an uproar among online communities even bigger than the current one over Cyberpunk's performance on older consoles.

Devs and publishers won't allow that, especially now, seeing how much CDPR's stock dropped in a very short time.

I have a B9, rather than a C9, but are you able to enable BFI (Black Frame Insertion)? I seem to remember this may only work in 60Hz mode on the C9. It'll reduce brightness a bit, and it takes a little while for your eyes to adjust, but I think it can also help and doesn't seem to cause any visual artifacting.

Worth noting that I've played a few games that are set up in such a way that playing at 120Hz is worse than having it at 60Hz.

If you are finding a 30fps game to be worse than you remember, try 60Hz instead.

I certainly hope so, but I honestly doubt that something like Naughty Dog's next game (not Factions) is going to have a 60FPS mode, nor do I think the general public is going to care, unfortunately.

I tried turning it on and unfortunately it didn't do much, if anything at all.

I restarted and it still looks the same. To clarify, the dashboard doesn't look super dim or anything, it's just that a lot of the imagery doesn't really pop. I watched several YouTube dashboard overview videos though and it looks the same as mine in most of those.

I was playing Miles and Demon's Souls at 60 fps, then went to play Gears 5 DLC on PC with the CX as the monitor at 30FPS, then Doom Eternal on my laptop at 144fps, then Doom Eternal at 60fps on the TV.....

the 30FPS was noticeably slow in Gears, the 144FPS Doom was crazy, and then going back to 60FPS for Doom at 4k was so noticeable

I used to barely be able to notice framerate....having it displayed on the screen does play a big part in that of course

Hi there - has anyone used the secret menu to enable BT 2020 color profiling? It really changes the LG CX's colors to be dramatically better (closer to the HDR BT 2020 profile). I have been using it on HDR Game modes for Series X and PS5 (as everything is HDR, essentially even though faked in the menu).

My question is - if you do use it, for non HDR sources like the Series X menu (or cable TV) do you use it there too?

I would personally not touch anything like that, as the CX should already offer the best color space for SDR (Rec. 709) and HDR (BT.2020) automatically, even in SDR/HDR Game modes, as long as you select Color Gamut: Auto for both.

I followed the settings in the OP for my 65" LG CX, but Ghost of Tsushima is a little too dark for my taste. The Six Blades of Kojiro's final duel in a cave was so dark that I couldn't see anything other than the lanterns.

Hello!

Have a weird one for you guys, sorry if it's been mentioned before.

I have an LG C9 with a PS5 and an Xbox Series X plugged in. On my PS5 I've been having a lot of issues with certain things. For example, when I go into the YouTube app on the PS5, I get flickering on the sides and bottom, as if the screen isn't positioned correctly, but I'm not finding any way of adjusting it.

Also (and this is the biggest one for me) my soundbar is having a heckuva time. The sound is majorly tinny in some apps and games, while fine in others... When I loaded up HBO Max last night to watch Wonder Woman, none of the sound effects in the HBO Max app were working. I started the movie and it was dead silent for a little while. It finally got to a scene and started playing some minor voices and such, but I had to switch back to my Xbox in order to hear all of the talking, music and sound effects.

This is the soundbar I have, if that helps: https://www.bestbuy.com/site/samsun...woofer-charcoal-black/6327857.p?skuId=6327857

I originally had it connected via optical cable but switched it to HDMI ARC.

I have a question about the 3600 max luminance as well. For example - when properly using the Dynamic Tone Mapping settings as "On" and doing the calibration on Series X, when I use the recommended 3600 nits max luminance in something like say Gears of War 5 - the sun in the image of the Gears of War HDR brightness screen and clouds lose all the details. Is the screen just not accurate or something?

I don't really understand the recommended settings for PS5 Valhalla in the OP. 3.600 max luminance? (It's a CX)

Basically, 3,600 nits max luminance is the exact point where the regular HDR Game (and HDR Cinema) preset, with the default tone mapping curve the TV applies, starts clipping (losing) highlight details.

This number seems high, but it's correct: most HDR movies and TV shows are mastered with a Peak HDR Luminance target (embedded in their metadata) of 4,000 nits, so the TV is set up to accommodate them and then scale them down to its actual 750-800 nits of real Peak HDR Luminance.

Using the Xbox HDR Calibration app, we've discovered that highlights actually start to clip from 3,600 nits onward, which makes that value even more precise, and, as HDTVTest also found, the same happens with the PS5 HDR Calibration app.

Reference images used as backgrounds in those in-game HDR sections are often misleading.

Try calibrating HDR with the Xbox HDR Calibration app: on the second and third patterns you'll need +50 ticks to the right (starting from all the way to the left) for the pattern in the highlight to vanish completely, and Vincent Teoh from HDTVTest measured with his $10,000 reference HDR monitor that +50 ticks equals an actual 3,600 nits.

This applies to both the HDR Game and HDR Cinema presets when used with Dynamic Tone Mapping or Dynamic Contrast, On or Off.

When using HGIG, the TV stops doing any tone mapping and leaves all of this "in the hands" of the games, so anything higher than 800 nits, the TV's real capability, will just be cut off.

That's also why HGIG should NOT be used for Movies...
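To illustrate the difference described above, here is a minimal Python sketch comparing a hard clip at the panel's peak (roughly what happens when the TV does no tone mapping) with a simple roll-off that squeezes highlights up to 4,000 nits into the panel's range. The linear roll-off, the knee position and the function names are assumptions for illustration only; this is not LG's actual tone mapping curve.

```python
def hard_clip(scene_nits: float, panel_peak: float = 800.0) -> float:
    """No tone mapping: everything above the panel peak is cut off."""
    return min(scene_nits, panel_peak)


def roll_off(scene_nits: float, panel_peak: float = 800.0,
             content_peak: float = 4000.0, knee: float = 0.75) -> float:
    """Illustrative tone mapping: pass through below the knee, then
    compress [knee, content_peak] linearly into [knee, panel_peak].

    The knee at 75% of panel peak and the linear shape are assumptions,
    not the curve the TV really uses.
    """
    knee_nits = knee * panel_peak
    if scene_nits <= knee_nits:
        return scene_nits
    # Fraction of the way from the knee to the content peak (capped at 1).
    t = (min(scene_nits, content_peak) - knee_nits) / (content_peak - knee_nits)
    return knee_nits + t * (panel_peak - knee_nits)


if __name__ == "__main__":
    for nits in (200, 800, 1000, 3000, 4000):
        print(f"{nits:>4} nits in -> clip: {hard_clip(nits):6.1f}, "
              f"roll-off: {roll_off(nits):6.1f}")
```

With the hard clip, a 1,000-nit and a 3,000-nit highlight both come out at 800 nits and become indistinguishable, which is the detail loss a movie mastered for 4,000 nits would suffer. With the roll-off they stay distinct, at the cost of being displayed dimmer than mastered.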

So to clarify, is Cinema the best picture mode for watching movies/shows via streaming services? That is, in HDR?

I will be honest, it seemed great at first, but recently I am noticing more grain (picture noise?) with it. And I am talking about content that is in 4K/UHD on Disney+ or Netflix.

I didn't make any changes to my settings, so I'm not sure why I am noticing more grain now. But it's really concerning me. Should streaming be on the HDR Effect picture mode instead of Cinema?

Yes it is (using the recommended settings for webOS HDR in the OP, including Dynamic Tone Mapping: On for it).

The film grain you see is most probably the film grain added by the creators of the movies themselves.

HDR Effect tries to mimic HDR using SDR content, but it mostly destroys picture accuracy compared to the original content.

Yes it is (using recommended settings for webOS SDR in the OP, including Dynamic Tone Mapping: On for it).

The film grain you see is most probably the film grain added by the creators of the movies themselves.

HDR Effect will try to mimic HDR using SDR contents, but mostly destroying picture accuracy compared to original content.

Using the recommended settings for webOS SDR? You mean using the settings for webOS HDR (Cinema), right? Yes, I did go off the recommended settings on the front page for the webOS HDR (Cinema) settings.

Yes, I understand a lot of filmmakers will use grain for films, and when I first saw it last night (American Horror Story) I was certain that is what it was, considering the type of content. But then I also checked Cobra Kai. I watched Cobra Kai previously, without grain (although it was on my E6), and I still saw it here.

Also, I checked Disney+. I recently watched Godmothered and don't really remember much in the way of grain for that movie, but I did notice it more last night. Really strange, as I didn't notice this recently, so I'm not sure why it has changed.

I did change my Netflix playback bandwidth setting from 'Auto' to 'High', to ensure it is always doing 4K when the material has it. So maybe that will make a difference. But it doesn't explain Disney+, as I was already set on the highest playback.

Make sure your sharpness is set to 0.

Ok, I will double-check that. (Don't have the TV on now). So just for clarification, the higher the sharpness, the more it brings out imperfections. When you have a lower sharpness, it blurs it more? From what I remember, it might be set at 10, so that might be causing it.

0-10 doesn't seem to add any sharpness, but on resolutions under 4K it adds post-processing anti-aliasing. Vincent Teoh says Sharpness 10 does add sharpening, so put it on 0, and while I put my faith in Vincent, the results I've seen confuse me as they seem beneficial. It'd be cool if he demonstrated this in a video and acknowledged the edge smoothing at 10 (I find it really noticeable on high-contrast edges in Animal Crossing on the Switch).

Sharpness 0

Sharpness 10

Do you know if this is something Vincent might be interested in taking a look at, EvilBoris? I understand he focuses on 4K material, but this can be a useful thing to know more about for those who play older systems, HD material, and the Switch.

Well, I do remember setting my Sharpness to a higher number (than 0), as I thought "Why wouldn't you want the picture to be more sharp?", not understanding the side effects. I don't know for certain if it's set at 10 or not, it might be 15, but I know I had set it within the recommended area on the first page (the grey numbers). I will need to check all my picture settings now, as well.

Yes, I see what you mean about the anti-aliasing. But it's not 'lines' I'm necessarily seeing, it does look more like grain. I guess a better description is that, if you look closer, it could be like small insects moving on the screen in the background. You don't notice it as much sitting back, but I didn't think I should notice grain at all when viewing sharp, 4K content. Again, there are exceptions where it's intentional, like with films, but I'm noticing it with everything.

I'll try changing the sharpness and see if that does anything.

Edit: Alright, made some adjustments. I am more confused now than ever before. I didn't know there were different Cinema settings. I have 'Cinema (User)' set up for my streaming. It is the base picture setting for Cinema, the only one showing when flipping through the different picture settings. However, depending on the content I watch, it may flip to Cinema (Dolby Vision) or Cinema (Home), and there may be other Cinema types. I understand the Dolby Vision one, but I'm not really sure where the (Home) one comes from.

I notice that each of those has its own settings, as well. When I went to Netflix (Cinema (User)), the Sharpness was 15. Putting that to 0 helped. Definitely noticed the difference watching Cobra Kai.

However, when I switched to Disney+ and tried Godmothered again, that switched to Cinema (Dolby Vision) and now I had a completely different setting of Sharpness 20.

Before changing, I decided to test another Dolby Vision movie, Avengers: Endgame. Like Godmothered, it of course was Cinema (Dolby Vision) with Sharpness 20. But unlike Godmothered, that background was crystal clear. I stood UP at my screen and didn't notice a hint of grain, even on dark screens.

I understand different movies can have different levels of grain depending on the intent of the movie maker, but with Godmothered being a brand new movie, and grain not being something you would think goes with this type of movie, why would the filmmakers make a point of having it in that movie and not in Endgame?

I'm not sure if I should set Cinema (Dolby Vision) at 0 or leave it alone. Again, it's at 20 right now.

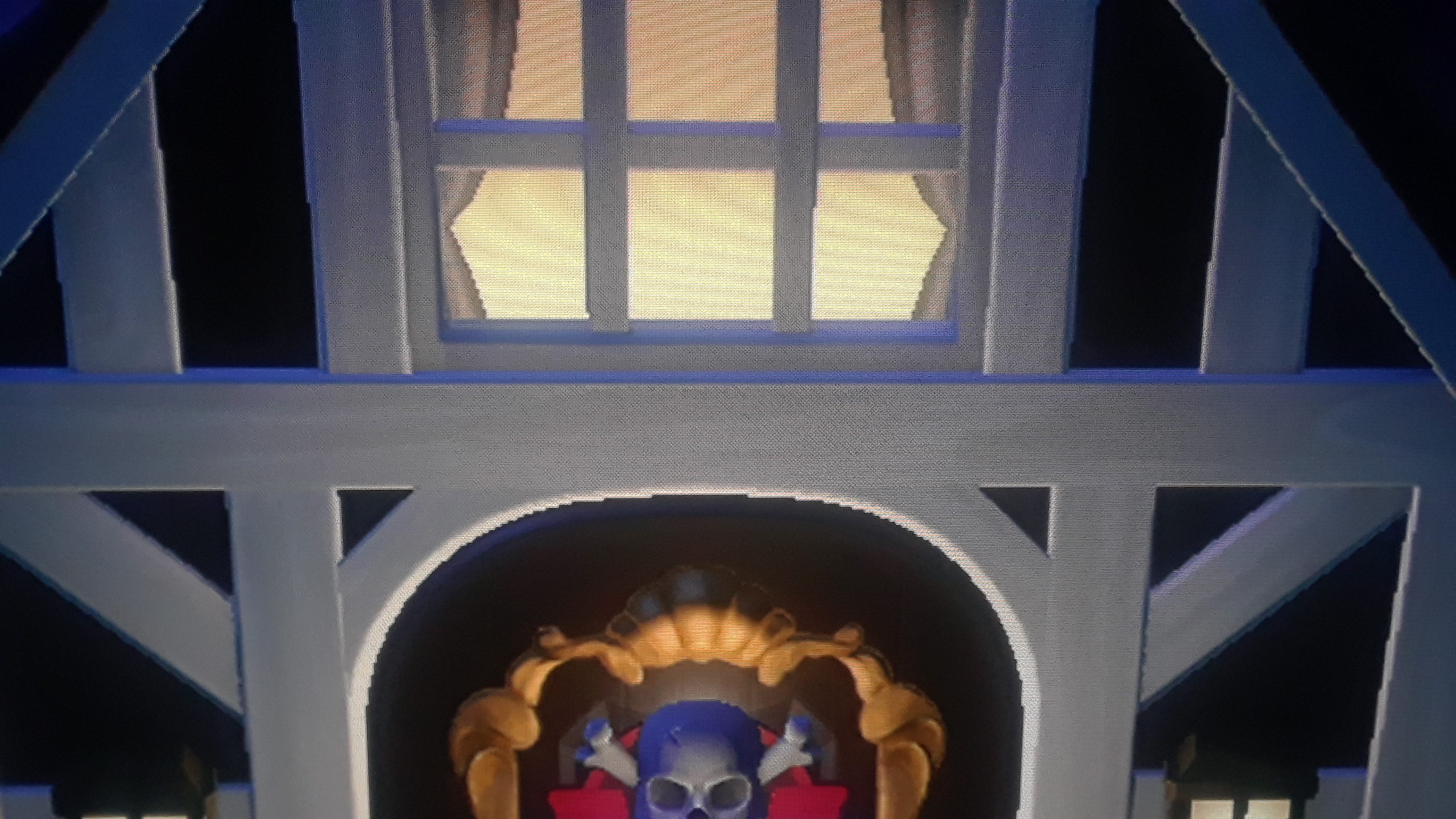

If you have a C9 or CX, you only need to set up each preset for each content type (SDR, HDR and Dolby Vision) for all signals (webOS, Xbox Video, Xbox Games, a possible separate Blu-ray player, etc.) by using the settings below (see each column to know exactly which preset to choose and configure separately for each variant).

Universally setting Sharpness to 10 is a good suggestion, as this would basically keep all native 4K fidelity while improving the upscaling of sub-4K content, with no negative effects or image degradation.

Also read the notes below the chart, which are very important, and remember to disable the Live+ service and AMD FreeSync in the TV's General settings (to avoid issues with VRR and Dolby Vision on Xbox).

Sorry, one more follow up regarding B7 - can you explain a bit more why Dynamic Contrast Low is better than Off for HDR Game Mode?

Yes.

The reason is that HDR UI elements usually have an intentionally dimmer luminance to avoid image retention or permanent burn-in on OLEDs, but Dynamic Tone Mapping will just read those as "dimmer than they should be" and raise them again.

Also keep in mind that the PS5 will take anything SDR and just "contain" it in an always-on HDR signal, often degrading its quality.

Dynamic Contrast at Low in HDR Game, even if it's just regular DC, will improve HDR dynamics with no negative effects at all, so it's still recommended over having it totally Off.

See Option 1b doc for all the details... ;)

Does this Sharpness 10 SDR enhancement work for 2017 models?

I've tested the HDR Game preset with DC: Low and Off using HDR test patterns with both 1,000 and 4,000 nit targets, and with DC: Low both the grayscale and color luminance ranges expanded their visible highlights compared to Off.

Just by increasing DC to Medium, the same patterns already started to clip those expanded highlights a bit, and they clipped a lot with DC: High.

In short: DC Low will just provide a better/more accurate HDR range, while DC Medium and High will further increase Luminance from there, at the cost of diminishing/degrading that range.
