Quantum Take

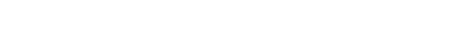

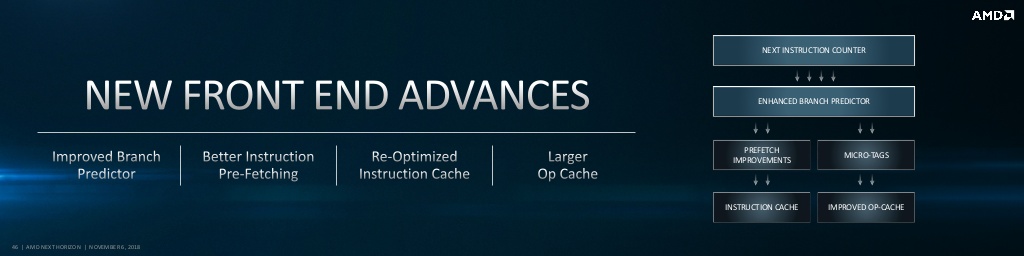

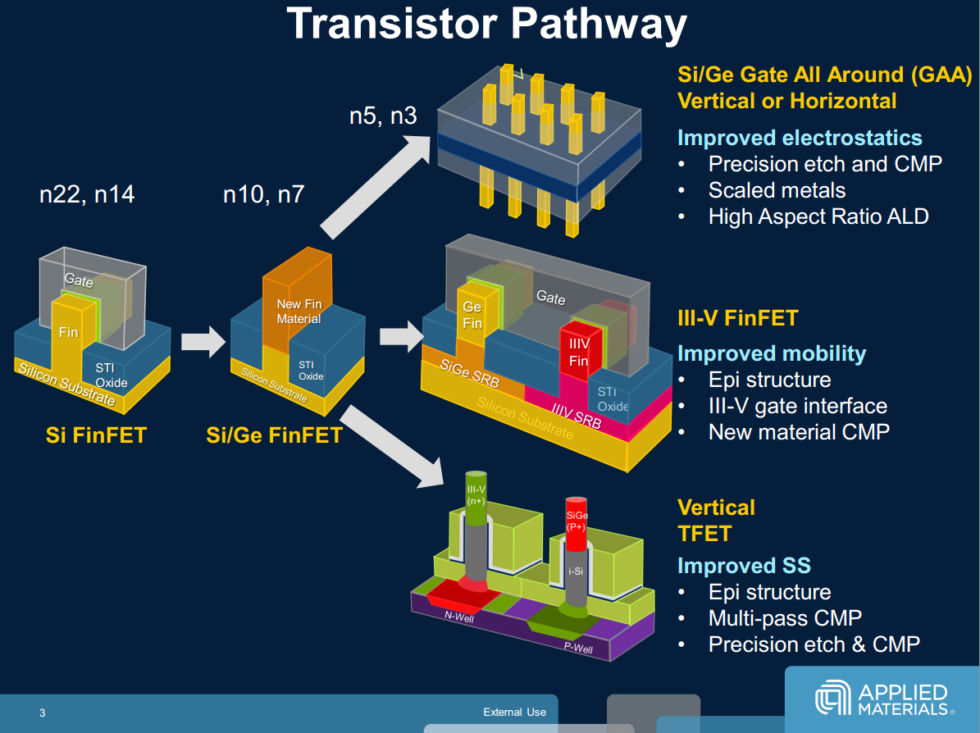

It feels remiss not to talk about the technologies that likely won't be included in next generation consoles given the industry is on the verge of many inflection points. As the industry grapples with the death of traditional node scaling, it is looking to exotic materials, new transistor topologies, quantum devices, innovative cooling techniques[jg], and 3D packaging to keep performance moving ahead[jk].

As it stands now, many new technologies are on the precipice of hitting commercialization. While none of them meet the economics or volume needed for new consoles, by the time we are having this discussion about the successors to new consoles, many of them may be commonplace. Some may be essential to making performance leaps, and perhaps even show up in performance focused revisions this generation.

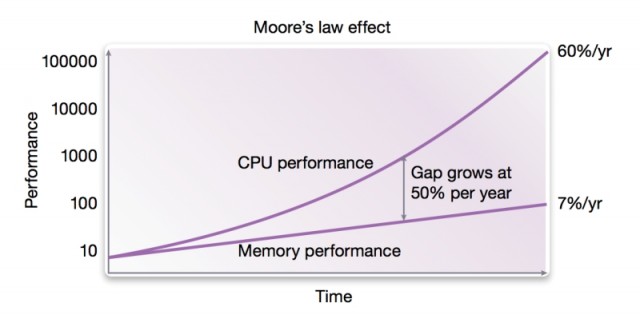

The memory hierarchy as we know it is about to be upended. All major manufacturers plan to implement MRAM in the coming years, and it could begin to replace SRAM for caches, offering similar density and lower power[ib]. Optane DIMMs are filling a speed gap that exists between traditional DRAM and the fastest SSDs[ic]. This is all on top of the disruption that SSDs have wrought over the past decade. These are all necessary changes too, as processor performance increases continue to outpace gains made in memory[id].

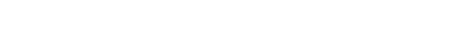

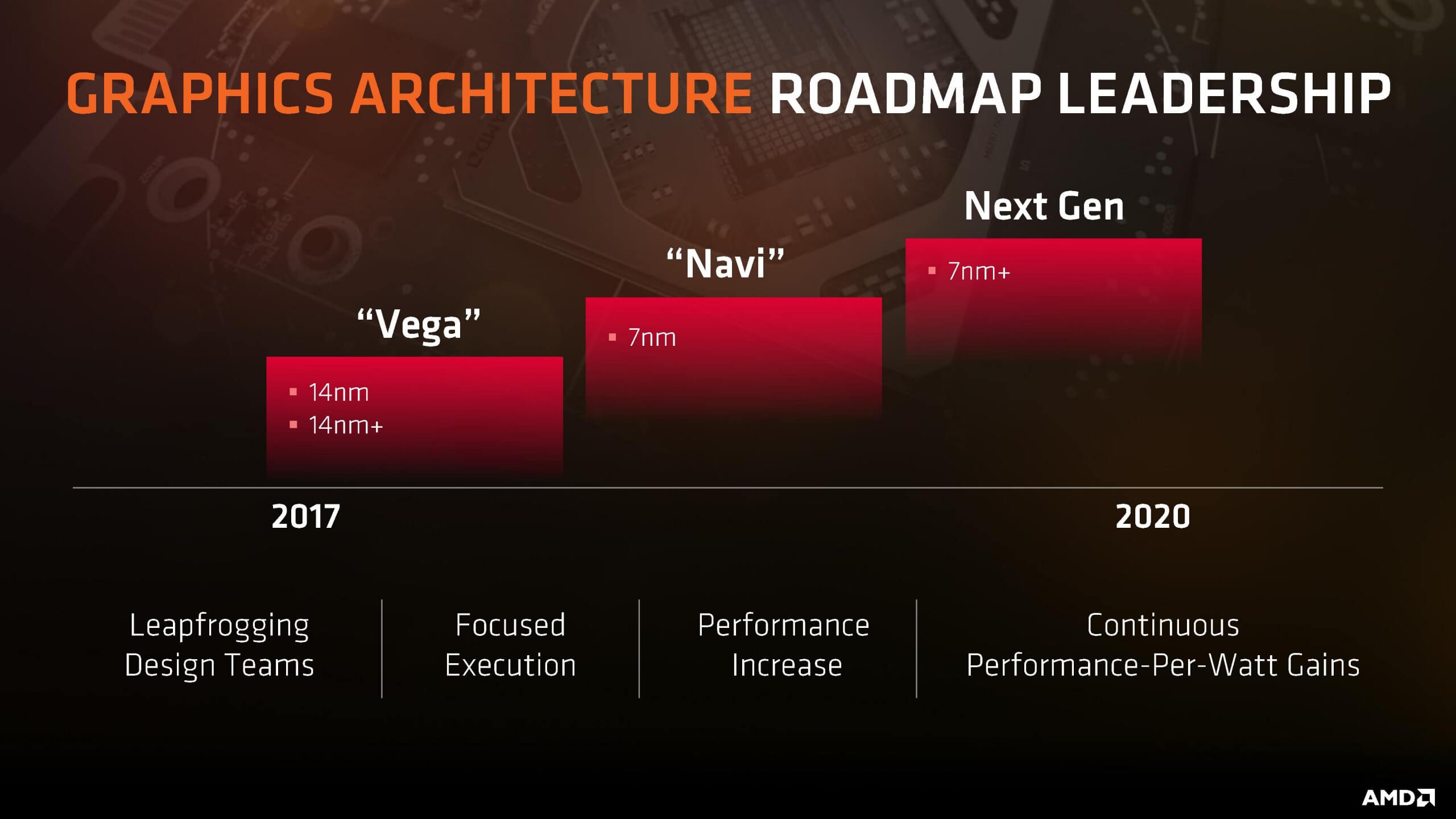

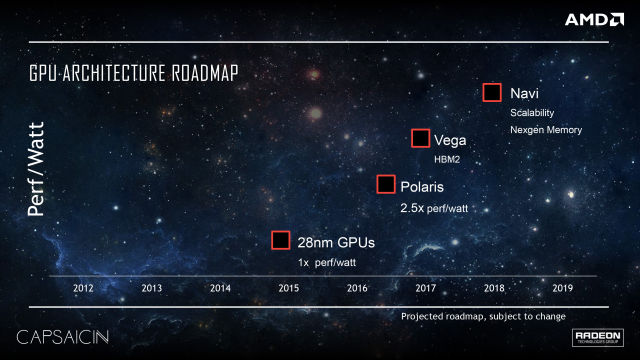

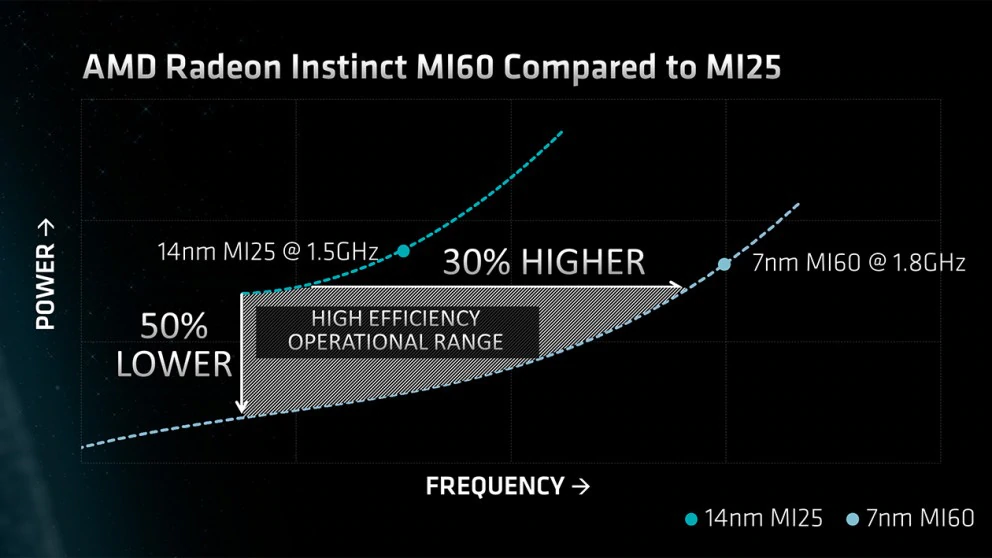

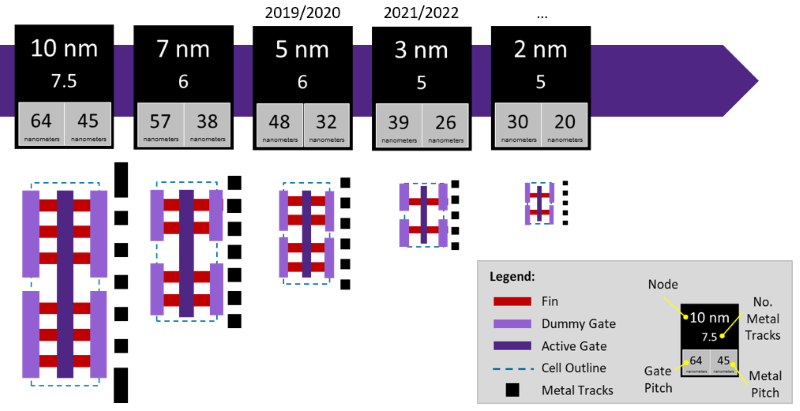

Silicon foundries are currently undergoing a transition to extreme ultraviolet lithography (EUV) that has been anticipated for well over a decade[ij,il]. In addition to enabling further transistor density improvements, it will allow fewer processing steps for each wafer, opening up the possibility of greater throughput and fewer chances to introduce defects. TSMC plans to bring their first EUV process online this year with 7nm+.

Current roadmap of silicon node feature sizes

Foundries are also planning new transistor geometries in order to gain better control of the tiny channels that form when a gate switches on. Gate-All-Around Field Effect Transistors (GAAFETs)[ii] will add a fourth enclosing side to the three-sided gates of the FinFETs now commonplace at all the major foundries. These shapes can also be stacked, further increasing density and transistor drive capability. While these devices may not be ready for a mid-cycle refresh, expect them to be part of the equation by the time these consoles are in their twilight years.

Silicon is also reaching its end of the road. An atom is only so big, and as you fight quantum effects to push things closer and closer, you still have to reckon with the fact that there are faster semiconductor types than silicon. They may not have reached the scale that silicon has, but many will work diligently to make it so. Quantum effect devices will earn their place in the field as well.

There is a lot happening from the materials side as well. Instead of making a transistor work faster, your alternative is to make its job easier. That means getting more efficient at removing heat, or coming up with ways to make its trace shorter with advanced packaging technologies or less resistive interconnects. TSMC is doing a lot to improve the performance and variety of packaging solutions that will lower the barriers to stacked memory and die solutions[if-ih,ik,je]. One day, solutions like HBM and WideIO won't be niche anymore.

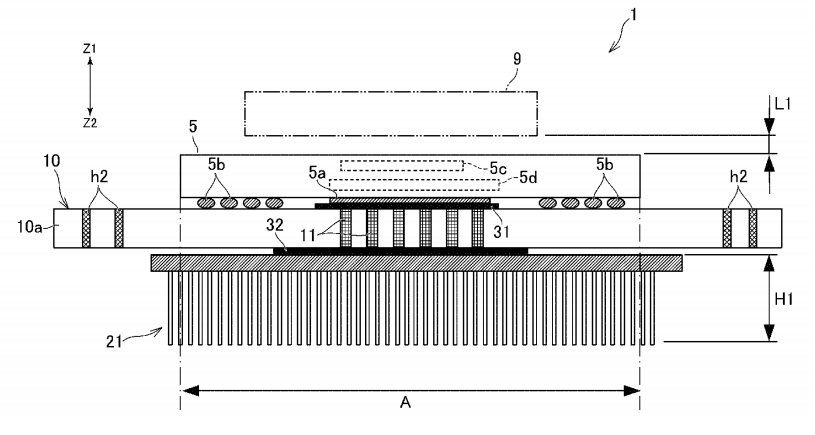

Sony is definitely interested in these new packaging technologies as well. A recent patent describes a System in Package (SiP) solution with stacked memory and a heatsink mounted to the reverse of the printed circuit board (PCB), with conductive heat paths through the PCB[ie]. The language of the patent mentions proximity to sensors and antennas, so it is potentially part of a PSVR2 device.

There are many more near and mid-term advances that could be covered, but suffice to say that Sony and Microsoft will count on advances like these if they don't want console power trends[im] to flatline.

Split Personalities

Having a balanced console design that is easy to manufacture is key to having a good console launch. There are numerous examples of consoles focusing on the wrong things: the Sega Saturn's 3D power deficit and the Nintendo 64's reliance on cartridges both created an opportunity for the original PlayStation. Similarly, the PlayStation 3's complicated architecture cost Sony its crown just one generation after the most successful console ever. Of course, these weren't single-point failures, but they did create windows of significant opportunity for their competitors.

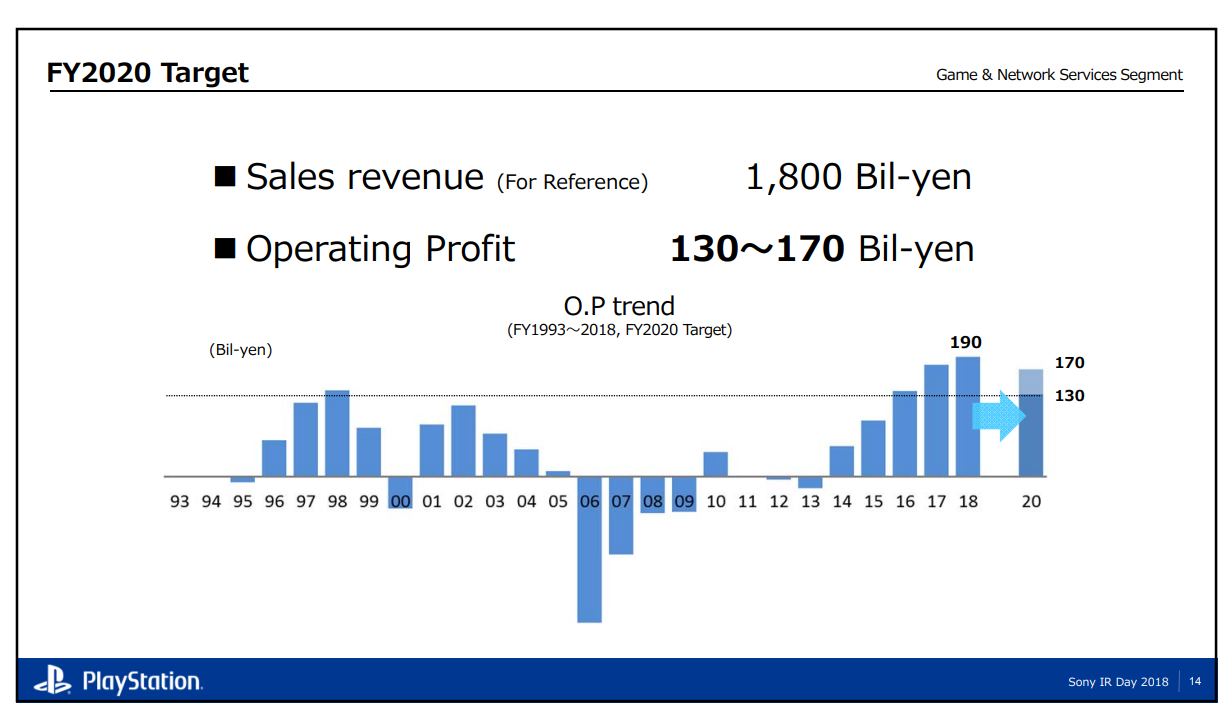

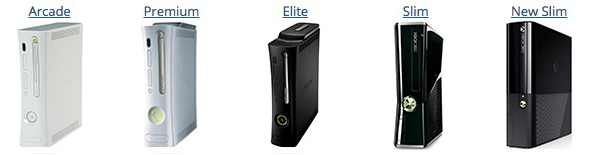

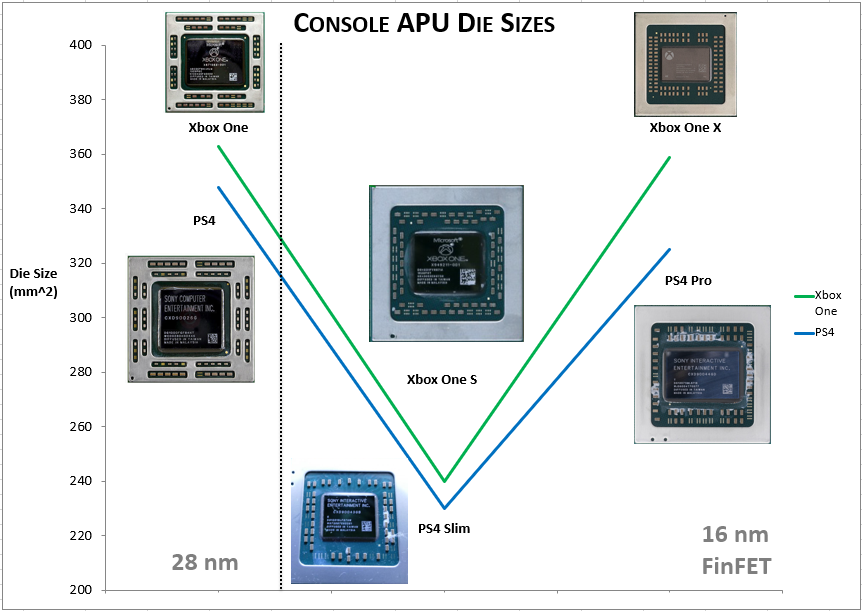

Post-launch console revisions have become increasingly important over the generations as well, as the more opportunities there are to reduce cost, the more flexible the console makers become at being able to respond to the market and create demand. Microsoft reduced the costs of the Xbox 360 by eventually combining the Xenon CPU and Xenos GPU into a single APU[iw].

Sony reduced costs of the PS3 by shrinking Cell and RSX, but also converting their GDDR3 memory to GDDR5 and halving the bus width[io]. They could well do the same by releasing a PS4 super slim revision with GDDR6 on a 128-bit bus, simultaneously allowing them to do a pipecleaner for a GDDR6 controller design on 7nm and increase the order size for GDDR6 memory, which may help them broker a better deal.

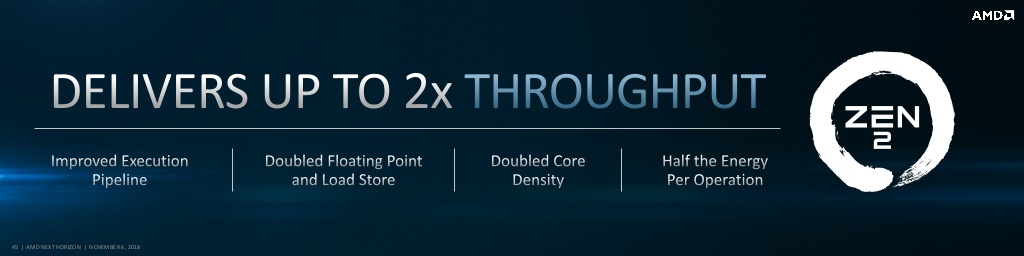

It is no coincidence that both Sony's and Microsoft's consoles this generation started with monolithic APUs and unified memory pools. Doing the same next generation is likely the most cost-effective approach, and unlike previous generations, they may not be able to count on die shrinks to lower the costs of console revisions.

This design mentality also pertains to console revisions. When designing the next generation, they should have in mind what a half-step console revision will look like, and whether that information can inform the architecture decisions they are making right now. For instance, if they can't count on node shrinks to make FLOPs cheaper, they may be forced into a GPU chiplet scenario where they double up on the GPU in the base console design.

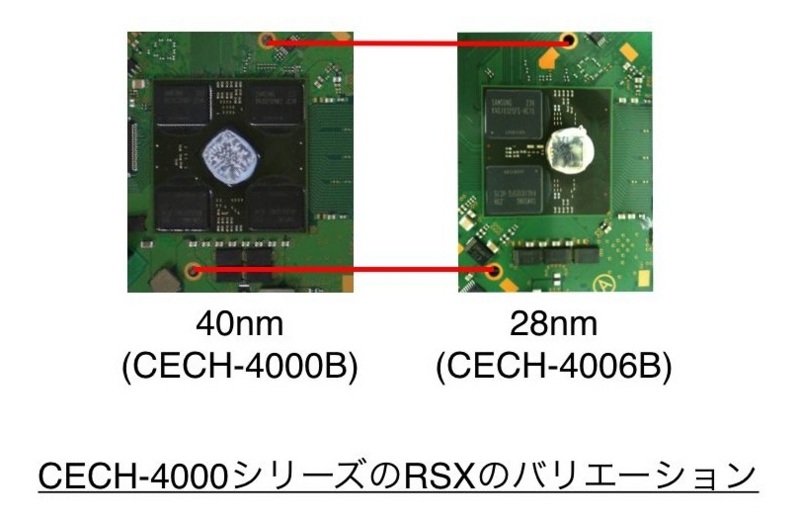

PS3 revision showing the reduction in overall memory package thanks to a GDDR3->GDDR5 conversion

AMD has warned that a GPU chiplet solution may suffer the same fate as current SLI/Crossfire setups, with poor scaling, microstuttering, or other issues[fo]. However, AMD is also cooking up alternatives to Alternate Frame Rendering (AFR), which is now the standard approach for these setups. Split-frame rendering divides the frame into multiple portions between the available GPUs[ip], and may be a viable and performant alternative[ld]. AMD is also putting significant research and development into interposers and their successors, which should help chiplet-based designs move beyond being a nascent solution[ix-jb].
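To make the difference between the two approaches concrete, here is a minimal sketch of how work could be dealt out in each case. The function names are hypothetical, and the equal horizontal bands are an assumption for illustration; a real driver would likely balance the split by load rather than by row count.

```python
from typing import List, Tuple


def afr_gpu_for_frame(frame_index: int, gpu_count: int) -> int:
    """Alternate Frame Rendering: whole frames are dealt out round-robin."""
    return frame_index % gpu_count


def sfr_bands(frame_height: int, gpu_count: int) -> List[Tuple[int, int]]:
    """Split-frame rendering: every GPU draws one band of the same frame.

    Returns (first_row, end_row_exclusive) per GPU. Equal-height bands are
    an illustrative assumption; leftover rows go to the first bands.
    """
    base, extra = divmod(frame_height, gpu_count)
    bands = []
    start = 0
    for gpu in range(gpu_count):
        rows = base + (1 if gpu < extra else 0)
        bands.append((start, start + rows))
        start += rows
    return bands


if __name__ == "__main__":
    # AFR: frames 0..3 on two GPUs alternate between GPU 0 and GPU 1.
    print([afr_gpu_for_frame(i, 2) for i in range(4)])
    # SFR: a 2160-row (4K) frame split across two GPUs.
    print(sfr_bands(2160, 2))
```

With AFR each GPU needs the full scene for its own frame and the frames arrive interleaved, which is where the pacing problems tend to come from. With split-frame rendering all GPUs contribute to the same frame, so the hard part becomes dividing the work evenly.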

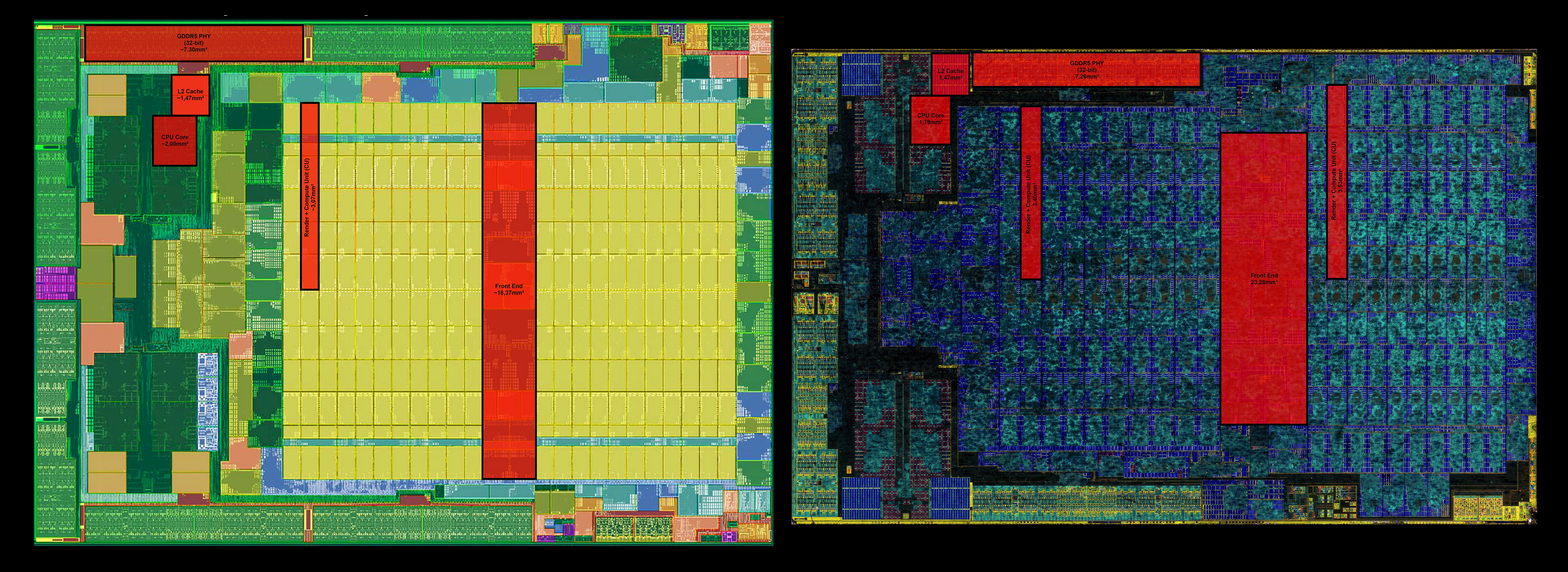

Comparison of the PS4 Pro and Xbox One X APU die

In retrospect, both Sony and Microsoft began signaling the possibility of console revisions fairly early on in the generation[ir,iu-iv,jr]. Seen as a way to prevent migration of users to high-performing PCs mid-generation[it,jm], they've become important steps that have helped bring new users to their respective platforms, and represent a significant portion of the ongoing sales for each platform[iq]. What was seen as novelty may turn into an outright expectation within the span of a generation. Both need to at least prepare a contingency plan for the other doing a mid-cycle refresh.

Holding Mass

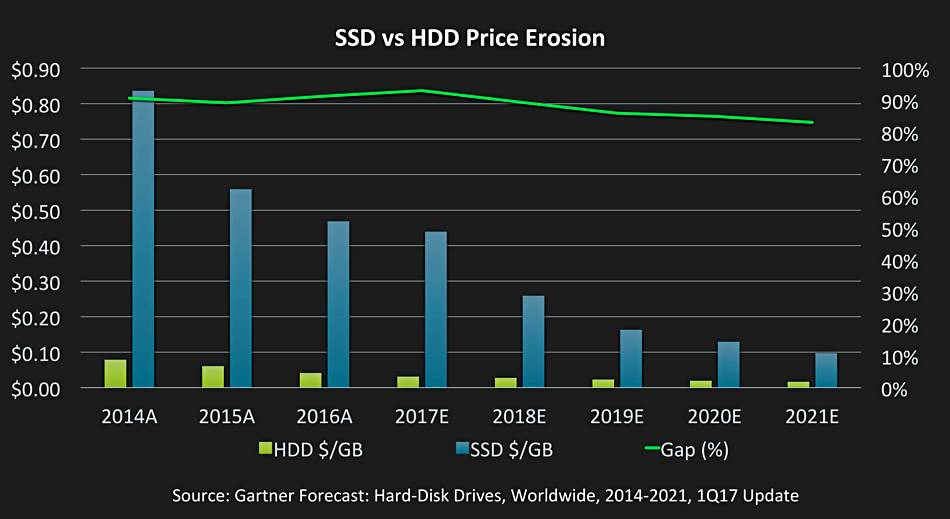

The PlayStation 4 and Xbox One both launched with 500GB hard drives. These days, that's barely enough to fit a couple of games[jj], and you can get nearly that much storage for your Nintendo Switch for under $100. While higher layer count Blu-ray discs may help keep games to only one disc[jl], clearly the console makers will need to evolve their storage solutions for a new round of consoles.

However, the choice is about more than just increasing storage space. Sony and Microsoft have a real opportunity to drastically cut game load times and change how assets are stored and loaded. Microsoft's Spencer has directly commented that these are issues they're actively considering for the next platform[be]. We also know they're investigating these technologies based on job postings[jn]. Current generation consoles do show some benefit to game loading times from SSDs[jy,kb], even on the antiquated SATA II/III buses inside the consoles.

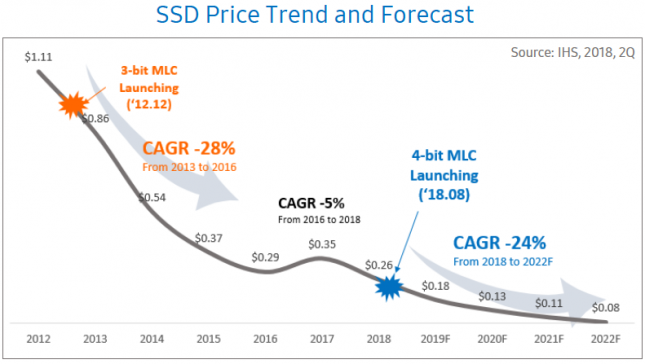

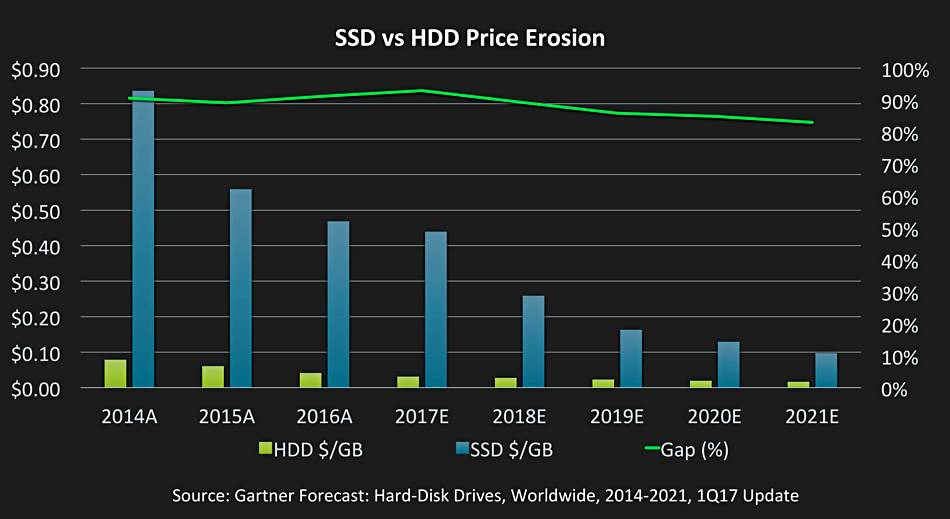

A Microsoft engineer has commented that current generation console designs fundamentally prevent them from being able to take advantage of the high read rates boasted by today's NVMe SSDs[jo]. That needn't be the case with next generation, and with the introduction of QLC NAND[jv] that will soon be pushing 128 layers[jw-jx], it's only going to get more affordable. It's also proving to be a reliable medium outside of the benefits of no moving parts[js-ju]. Even a relatively small allocation of 64GB to 128GB could be used as a scratch pad for games using a combination of AMD's HBCC and StoreMI technologies[fn,jp].

Exchange Rates

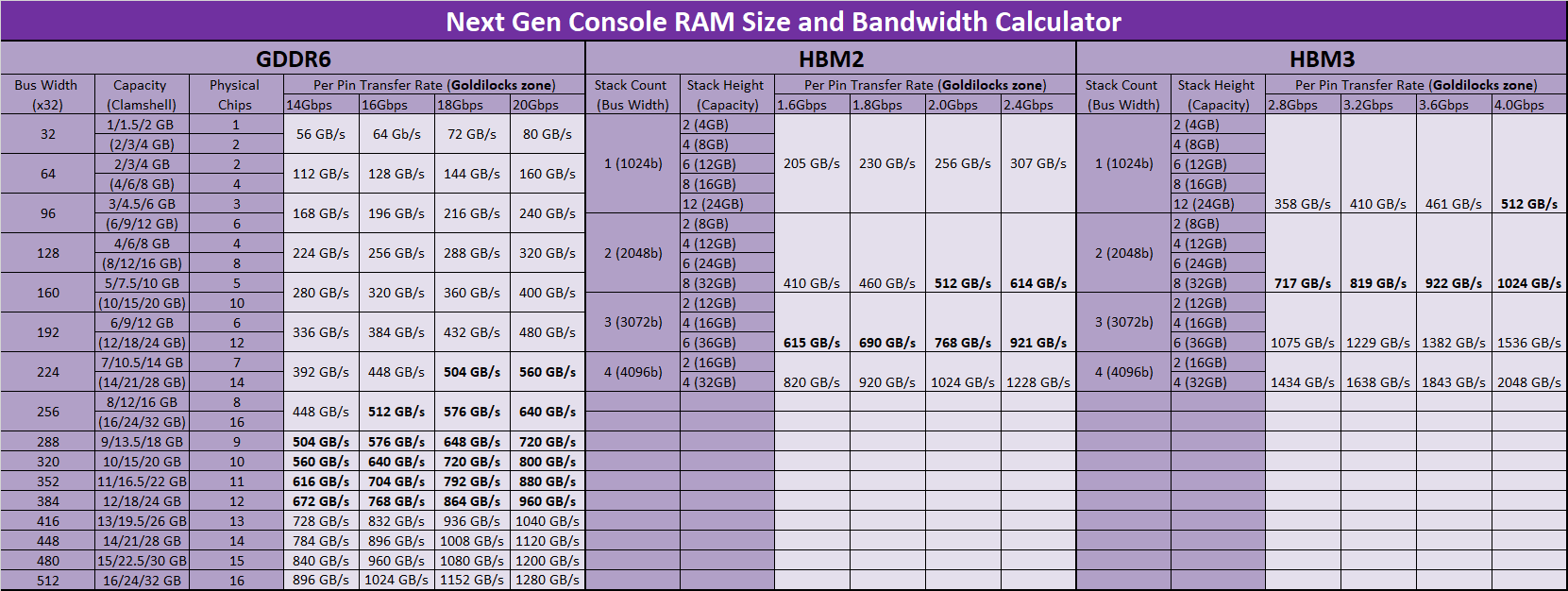

Outside of the CPU and GPU, DRAM technology has been the most intense area of focus around next gen consoles. Many consumers and developers went into last gen thinking the PS4 would have 2-4GB of RAM[la], only to find out it would receive 8GB after intense developer feedback[jy-ka].

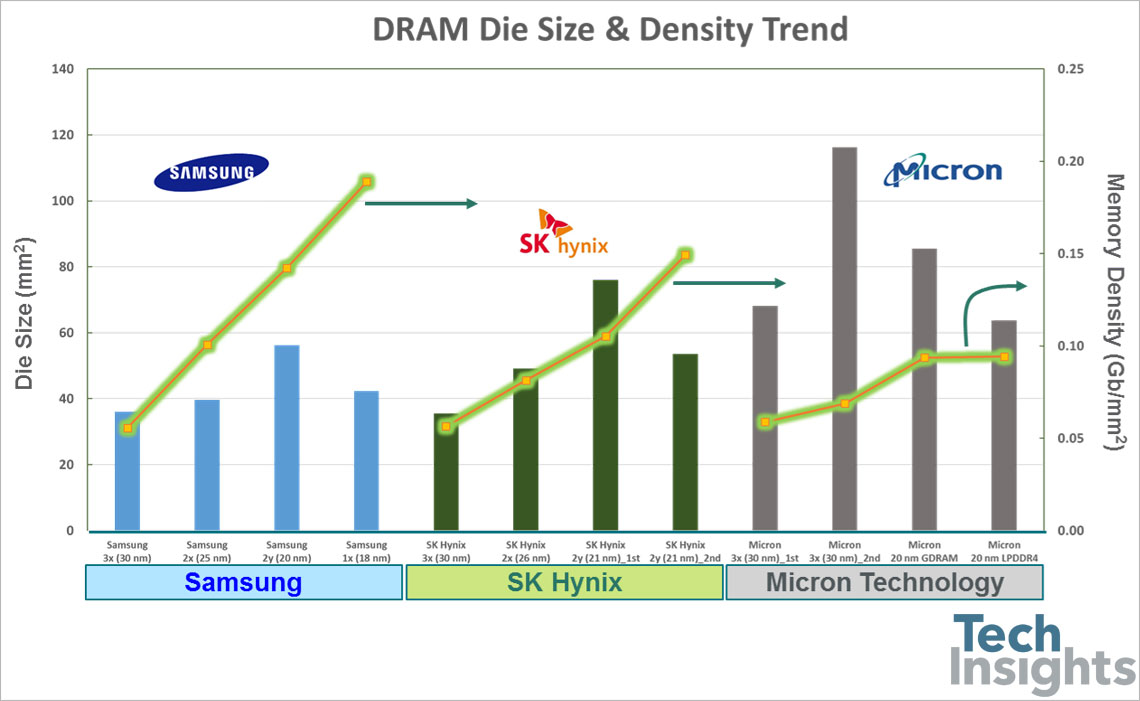

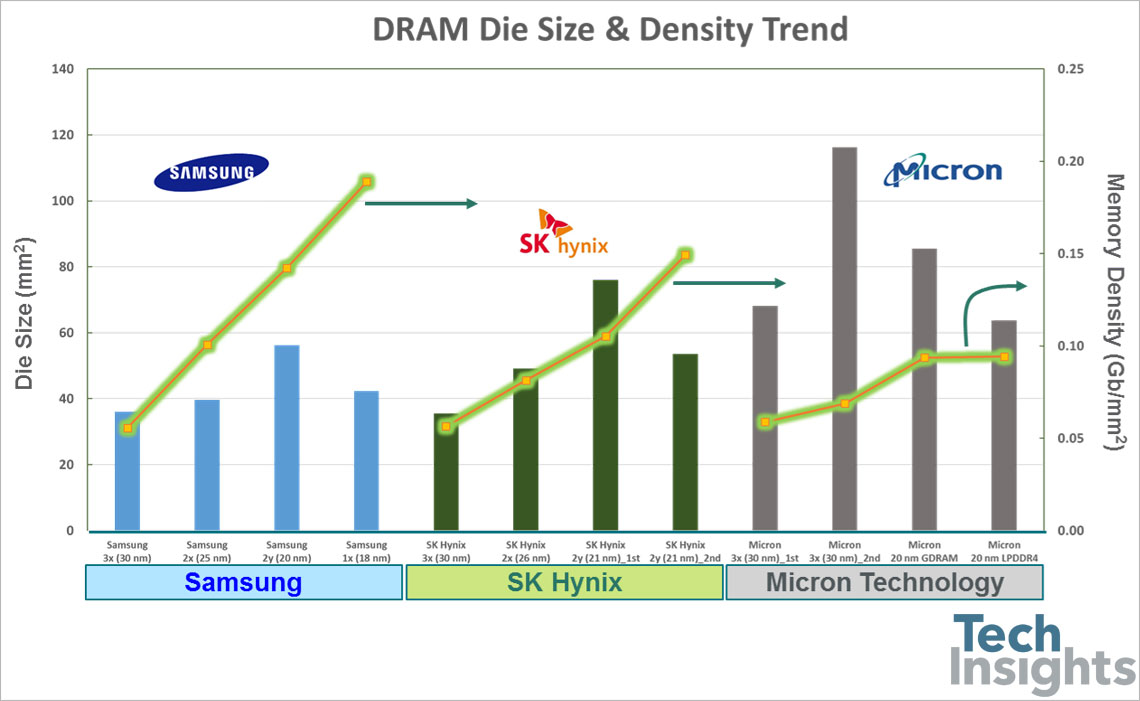

Current estimates for this generation's DRAM allocation range anywhere from 16GB to 32GB, which is probably fair since the most dense DRAM die has increased 4x in capacity since the launch of the PS4, meaning the same number of chips would result in a 32GB solution. Current generation VRAM buffers can eat up the entirety of an Nvidia card's 11GB memory[ha], and some PC benchmarks show that games benefit up until the 16GB of system RAM point[kq]. Thus, one can begin to see the argument for 32GB.

However, with the addition of HBCC and more advanced compression techniques in today's GPUs, 16GB to 24GB seems to feel pretty safe. This is especially true because games likely aren't going to be expected to go past 4K resolution this generation, unless you count VR games. There has been plenty of saber-rattling over GDDR6 being much more expensive than GDDR5, but the reality is much more tame[ke], and while a 10-15% bump isn't insignificant, it's not a doomsday scenario that pencils a console-maker out of a solution because the additive cost is too great[le-lf].

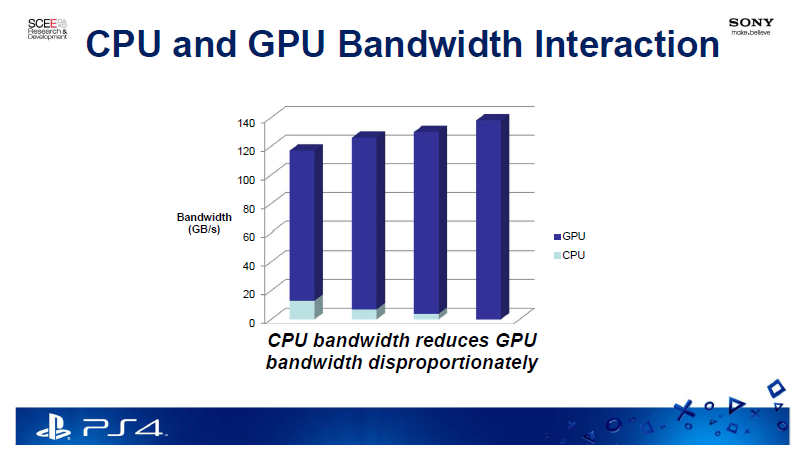

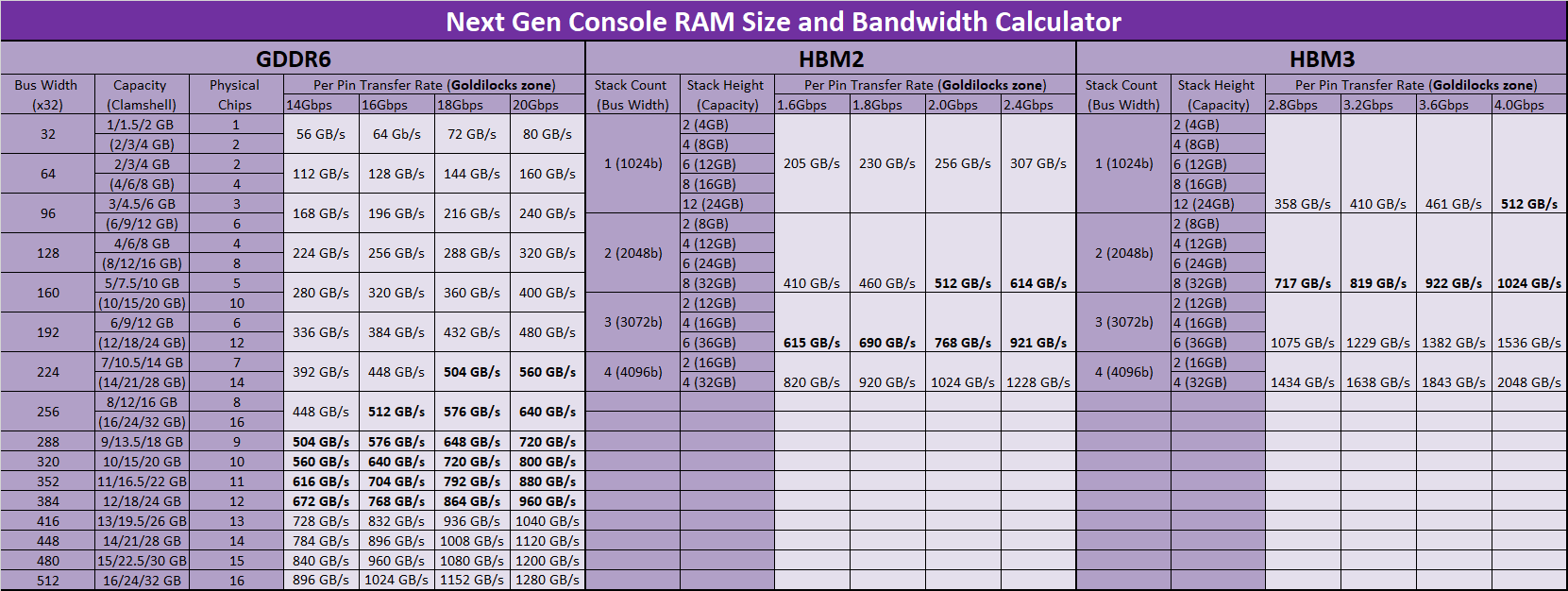

Memory capacity is only part of the story, however. Memory bandwidth matters too, especially when Vega is considered to require all of its near 500GB/s of bandwidth[kx], and a Zen CPU likely wants 50GB/s on its own, plus an overhead tax for a shared pool. This has caused many to hope for an HBM2 or HBM3 solution. However, thanks to GDDR6's enhanced data rates over GDDR5, it can simply wag its tongue at HBM2. GDDR6 can achieve the same bandwidth as HBM2 in a more cost effective manner, perhaps even at 4-stacks.

GDDR6 at a 256-bit to 384-bit interface will equal the data rates HBM2 can achieve in 2 or 3-stack situations, perhaps at as little as one third the cost. You burn more power to run this interface, but only a fraction of that power is actually consumed on the die itself where you care about the heat. HBM3 may change this equation[bl], but it likely won't be ready for even a 2020 console, and neither it nor HBM2 can likely hit the volumes needed for consoles anyway[bk]. It's also important to remember that Microsoft considered HBM for Scorpio and actively rejected it because of perceived drawbacks[kd]. One of the reasons cited was "access granularity" which GDDR6 actually improves on by being able to support two 16-bit channels per chip[kg], which could help resolve some of the CPU/GPU bandwidth interaction issues as well.
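The bandwidth comparison is easy to check with back-of-the-envelope math: peak bandwidth is just bus width times per-pin data rate, divided by 8 to get bytes. The sketch below assumes 16Gbps GDDR6 and 2.0Gbps-per-pin HBM2 on a 1024-bit interface per stack; those speed grades are illustrative picks, not figures from the sources cited here, and the function name is hypothetical.

```python
def peak_bandwidth_gbs(bus_width_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s = bus width (bits) * per-pin rate (Gb/s) / 8."""
    return bus_width_bits * pin_rate_gbps / 8


HBM2_STACK_WIDTH_BITS = 1024  # interface width of a single HBM2 stack
GDDR6_PIN_RATE_GBPS = 16.0    # assumed speed grade, for illustration only
HBM2_PIN_RATE_GBPS = 2.0      # assumed speed grade, for illustration only

if __name__ == "__main__":
    for bus_width, stacks in ((256, 2), (384, 3)):
        gddr6 = peak_bandwidth_gbs(bus_width, GDDR6_PIN_RATE_GBPS)
        hbm2 = peak_bandwidth_gbs(stacks * HBM2_STACK_WIDTH_BITS, HBM2_PIN_RATE_GBPS)
        print(f"GDDR6 {bus_width}-bit: {gddr6:.0f} GB/s | HBM2 x{stacks}: {hbm2:.0f} GB/s")
```

Under those assumptions the 256-bit and 384-bit GDDR6 configurations land on the same peak numbers as 2 and 3 HBM2 stacks respectively. Swap in other pin rates to see how the comparison shifts.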

Still, Sony is involved with packaging techniques that could improve HBM yield[kl], and HBM2 recently underwent a spec revision that upped its maximum speeds[km]. It's certainly the most power efficient DRAM technology available that can achieve high data rates[ko]. Unfortunately, the base for the low cost HBM variant simply eroded[kn].

GDDR6 is a genuine improvement over GDDR5 and GDDR5X, increasing speed, lowering voltages, and improving energy efficiency per bit transferred[kj-kk]. It's already available in 16Gb modules[kh], and the spec allows for up to 32Gb modules[kg]. It may hit up to 20Gbps in the future[ki], and is already under active supply by all three major memory makers. Micron also projects tremendous growth for GDDR6 in game consoles over the next couple of years[kf], likely meaning at least one of the two console makers has committed to using it.

In the end, GDDR6 should not be viewed as a compromise. AMD has committed to using it[gu-gv], Microsoft is actively considering it[kc], and it can provide data rates nearing 900 GB/s in a 384-bit configuration. This is the exact same bus size as the Xbox One X, so we are not talking about uncharted territory for consoles. At 18GB or 24GB with 12Gb or 16Gb chips, it sits perfectly in the range of expected capacity without needing to do a clamshell solution like the original PS4, meaning it would actually have fewer physical die.
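The 18GB and 24GB figures fall straight out of the chip count. As a rough sketch, assuming each chip occupies a 32-bit slice of the bus (two 16-bit channels for GDDR6) and that a clamshell layout doubles the chip count by hanging two chips off each slice, the arithmetic looks like this. The helper names are hypothetical.

```python
def chip_count(bus_width_bits: int, chip_interface_bits: int = 32,
               clamshell: bool = False) -> int:
    """Number of DRAM packages needed to populate the bus.

    Assumes one chip per 32-bit slice, or two per slice in clamshell mode.
    """
    chips = bus_width_bits // chip_interface_bits
    return chips * 2 if clamshell else chips


def capacity_gb(chips: int, density_gbit: int) -> float:
    """Total capacity in GB for a given chip count and per-chip density in Gb."""
    return chips * density_gbit / 8


if __name__ == "__main__":
    # Launch PS4: 256-bit bus, clamshell, 4Gb GDDR5 chips.
    ps4_chips = chip_count(256, clamshell=True)
    print(ps4_chips, "chips ->", capacity_gb(ps4_chips, 4), "GB")

    # Hypothetical next gen: 384-bit bus, no clamshell, 12Gb or 16Gb GDDR6.
    next_chips = chip_count(384)
    for density in (12, 16):
        print(next_chips, "chips ->", capacity_gb(next_chips, density), "GB")
```

The same helper also covers the 32GB case mentioned earlier: keep the PS4's chip count and quadruple the density to 16Gb.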

Tracing a Path

Nvidia set the graphics world ablaze when they announced their RTX line of graphics cards with circuitry dedicated to real-time ray tracing. Ray tracing has been viewed as the holy grail of real time graphics[kw], and has been the de facto standard of computer animated movies for over a decade. Perpetually dismissed as too computationally expensive, it's finally getting some real consideration, thanks to the help of dedicated "RT" cores and AI assisted denoising techniques[kv-kw], which compensate for the low ray count the hardware is capable of producing.

Microsoft for its part also announced a DXR extension to DirectX, giving developers an API to enable ray-traced graphics in their games. In the aftermath of the announcement, a few prominent developers in the industry immediately pegged it as a "next-next gen" technology[ku], much to the consternation of PS5 and NextBox hopefuls.

We shouldn't be so quick to dismiss it for new consoles, however. Phil Spencer has commented many times, going back to 2014[ky], that ray-tracing is something Microsoft is actively looking at[bg], and they have demoed it[kx]. Regardless of dedicated hardware, ray-tracing is something that's already done in games to a limited extent, including by Sony's Polyphony Digital of Gran Turismo fame[kz]. CPUs could also potentially assist in ray-tracing acceleration[ks-kt].

Example ray-traced image from Nvidia demo

AMD has stated they will certainly consider ray-tracing, but their view is that game support will lag until the feature is supported in the complete lineup of products[oa]. They already support it in software, both for professional applications[ny] and for real-time end uses[nz]. Additionally, an interesting paper surfaced a few years ago about how one would go about modifying AMD hardware to enhance hardware-enabled ray-tracing[kr]. The author of this paper is from the University of Texas at Austin, but was an active AMD employee at the time of publication. The author is also credited with a patent on building kd-trees[kp], something essential for ray traversal.

It's important to remind ourselves why ray-tracing is so important. On top of more accurate lighting, it also simplifies game artists' jobs, since they have to put less effort into adjusting the lighting of individual scenes. This goes back to having some sort of global illumination solution. Even if that's not by ray-tracing, next gen illumination methods are on the way. Unreal Engine 4 notably dropped SVOGI from support because PS4 and Xbox One couldn't handle it, but Tim Sweeney went on to explain that better, cheaper methods were available[lb]. Those are now here[lc], and next gen is going to be beautiful.

My CPU is a Neural-Net Processor

GPUs have also been getting attention lately for their ability to accelerate Artificial Intelligence tasks. It's important to distinguish between inference and training in this context[lg], both because they rely on different types of mathematical operations and because they benefit games in different ways. As for game rendering, we are talking about denoising, anti-aliasing, and resolution upscaling. AI is also being used to enhance the visuals of some retro games to remarkable effect[lj].

Game creation stands to benefit from AI as well. Specifically, it will help artists in procedural generation[lh-li], lowering the cost of game development in the long run. The announcement of DirectML as an addition to DirectX is also significant[lj-lk], as the goal will be real-time AI assistance in games[lm-ln]. This is something that Spencer has also talked about[bh], even to the extent that they will bake it into their silicon so that it can be dual-purposed for streaming and Azure tasks for server clients[bf].

Microsoft certainly has the silicon acumen to make an impact. From the numerous optimizations they made in the Scorpio silicon[lo-lp], to the baked in BC circuitry[hy], and even their own custom CPU initiative[lq], we shouldn't dismiss it when they say they'll be adding something to next gen Xbox silicon. Additionally, Navi is also rumored to have AI acceleration[fe]. AI may well play a bigger role in next gen than ray-tracing. DirectML will be featured at GDC 2019, so do pay attention.

Assuming Direct Control

Sony and Microsoft have spent the last three console generations perfecting their respective takes on the gamepad. From the original DualShock controller to the 'S' controller for the Xbox, there's a clear lineage to the DualShock 4 and Xbox One controllers we know and love today. Yet, with the emergence of competitive gaming and special use-cases, we've seen some meaningful variations this generation.

Microsoft created their own take on a professional gaming controller, the Xbox Elite. Sony has also licensed some prominent takes on the same for their PlayStation, the SCUF controller being perhaps the most notable. Microsoft has also created the adaptive controller, to assist those with motor function challenges, giving them a chance to play like everyone else[lr].

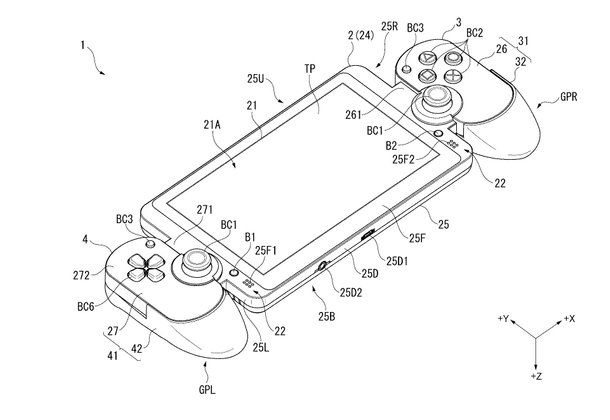

Image from Sony patent depicting a tablet with detachable controller halves

Sony also appears active on the patent front here. They have conceptualized a DualShock controller with a full touchscreen in place of the touchpad[lu]. Another features pressure-sensitive analog sticks[lr]. They also have a design for an attachment that projects an image onto your living room floor[lt], or has a camera inside it for positioning purposes[lv]. They're also working on improving the Move controllers with analog sticks[ls]. Let's not forget the tablet with detachable gamepad halves, their Switch-like solution[ab]. Microsoft has also patented a force feedback system for their analog triggers[ou-ow].

For now, it appears as though the DualShock 5 may provide backwards compatibility with the DualShock 4 while also bringing some new features to users. Sony asked users for DS4 feedback in 2017[on]. This will be an interesting space to watch.

Bloviated Pandering

While its sales haven't been lighting the world on fire, PSVR has still emerged as the leading VR platform in gaming, and Sony has been giving it some very highly regarded titles lately, including Beat Saber, Astro Bot, and Tetris Effect[lw]. If nothing else, PSVR appears to be a labor of love for the company, and the faithful are reaping the benefits.

If the work behind the scenes is any indication, Sony is very committed to PSVR for PS5. They've done several rendering tricks this generation to help improve the visuals[ms], including releasing the PS4 Pro, but there is an extensive patent trail of further tricks using foveated rendering and other optimizations to only render what the user is actually focusing on in the highest fidelity[me-mj]. Naturally, Cerny's name is prominent on these.

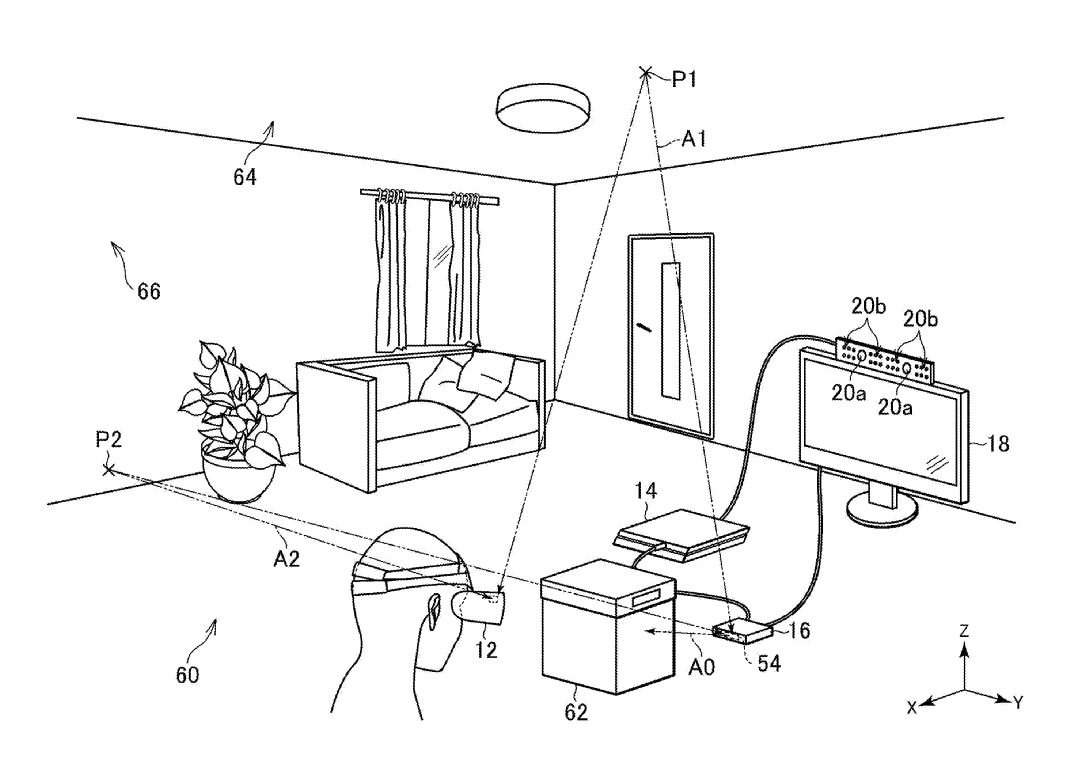

In addition to new Move controllers, they seem to be aiming to make the next headset wireless. There are several patents regarding wireless headset transmissions that deal with antenna arrays[ly], beam-forming[lz], object avoidance, and user location prediction to ensure a connection is maintained[ma-md]. Current VR wireless solutions are not cheap[lx], so Sony has a lot of work to do here to make a product they can actually sell to normal consumers.

Sony patent image depicting a wireless VR transmission box bouncing signals off interior surfaces in the presence of obstructions

Sony also has quite a bit of experience in eye-tracking[ml]. Let's not forget that their image sensors are also the cream of the crop, and they are positioned as leaders in the coming 3D sensing revolution[mm]. Also expect the visuals to be upgraded, with VR screens hitting enough PPI to severely mitigate the screen door effect seen on many VR headsets these days[mk].

The aforementioned heatsink patent[ie] also mentions a SiP in close proximity to an antenna or sensor system, which sounds exactly like a PSVR use case. If this SiP were in the headset, a well-engineered solution to manage heat and overall space consumed would be critically important. It seems safe to say that PSVR2 is not likely to be a minor update. Hopefully Microsoft does not spend this generation on the sidelines, but HoloLens has no clear path to being a consumer device at the moment.

To Make a Long Story Longer

We've come so far together! Just one more technical section, I promise. Despite all the previous sections, there are still a few things I wanted to get out there that I didn't want to shoehorn into other sections. So, stick with me for a bit longer.

The first consideration is to not count Samsung out. GlobalFoundries arguably made AMD's life easier when they gave up on their 7nm process[mp], likely getting AMD out of the Wafer Supply Agreement (WSA) they were under and ultimately lowering their costs to make 7nm chips, which they could pass on to Sony and Microsoft. How does Samsung factor in? Well, they already make graphics chips for AMD and Nvidia[mo].

It's not hard to imagine Samsung becoming a supplier of chips for next gen consoles, whether it be a revision of some sort, or a dual-sourcing agreement. It would not be unprecedented, as Xbox 360 and PS3 parts shifted foundries early on in their lifecycles[mr]. Cadence has also taped out GDDR6 controllers for Samsung's 7nm process[ot], so we know that 7nm graphics products from Samsung are likely imminent. IBM also chose them over TSMC for their 7nm HPC needs[mn]. This could be an interesting area to watch, particularly as EUV comes along, and Apple is apparently not happy with TSMC's 7nm+ prices[mt]. TSMC and Samsung aren't all that different, either[mq].

Still, there's a lot of reason to be optimistic about where TSMC is at. They've already taped out a lot of 7nm+ designs[if-ig,il], and it's scheduled to hit volume this year. They entered production for 7nm ahead of schedule[mv] (an earlier point compared to 28nm and last gen console launches, assuming Fall 2020 launches[mu]), and excess 7nm supply due to weak mobile demand could help bring their prices down for Sony and Microsoft[mw].

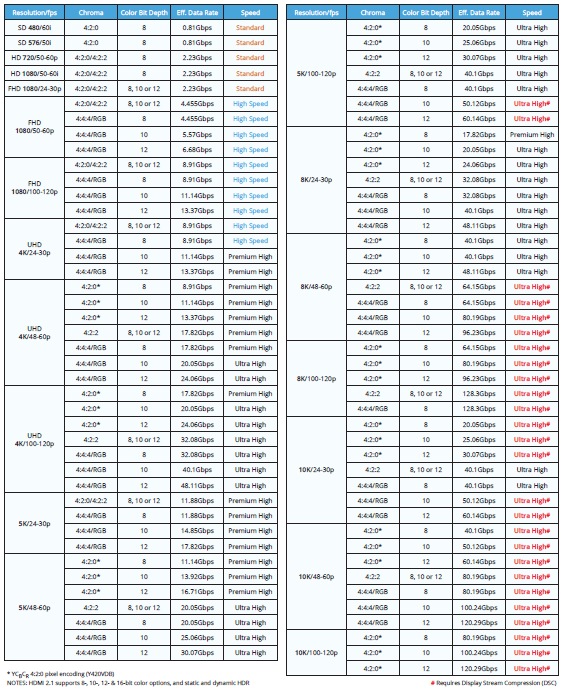

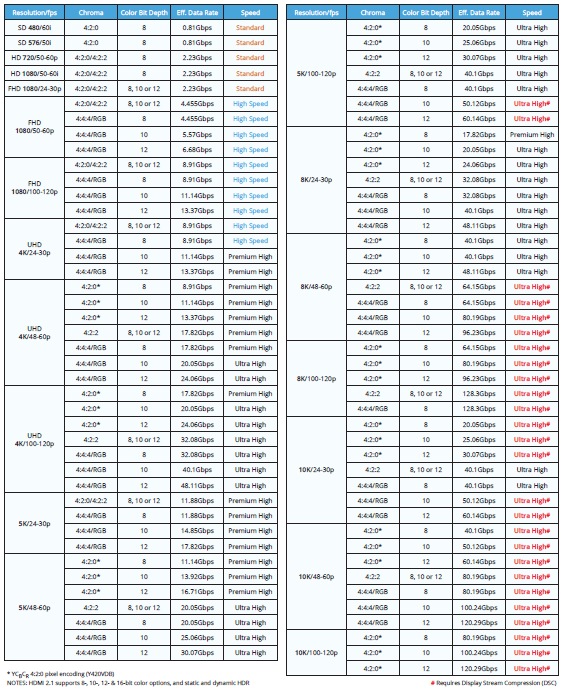

As for what these boxes will plug into, there is more good news, as the first HDMI 2.1 sets are upon us[mx]. In addition to pushing past 4K60 with 10 bit color (which is enough for next gen, so don't worry), they'll have features like VRR, which will function like FreeSync/GSync for your TV, as well as ALLM, which will automatically select the lowest latency connection for your device. Many of these features are also possible without the full 48Gbps bandwidth afforded by the spec, and the connections are backwards compatible[my,ng]. Microsoft's early support of VRR should be commended, as well as their 120Hz support[nr], and they're looking at HDMI 2.1, in case you were worried[nu].
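For a sense of scale against that 48Gbps ceiling, here is a rough sketch of the raw pixel data rate for a few video modes. It counts active pixels only and assumes full RGB/4:4:4 color (three channels per pixel); it deliberately ignores blanking intervals and link encoding overhead, so what actually has to cross the cable is higher than these numbers. The function name is hypothetical.

```python
def raw_video_gbps(width: int, height: int, refresh_hz: int,
                   bits_per_channel: int, channels: int = 3) -> float:
    """Uncompressed active-pixel data rate in Gb/s.

    Ignores blanking and link encoding overhead (a simplifying assumption),
    so treat the result as a lower bound on the link rate needed.
    """
    bits_per_second = width * height * refresh_hz * bits_per_channel * channels
    return bits_per_second / 1e9


if __name__ == "__main__":
    modes = {
        "4K60 10-bit": (3840, 2160, 60, 10),
        "4K120 10-bit": (3840, 2160, 120, 10),
        "8K60 10-bit": (7680, 4320, 60, 10),
    }
    for name, mode in modes.items():
        print(f"{name}: {raw_video_gbps(*mode):.2f} Gb/s")
```

Even as a lower bound, this shows why 4K at high refresh rates fits comfortably while uncompressed 8K60 at full color does not, which is one more reason to expect this generation to stay at 4K.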

HDR in console gaming is also in a healthier place than it is in PC gaming[mz], and people are buying 4K sets much faster than they bought HDTVs. While most agree that this generation will stay at 4K[nf], there are 8K aspirations out there[nb,ne]. There are also temporal upscaling alternatives to checkerboarding if you're sick of hearing about that, or think native 4K is overkill[nc-nd].

Elsewhere, be sure to read/watch the technical predictions for next gen consoles[ni], as well as the comparisons between this gen's consoles[hh,hn,hr,lo-lp,np-nq,ns-nt] to get some more context for the ideas discussed here. Go back and read the original PS4 pastebin[nm] link for giggles. Look at these console and GPU die size charts[nj-nk] to see just how crazy it is to expect consoles to keep up with enthusiast level graphics cards. Play around with this die yield calculator[nl] - now you're practically AdoredTV! Did you know that Microsoft and Sony make insanely good profiling tools[no]? Now you do, sport!
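If you'd rather see what a die yield calculator is doing under the hood, here is a minimal sketch. It uses a common dies-per-wafer approximation and the simple Poisson yield model; this is not necessarily the model the linked calculator uses, and the 350 mm² die and 0.2 defects/cm² figures are made-up inputs for illustration, not foundry data.

```python
import math


def gross_dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = 300.0) -> int:
    """Approximate die candidates per wafer, with a correction for edge loss."""
    radius = wafer_diameter_mm / 2
    whole_area_term = math.pi * radius * radius / die_area_mm2
    edge_loss_term = math.pi * wafer_diameter_mm / math.sqrt(2 * die_area_mm2)
    return int(whole_area_term - edge_loss_term)


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Poisson model: fraction of dies with zero defects, exp(-area * density)."""
    die_area_cm2 = die_area_mm2 / 100.0
    return math.exp(-die_area_cm2 * defects_per_cm2)


if __name__ == "__main__":
    die_area = 350.0       # hypothetical console APU size in mm^2
    defect_density = 0.2   # hypothetical defects per cm^2

    gross = gross_dies_per_wafer(die_area)
    good_fraction = poisson_yield(die_area, defect_density)
    print(f"gross dies: {gross}, yield: {good_fraction:.1%}, "
          f"good dies: {int(gross * good_fraction)}")
```

The takeaway is the exponential: yield falls off quickly as die area grows, which is exactly why enthusiast-sized GPU dies are such a tough ask for a console.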

What about Nintendo, you ask? They'll be just fine. Nvidia has a strong roadmap[or], and their new Carmel CPU cores are quite impressive[os]. They can stick with ARM and never look back. Be sure to take a peek at the Miracast Wii U tablet streaming tech to get an idea for what PSVR2 might want to do with their wireless connections (likely at a much higher frequency, though)[oq].

Finally, all signs from AMD are looking good headed into next week's CES appearance. Expect to hear about consumer Zen 2/ Ryzen 3 and consumer Navi[nv]. Zen 2 and Navi samples have been in hand for a while and are up and running[bm,nw]. Vega 7nm went well enough to have its shipping date pulled in[nx].

What Did it Cost You?

Coppers, Coffers, or Coffins?

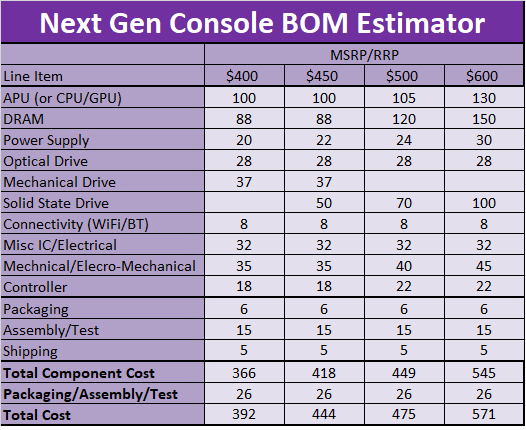

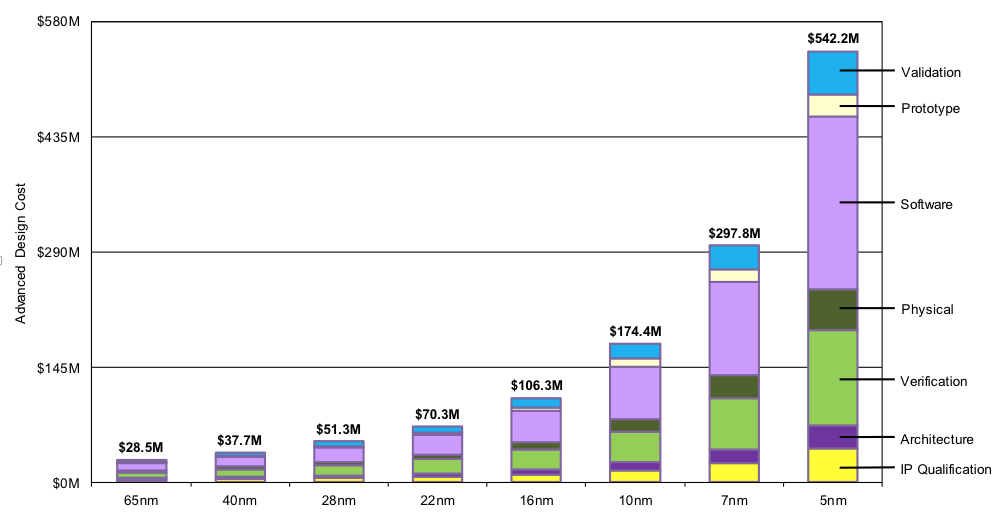

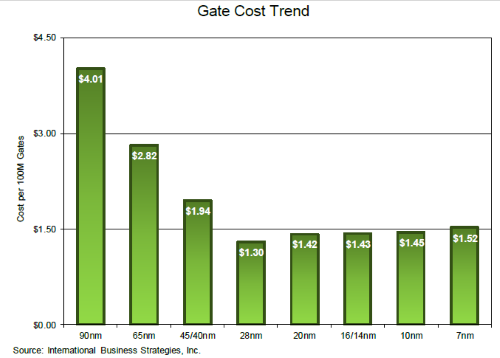

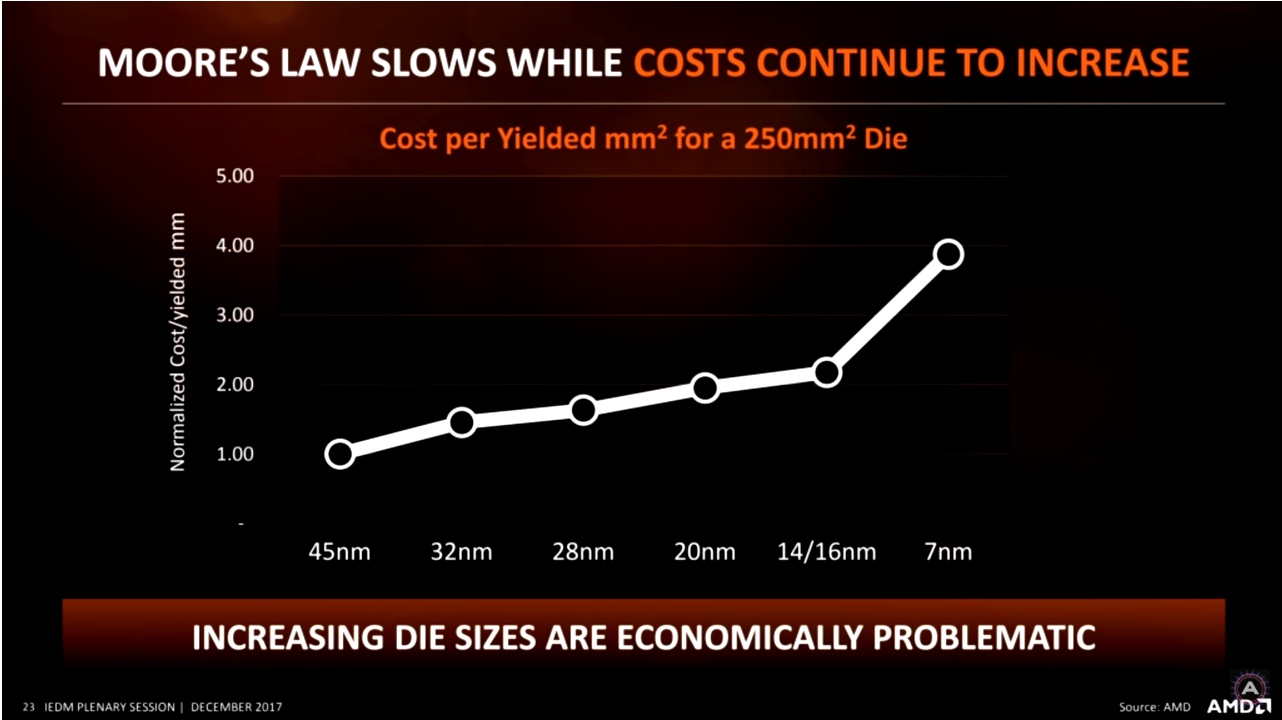

To close out, we'll finish with a costing spreadsheet for next gen consoles with a variety of pricing tiers. A worksheet version of this is linked if you'd like to play around with it as well. The current estimates are assembled from IHS and TechInsights (Chipworks) estimates from last generation consoles[ob-od]. It's important to note that the two main cost drivers here are APUs and memory. APUs are going to be more expensive by virtue of 7nm being much more expensive. This will only get worse with future nodes.

https://docs.google.com/spreadsheets/d/1Ogwjrhbl6pgwTUUdDgvr1fTRxX1Avoqmy-G3C2305uM/edit?usp=sharing

Memory is going to be more expensive as well (comparing GDDR6 to GDDR5), but remember that GDDR6 can reach 16GB in half the chips the PS4 used at launch, so there could actually be a net savings in store. HBM2 is drastically more expensive than GDDR5[of]. The addition of SSD could also factor in negatively, but thanks to market projections and price-fixing investigations[oi], prices for both DRAM and NAND are expected to fall this year[og-oh]. Tariffs remain a wild card here[oj].

Launch price has been a point of hot debate in this thread. An important point is that no launch price has been repeated for three generations or more since the GameCube became Nintendo's last $200 home console. Inflation and the gradual inclusion of more varied and expensive functionality have caused console prices to creep up. Microsoft launched both the Xbox One and Xbox One X at $500 this generation. While the former launch was certainly rocky, it wasn't solely because of pricing, and enthusiasts still managed to make sure it was out of stock until the following year. In all, a $400 launch from either vendor shouldn't be assumed to be a given.

For additional context, the PS4 was making a profit within 6 months[ol-om]. The Xbox 360 was sold at a $126 loss initially[op], and the PS3 took 4 years to break even[ok]. Phil Spencer also commented the Xbox One X was not sold at a profit at launch[oe]. That should be your benchmark for what a $500 console may look like.