Just remember Koei Tecmo were the people who thought "Hey let's give a character the outfit they wore during a rape scene for a preorder bonus" -_- ..... I'm still confused how anyone thought that was a good idea in any way.

Oh god - I'd managed to forget about that. I found Berserk to be one of the most repugnant and misogynistic manga I've ever read because of the handling of Casca.

Why women criticise sexualised character designs | OT3 | "make her look more...corpulent, more stuffed where the eyes can't escape."

- Thread starter Persephone

Just remember Koei Tecmo were the people who thought "Hey let's give a character the outfit they wore during a rape scene for a preorder bonus" -_- ..... I'm still confused how anyone thought that was a good idea in any way.

Souls fandom went off the deep end because Dark Souls intentionally marketed itself to inflate the egos of people who play it. This game is so hard! Prepare to die! You're so tough and a real gamer for playing this game!

Demon's Souls, as I remember it anyway, just kind of came out and was a relatively tough game without all the fucking pomp in the marketing, and the (admittedly much smaller and niche) fandom for that game never had any of the toxicity you see from Souls fans now.

I guess... but they would still be able to inflate their ego even if they allowed people with different types of impairments and disabilities to play and enjoy the games. The ego inflating feature of the Souls games would be left untouched!

Yeah, of course. It is the same game if you play it without the easy options turned on as if there were no easy options. There is no legitimate reason to oppose settings that allow more people to experience the game when they are not mandatory.

But marketing has convinced the worst of the Souls fandom that they are part of some kind of exclusive club for having played and beaten the games, and people are insecure about losing that made-up exclusivity. To be clear I'm not trying to defend this kind of fandom, I am criticizing it by way of saying where I think it comes from.

Wasn't the rape horse one of the DLCs?

Had to Google to make sure I wasn't misremembering, but equally bad is the fact that the rape scene in question is what is shown/alluded to in the announcement teaser trailer for the game.

And that's the entirety of the trailer. CG snippet of the manga's infamous rape scene - get excited for Berserk Musou!

God, yeah. That was the Berserk game. It was, thankfully, REMOVED from the western release. I'm not gonna say Berserk handles everything with sensitivity, from the source to anime adaptations, but them having Casca have that as a costume option was beyond fucked up. I remember seeing that was either DLC or an unlockable, and the Berserk fandom was absolutely in uproar against it (as was I). I will never understand how they thought it was a good idea, at all.

Wait THAT was in one of the DLCs? How far into the story did Berserk Musou go again?

Edit: I understand keeping that scene from the manga in there, but I don't understand having that one specific horse, who only appears for like two panels, as a DLC. Checking the Steam page now I see a piece of "warhorse" DLC that includes some extra horses but I don't know if horses are an important part of Musou games. They certainly aren't THAT important a part of Berserk. Casca's costume DLCs though seem really specifically targeted at certain scenes.

To be clear I'm not trying to defend this kind of fandom, I am criticizing it by way of saying where I think it comes from.

Oh yeah, I get it don't worry :)

They'd use those accessibility options to make it easier for themselves, then project that onto other people.

S'like when people pretend to have read Nineteen Eighty-Four or The Great Gatsby, but really they've only read the TV Tropes page - and then go around shitting on other people for not being as literate as they are.

Finally getting around to playing Last Remnant and Emma's design is neat, though for some reason, the eyes in the game have this darkened layer around them (sorry I don't know the proper term :s) but for Emma it fits IMO

Really the 4 generals of Athlum and David are far more interesting characters than generic shithead shonen protag Rush Sykes (yes that is his name...).

I quite like the design. Lots of cool detail and a nice mix of fantasy with practical stuff with the layers. It just looks like a compromise between comfort for adventuring all day in it, mobility and protection.

The kind of thing I'd draw tabletop D&D characters wearing, where I imagine them over the years gradually ending up in a mix of "what's a reasonable compromise for 'I'm going hiking with a fair chance of a fight, but don't want to clank up the mountain in full heavy armour'?" :D

Boobplate aside, if I were to make a change I think I'd want a scabbard too, but that's just personal preference when seeing people holding a four-foot bit of steel and wondering whether they just hold it all day!

I figure you folks might be interested in this video about the Witcher's sexualization -

Interesting points about the editing and the treatment/framing of lesbian characters, and the problems that can arise from the jank of reusing models.

I dunno how last gen we got two games back to back with the character names Rush Sykes and Edge Maverick

I really do need to play Last Remnant for real one of these days though

Casca's wet shirt (from when she's cleaning herself in the waterfall post-eclipse) was pre-order DLC, and the possessed horse was a promo DLC that came with the guide in Japan. Casca wearing only Guts' black shirt was also a costume.

Really that game was a mess from beginning to end. Having Wyald as a playable character instead of chars like Skull Knight, Silat, Grunbeld, Locus or Irvine was another mistake.

Also I just remembered that the trailer for the rape horse skin featured Schierke riding it...

To think she was designed by the same dude who came up with Cindy for FFXV.

honestly I'm fine with discussing that kind of thing here bc feminism that isn't intersectional is bullshit

This is quite true.

Wasn't Cindy a group effort of some sort? I kind of remember some interview snippets that were all "the guys who designed her were really proud of her" or something.

The issue is that part of the fandom says (as posters on ERA have, funnily enough) that they couldn't stop themselves from touching those options and making things easier.

(I don't get that logic at all, to be honest...)

Which is silly because they're somehow able to avoid using summons without issue, when that's an option that typically trivialises the boss in question.

The idea that people who are in it for some intense challenge to break themselves against couldn't handle the challenge of not selecting "easy" at the start of the game is so laughable.

y'all have brought the sexist mess what was ffxv back to the forefront of my memory. that game's treatment of its female characters was legit offensive

I remember living in South Korea for a year, while I was still in college, and asked a local college professor why there was so much sexist advertising, and was told that it helped empower women to look their best. I wonder if it's the same in Japan and China because that response was just... Was it just that the college professor was an asshole? Is the society just ignorant of how that shit sounds or? And it seems like it's getting worse, like some entrenched extremism lately, where the attitudes have turned towards the same crazy conservatism of forcing people into gender roles and stereotypes. It's like somehow oppression that's shared is fine even if it's clearly oppression.

When I worked in Japan one of the principals told me a story about how bad the inequality used to be. Then he ended the story by saying... "but then we fixed it". After that he smiled, stood up, and left the office. I was stunned.

My sides. lol

That's unfortunately a common sentiment in a lot of places right? "Things used to be REALLY bad, but now it's better, we fixed it."

I find it's a common psychological pattern in general. Given a social norm, each generation thinks the ones before them were stuck in their old-fashioned ways and their generation is the one that got it right.

I think it's a way of explaining the problems of a Just World fallacy. The world IS fair, it's just some old timey folks still have some of their backwards ideas here and there.

It's the same thing that happened when Obama became president. "Racism is fixed now right?" It's like... no, you fucking clod. Racism isn't something you fix. It's not a leaky faucet. You gotta deal with it all the time. The reality of systemic oppression for hundreds or thousands of years doesn't go away.

I know that's hard to hear for lots of people, but sexism is the same. It's something everyone has to work at getting better at. And it's clear that's not a satisfying answer to a lot of people, but that's life; it's tough, and you gotta learn to deal with it.

At the same time though, working towards a better future means a better world for everyone. So while it's tough, everyone comes out the other end better for it.

Fucking Wyald of all characters?

Jesus christ the game's marketing was obsessed with rape. There's more to Berserk, but the goddamn rape horse had to appear in the marketing material.

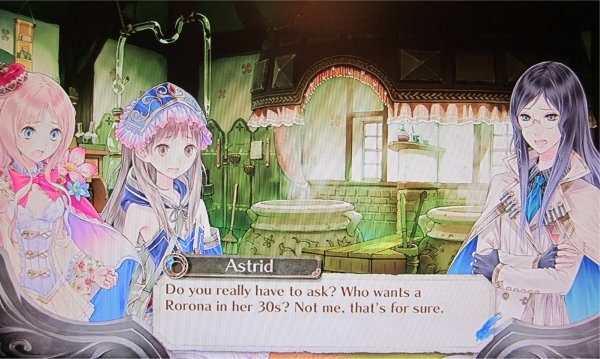

Every time I see Ryza I just wonder how in the hell people find this kind of balloon legs appealing. They look like they will explode. Especially the new design.

"Something something EXTRA THICC"

I think it's some kind of weird attempt at body diversity. Like, "See? Not all these girls have impossibly huge breasts while being twig thin, they actually have some fat on them. We're so progressive!"

I guess anime fans find leg tourniquets really sexy or something.

They probably thought this was really sexy then:

So instead of pants you just cover every gap in your shorts with straps:

This one is just hilarious:

If there's even a slight breeze..

The sexualization aside, I'm a fan of seeing more diverse body types. The idea of Ryza seems like a good thing that just went off the rails.

That's not really an example of positive representation, though, is it? So let's not get too excited about that...

Ryza clearly suffers from severe edema.

It's not even that diverse, the rest of the body is the same thin size you normally get, the thighs are just slightly larger than normal.

*Slightly larger than the average woman's thighs would be; they seem larger in that drawing cus the rest of the body has the weird proportions which so many cartoons do, where the waist is absurdly small

It's a tiny celebration that Ryza at least looks like she can fit all of her organs in her body in their correct locations? It is still a sexist trainwreck beyond that.

all these anime girls apparently have really greasy skin. thighs don't shine like that.

Character designs like Atelier Ryza aren't a diversity thing, they're just the latest trend in sexualized body parts on women. Thighs have joined the ranks of breasts and butts in being allowed to have fat on them because now nerds think it's sexy when an anime girl is wearing stockings so tight they look like they're cutting off her blood circulation.

That's something that always weirds me out when I see these anime girls. Shiny knees/elbows/etc.

When your typical frame of reference is inflatable or plastic..

Character designs like Atelier Ryza aren't a diversity thing, they're just the latest trend in sexualized body parts on women. Thighs have joined the ranks of breasts and butts in being allowed to have fat on them because now nerds think it's sexy when an anime girl is wearing stockings so tight they look like they're cutting off her blood circulation.

Yep! Very well said.