This should be obvious to anyone.

Graphics cards make things shiny. CPU affects performance.

Of course it's more nuanced than that, but it's generally a lot easier to turn down the visual quality or resolution to improve performance than to optimize for a slower CPU.

For example:

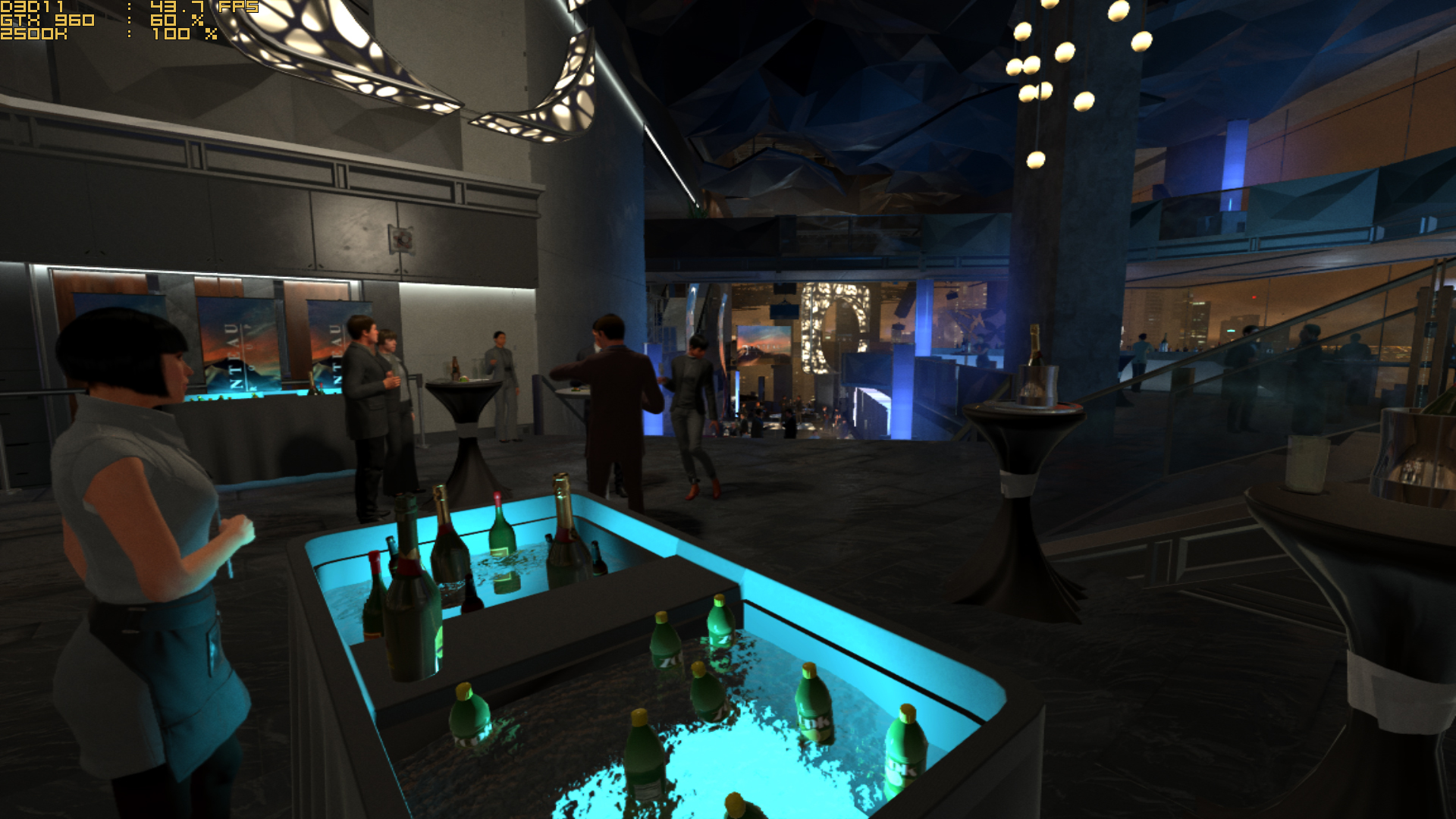

Deus Ex: Mankind Divided on an old i5-2500K, with a ~2.5 TFLOP GTX 960:

This scene (the most CPU-intensive one I have found in the game) is running at ~43 FPS with only 60% GPU utilization, because the CPU is maxed out at 100% and holding it back.

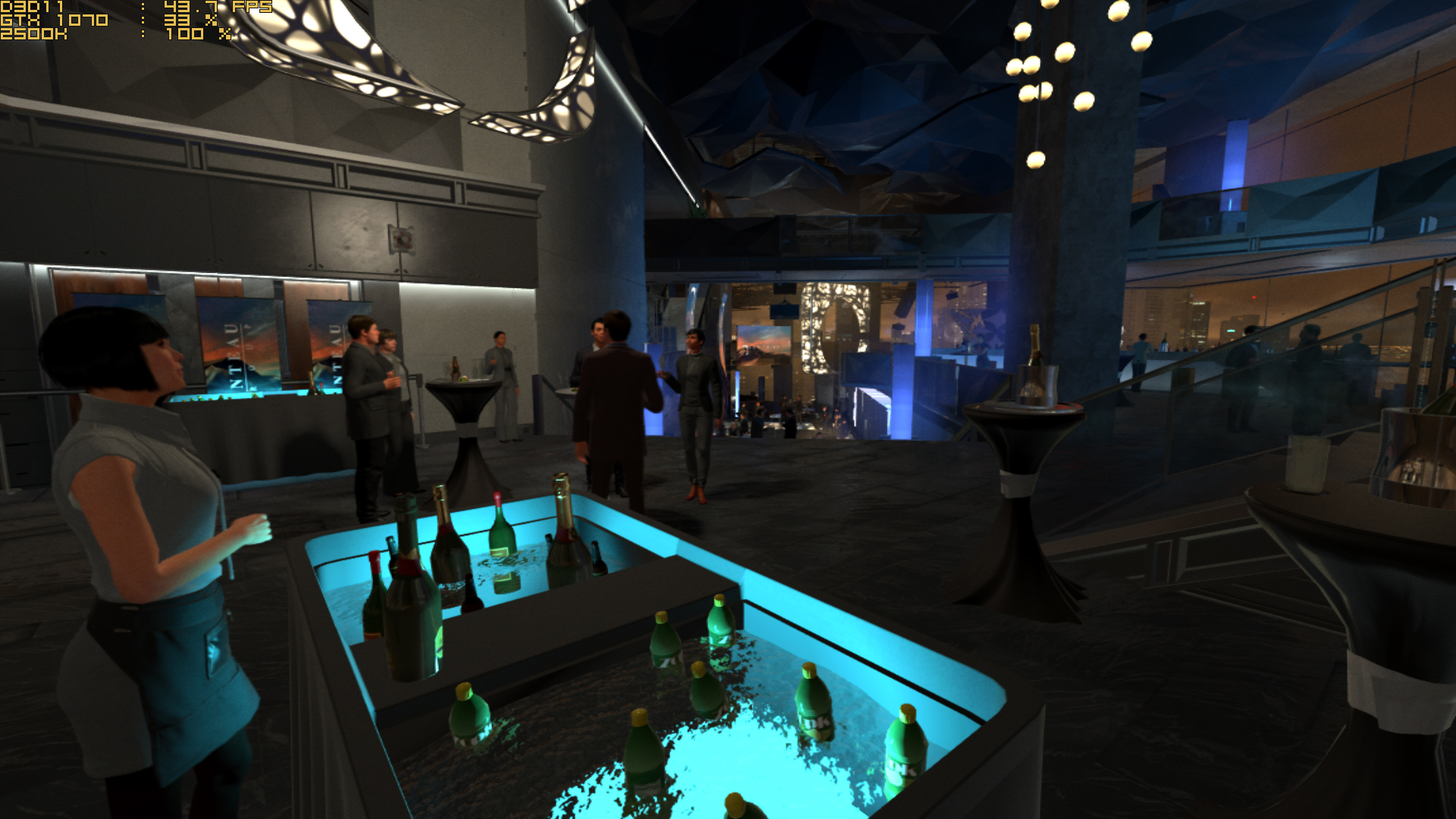

Let's upgrade the GPU to a ~6.5 TFLOP GTX 1070:

Oh, it's the exact same 43.7 FPS, but since the GPU is faster, it's only running at 33% utilization now.

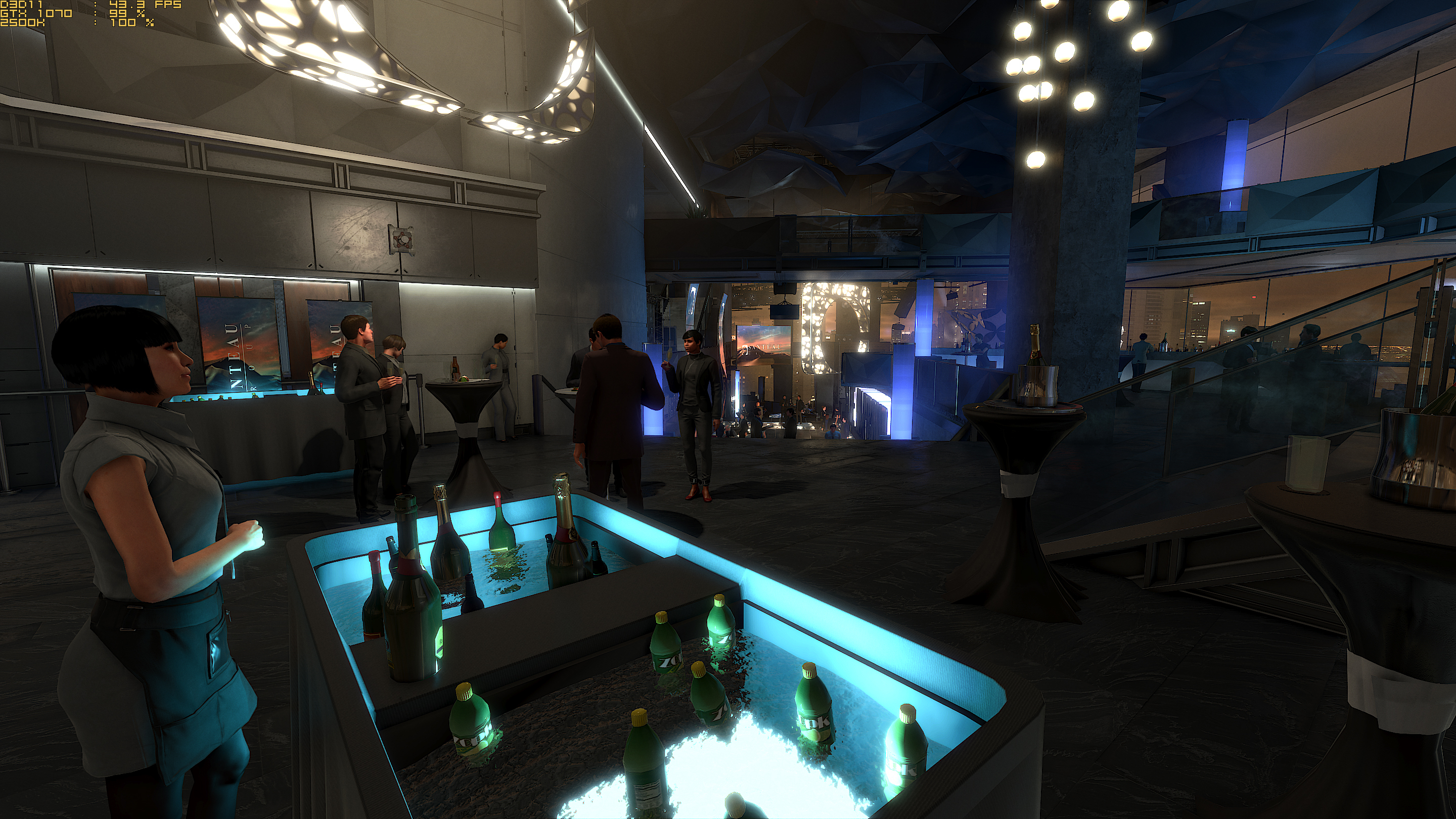

We can of course do more with a faster GPU by increasing the resolution and graphical fidelity:

We get the same ~43 FPS, but now the GPU is at 99% utilization and it looks a lot better.

No matter how much you turn down the graphical settings or increase the performance of the GPU, it won't run any faster because the CPU is the limiting factor here.
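To put the idea in code: here is a minimal sketch of the bottleneck, assuming the delivered frame rate is simply the lower of what the CPU and the GPU could each manage on their own. The function names and the GPU ceilings (73 and 132 FPS) are my own illustrative values, back-calculated from the utilization figures above, not measurements. Real games are messier than a plain `min()`.

```python
def frame_rate(cpu_fps_limit: float, gpu_fps_limit: float) -> float:
    """Delivered FPS under a simple model: the slower component sets the pace."""
    return min(cpu_fps_limit, gpu_fps_limit)


def gpu_utilization(cpu_fps_limit: float, gpu_fps_limit: float) -> float:
    """Fraction of the GPU's capacity actually used at the delivered FPS."""
    return frame_rate(cpu_fps_limit, gpu_fps_limit) / gpu_fps_limit


CPU_LIMIT = 43.7  # FPS the i5-2500K manages in this scene (from the tests above)

# Hypothetical GPU ceilings, assumed for illustration only.
for name, gpu_limit in [("GTX 960", 73.0), ("GTX 1070", 132.0)]:
    fps = frame_rate(CPU_LIMIT, gpu_limit)
    util = gpu_utilization(CPU_LIMIT, gpu_limit)
    print(f"{name}: {fps:.1f} FPS at {util:.0%} GPU utilization")
```

Under this model, a faster GPU only changes the utilization number, never the frame rate, until the CPU limit goes up.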

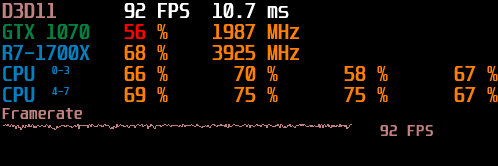

Upgrade the CPU from that old i5-2500K to a Ryzen 1700X and performance in the above scene now reaches 92 FPS instead of 43 FPS:

But we are still limited by our CPU, as the GPU utilization is still only reaching 56%.

With a fast enough CPU, that scene could theoretically be running at 164 FPS on the GTX 1070 - though I'd prefer to tune it for ~90 FPS with better graphics.
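For anyone wondering where the 164 FPS figure comes from, it is just the observed frame rate divided by the GPU utilization. A rough sketch, assuming GPU load scales linearly with frame rate (an approximation, and `estimated_gpu_ceiling` is a name I made up for this example):

```python
def estimated_gpu_ceiling(observed_fps: float, gpu_utilization: float) -> float:
    """Extrapolate the FPS the GPU could reach if nothing else limited it.

    Assumes GPU utilization scales linearly with frame rate.
    """
    if not 0 < gpu_utilization <= 1:
        raise ValueError("gpu_utilization must be in the range (0, 1]")
    return observed_fps / gpu_utilization


# 92 FPS at 56% utilization on the GTX 1070 with the Ryzen 1700X.
print(round(estimated_gpu_ceiling(92, 0.56)))  # 164
```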

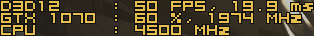

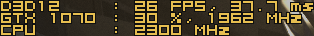

In another set of tests, if we have the CPU running at 4.5 GHz, it runs at 50 FPS:

If we roughly halve the CPU clockspeed to 2.3 GHz, it now runs at 26 FPS:

Performance in this test scales almost linearly with CPU performance, since we are not limited by our GPU.
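As a quick sanity check on the "almost linearly" claim, here is a small sketch that predicts the frame rate from the clock speed alone, assuming perfectly linear scaling. The function name is hypothetical; the inputs are the numbers from the test above.

```python
def predicted_fps(base_fps: float, base_clock_ghz: float, new_clock_ghz: float) -> float:
    """Predict FPS at a new CPU clock, assuming perfectly linear scaling."""
    return base_fps * new_clock_ghz / base_clock_ghz


predicted = predicted_fps(50, 4.5, 2.3)
measured = 26
print(f"predicted {predicted:.1f} FPS, measured {measured} FPS")
```

The prediction lands at about 25.6 FPS against a measured 26 FPS, so the two agree within a couple of percent.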

PS5 GPU is 20% slower than XSX? You can just drop the rendered pixel count by about 20% to make up for it.

CPU performance is what matters more.

While teraflops aren't, and never were, the single most important measure of hardware power, it is difficult to believe that a 4 TF console would not hold back a 12 TF machine.

After all, all games must be designed to be scalable between 4TF and 12TF, which is a limitation in itself.

4K has 4x the pixels of 1080p.

The difference in GPU compute between 4TF and 12TF is 3x.

Why would the 4TF system be holding back the 12TF system?

If all else were equal, that would actually make Lockhart more powerful relative to its output resolution, since it has 1/3 the power for 1/4 the resolution.

I'm quite sure that it will be scaled back in other ways, but if we're purely comparing 4TF to 12TF, I don't see the issue. You could run games at 1200p and still have a slight performance advantage.
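Here is the arithmetic behind that, as a rough sketch. It assumes 16:9 output and that GPU cost scales linearly with pixel count, which is a simplification. The helper names are mine.

```python
def pixels(height: int) -> int:
    """Pixel count of a 16:9 frame with the given vertical resolution."""
    width = round(height * 16 / 9)
    return width * height


def relative_compute_per_pixel(tf_a: float, height_a: int,
                               tf_b: float, height_b: int) -> float:
    """Compute per pixel of system A divided by that of system B."""
    return (tf_a / pixels(height_a)) / (tf_b / pixels(height_b))


print(pixels(2160) / pixels(1080))  # 4.0

# 4TF system at various resolutions vs. a 12TF system at 4K (2160p).
for height in (1080, 1200):
    ratio = relative_compute_per_pixel(4, height, 12, 2160)
    print(f"4TF at {height}p vs 12TF at 2160p: {ratio:.2f}x compute per pixel")
```

At 1080p the 4TF machine has about 1.33x the compute per pixel, and at 1200p it still comes out slightly ahead at roughly 1.08x.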