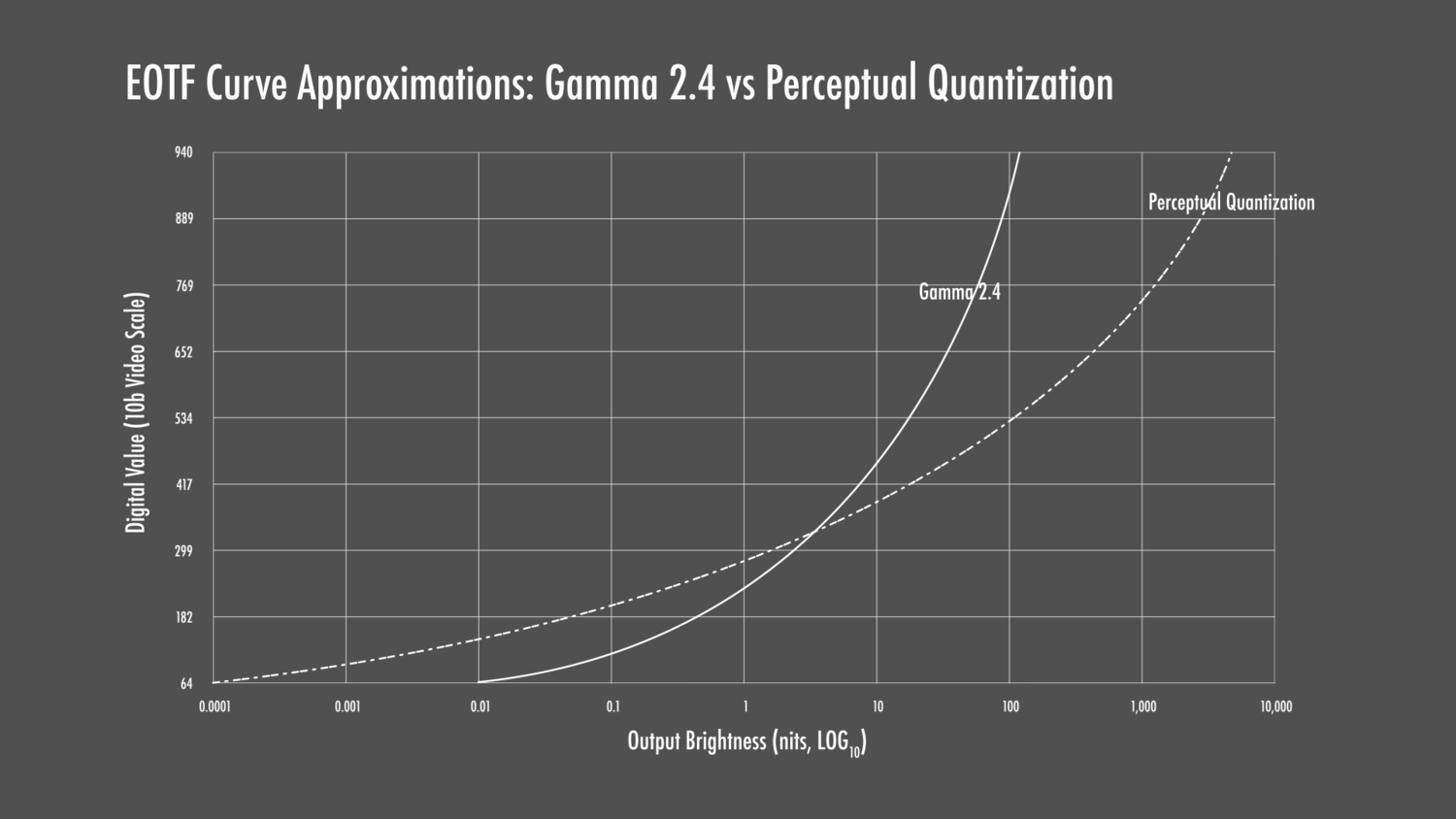

SDR uses gamma-encoded values, while HDR uses the PQ (Perceptual Quantizer) EOTF, standardized as SMPTE ST 2084, instead.

PQ allocates more code values to the darker parts of the image, where our eyes are most sensitive to small brightness steps and the extra precision is needed the most.
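To make "more code values for the darker parts" concrete, here is a minimal Python sketch of the PQ EOTF and its inverse, using the constants published in SMPTE ST 2084. The function names are my own and not from any library, and the 10-bit codes assume full range (0 to 1023) for simplicity. Encoding 100 nits, which is only 1% of PQ's 10,000-nit range, lands at roughly half of the signal range, so about half of all code values are spent on 0 to 100 nits.

```python
# Minimal sketch of the SMPTE ST 2084 (PQ) transfer function.
# Function names are illustrative, not taken from any library.

# Constants as defined in SMPTE ST 2084
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32
PQ_PEAK_NITS = 10000.0


def pq_eotf(signal: float) -> float:
    """Decode a normalized PQ signal (0.0-1.0) to absolute luminance in nits."""
    p = signal ** (1 / M2)
    y = max(p - C1, 0.0) / (C2 - C3 * p)
    return PQ_PEAK_NITS * y ** (1 / M1)


def pq_inverse_eotf(nits: float) -> float:
    """Encode absolute luminance in nits to a normalized PQ signal (0.0-1.0)."""
    y = (nits / PQ_PEAK_NITS) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


if __name__ == "__main__":
    # Show where each luminance level falls in a full-range 10-bit signal
    for nits in (1, 10, 100, 1000, 10000):
        code = round(pq_inverse_eotf(nits) * 1023)
        print(f"{nits:>6} nits -> 10-bit code {code} of 1023")
```

The printed table makes the allocation visible: the step from 100 to 10,000 nits, a hundredfold increase, uses only about as many codes as the range from 0 to 100 nits.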

SDR also uses relative brightness values (e.g. 80% brightness), while HDR uses absolute values (e.g. 80 nits).

80% brightness is 80 nits if the display is set to the proper value of 100 nits*, but the user may choose to set their display to 700 nits instead, which would raise "80% brightness" to 560 nits.

But in HDR, a value of 80 nits is 80 nits if the display is accurate, whether its peak brightness is 100 nits or 10,000 nits.

*This is not technically correct, since SDR values are gamma-encoded and an 80% signal does not produce 80% of the display's peak luminance. But just go with it; the technically correct explanation is far more complicated.
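The relative-versus-absolute difference can be sketched in a few lines of Python. This deliberately follows the simplification flagged in the footnote above and treats SDR levels as linear fractions of the display's peak, ignoring gamma. The HDR function is a hypothetical model that assumes the display simply clips anything above its peak; real displays tone map instead, so treat that part as a placeholder.

```python
def sdr_luminance(relative_level: float, display_peak_nits: float) -> float:
    """SDR: a level is relative, so its luminance follows the display's setting.

    Simplification: treats the level as linear light and ignores gamma.
    """
    return relative_level * display_peak_nits


def hdr_luminance(target_nits: float, display_peak_nits: float) -> float:
    """HDR (PQ): the level is an absolute target in nits.

    Assumption: an accurate display reproduces the target unchanged and
    hard-clips anything above its peak (real displays tone map instead).
    """
    return min(target_nits, display_peak_nits)


if __name__ == "__main__":
    for peak in (100, 700):
        print(f"display at {peak} nits: "
              f"SDR 80% -> {sdr_luminance(0.8, peak):.0f} nits, "
              f"HDR 80 nits -> {hdr_luminance(80, peak):.0f} nits")
```

With the display set to 700 nits, the SDR line reports 560 nits while the HDR line still reports 80 nits.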

PQ is a fixed transfer function in HDR, while SDR gamma is only expected to be 2.4 for accurate reproduction (per ITU-R BT.1886).

But most displays have a gamma adjustment option, often letting you set it as low as 1.8, which results in much brighter shadow detail and can be useful in a bright room.
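A quick way to see why lowering gamma brightens shadows is to decode the same signal level with both exponents. This sketch assumes a pure power-law display with peak white at 100 nits, which is what BT.1886 reduces to when the display's black level is zero; the function name is hypothetical.

```python
def display_nits(signal: float, gamma: float, peak_nits: float = 100.0) -> float:
    """Luminance produced by a pure power-law display for a normalized signal.

    Assumption: zero black level, so BT.1886 reduces to peak * signal ** gamma.
    """
    return peak_nits * signal ** gamma


if __name__ == "__main__":
    for level in (0.05, 0.10, 0.25, 0.50, 1.00):
        dark = display_nits(level, 2.4)
        bright = display_nits(level, 1.8)
        print(f"signal {level:.2f}: gamma 2.4 -> {dark:6.2f} nits, "
              f"gamma 1.8 -> {bright:6.2f} nits ({bright / dark:.1f}x)")
```

At a 10% signal, gamma 2.4 gives about 0.4 nits while gamma 1.8 gives about 1.6 nits, roughly four times brighter, yet both still reach 100 nits at full signal. Shadows lift while white stays put.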

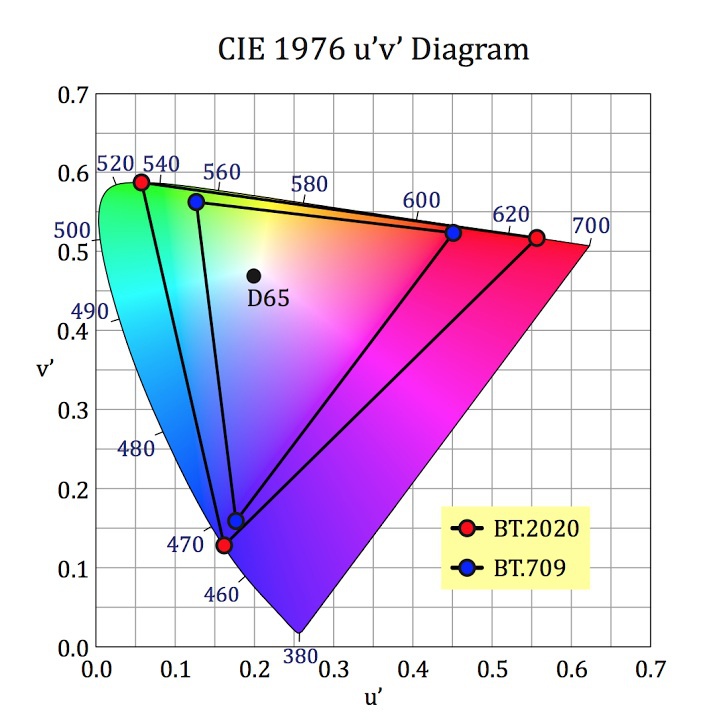

SDR uses the BT.709 color space, while HDR uses the Display-P3 or BT.2020 color space, both of which have a much wider gamut.

If you simply reinterpret BT.709 color values as BT.2020 values without converting them, the result is an oversaturated image.

Look at where BT.709 red is (upper right corner of the triangle) compared to the BT.2020 red.

You need to convert the values so that "100% red" in BT.709 is reproduced as the same color in BT.2020; perhaps "80% red" instead, with a little green and blue mixed in.
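The conversion itself is a 3×3 matrix applied to linear-light RGB. The sketch below uses the BT.709-to-BT.2020 matrix published in ITU-R BT.2087 (rounded to four decimals) and assumes a pure 2.4 gamma for decoding and re-encoding, so the result can be compared with the "80% red" estimate above. The function names are illustrative.

```python
# BT.709 -> BT.2020 conversion in linear light (matrix from ITU-R BT.2087).
# Assumption: pure 2.4 power-law encoding on both sides, for illustration only.

BT709_TO_BT2020 = (
    (0.6274, 0.3293, 0.0433),
    (0.0691, 0.9195, 0.0114),
    (0.0164, 0.0880, 0.8956),
)
GAMMA = 2.4


def bt709_to_bt2020_linear(rgb):
    """Convert a linear-light BT.709 RGB triple to linear-light BT.2020."""
    return tuple(sum(m * c for m, c in zip(row, rgb)) for row in BT709_TO_BT2020)


def convert_encoded(rgb709):
    """Decode gamma, convert primaries, then re-encode with the same gamma."""
    linear = [c ** GAMMA for c in rgb709]
    return tuple(c ** (1 / GAMMA) for c in bt709_to_bt2020_linear(linear))


if __name__ == "__main__":
    red = (1.0, 0.0, 0.0)
    print("naive (no conversion):", red)
    print("converted:", tuple(round(c, 3) for c in convert_encoded(red)))
```

BT.709 pure red comes out at roughly 82% red with about 33% green and 18% blue (gamma-encoded), which is why skipping this step pushes every saturated color further out than intended. White maps to white because each matrix row sums to 1.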

But if the system is doing that conversion, it prevents the user from choosing the oversaturated colors, if that's what they prefer.

And while it's possible to do this accurately by converting SDR to a 100-nit HDR image with the PQ EOTF and remapping the colors, the end result relies on the display itself being perfectly calibrated for HDR.
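The accurate route described above can be sketched as four steps: decode the SDR gamma, convert BT.709 primaries to BT.2020, place SDR white at 100 nits, then encode with PQ. This is a minimal sketch assuming a pure 2.4 SDR gamma, the BT.709-to-BT.2020 matrix from ITU-R BT.2087, and the PQ constants from SMPTE ST 2084; the function names are hypothetical. Note that nothing here compensates for the display's own HDR inaccuracies, which is exactly where the result can fall apart.

```python
# Sketch: map a gamma-encoded BT.709 SDR pixel into a 100-nit PQ / BT.2020 signal.
# Assumptions: pure 2.4 gamma for SDR, SDR white placed at 100 nits,
# BT.709->BT.2020 matrix from ITU-R BT.2087, PQ constants from SMPTE ST 2084.

SDR_GAMMA = 2.4
SDR_WHITE_NITS = 100.0

BT709_TO_BT2020 = (
    (0.6274, 0.3293, 0.0433),
    (0.0691, 0.9195, 0.0114),
    (0.0164, 0.0880, 0.8956),
)

# SMPTE ST 2084 (PQ) constants
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits: float) -> float:
    """Inverse PQ EOTF: absolute luminance in nits -> normalized signal."""
    y = (nits / 10000.0) ** M1
    return ((C1 + C2 * y) / (1 + C3 * y)) ** M2


def sdr_to_pq_bt2020(rgb709):
    """Convert one gamma-encoded BT.709 pixel (0.0-1.0) to PQ-encoded BT.2020."""
    # 1. Undo the SDR gamma to get relative linear light
    linear709 = [c ** SDR_GAMMA for c in rgb709]
    # 2. Re-express the same colors with BT.2020 primaries
    linear2020 = [sum(m * c for m, c in zip(row, linear709)) for row in BT709_TO_BT2020]
    # 3. Anchor relative values to absolute luminance, then 4. PQ-encode
    return tuple(pq_encode(c * SDR_WHITE_NITS) for c in linear2020)


if __name__ == "__main__":
    for name, pixel in (("white", (1.0, 1.0, 1.0)), ("red", (1.0, 0.0, 0.0))):
        print(name, tuple(round(c, 3) for c in sdr_to_pq_bt2020(pixel)))
```

SDR white comes out at a PQ signal of about 0.508 on every channel. From there, every value the display receives depends on how faithfully it tracks the PQ curve and BT.2020 primaries at low luminance.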

No display is currently capable of 100% color accuracy in HDR, so each makes its own compromises (i.e. inaccuracies) to make HDR look good.

But most displays are now capable of extremely good color accuracy in SDR.

The inaccuracies of HDR reproduction aren't very noticeable when watching actual HDR content, because the errors there are relatively minor. But when you try to display an accurate SDR picture inside an HDR container, you are compounding inaccuracies, which can make it look noticeably worse than sending the display a native SDR signal.

On top of that, many people do not want an accurate SDR image, and an HDR signal locks them out of the display's own processing options, such as a lower gamma or oversaturated color.