This is a great post.

SE stands for Shader Engine. It has been part of AMD's graphics cards since second-generation GCN. Here is the Wikipedia entry:

" GCN 2nd generation introduced an entity called "Shader Engine" (SE). A Shader Engine comprises one geometry processor, up to 44 CUs (Hawaii chip), rasterizers, ROPs, and L1 cache."

The PS4 had a single SE with 18 CUs running at 800 MHz, while the PS4 Pro had two shader engines with 36 CUs running at 911 MHz. When the PS4 Pro ran original PS4 games that had not received an enhanced patch, it disabled one of the SEs to emulate the actual PS4 hardware so that there wouldn't be any bugs or glitches.

For this generation (Navi, instead of GCN) AMD is still using shader engines, and at this point all of their graphics cards have either 1 or 2 SEs, but there are rumors that some of the upcoming big Navi GPUs will have 4. At this point we are pretty sure that Arden is the Series X GPU and that it uses 2 SEs with 56 CUs running at approximately 1.675 GHz. For Sony, the really old info on Ariel said it used 2 SEs with 36 CUs, and the later backwards compatibility testing that was tied to PS5 also showed 2 SEs with 36 CUs at 800 MHz, 911 MHz, and 2.0 GHz. This suggests that the PS5 will probably use the same method of backwards compatibility: 1 SE with 18 CUs for PS4 games, 2 SEs with 36 CUs for PS4 Pro games, and then maybe 2 SEs with 36 CUs at 2.0 GHz for games whose engine can take advantage of it, or maybe for PS5 games.
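For anyone who wants to turn the CU counts and clocks above into teraflop figures, here is a minimal sketch. It assumes the usual GCN/RDNA layout of 64 shader lanes per CU and 2 FP32 operations per lane per clock (one fused multiply-add). The function and dictionary names are made up for illustration, and the Arden clock is the approximate figure from the post, not a confirmed spec.

```python
def fp32_tflops(compute_units, clock_ghz, lanes_per_cu=64, ops_per_lane_per_clock=2):
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumes 64 lanes per CU and 2 ops per lane per clock (one FMA),
    which is the usual way these headline numbers are derived.
    """
    gflops = compute_units * lanes_per_cu * ops_per_lane_per_clock * clock_ghz
    return gflops / 1000.0


# CU counts and clocks as quoted in the post (clocks converted to GHz).
CONFIGS = {
    "PS4 mode (18 CU @ 0.800 GHz)": (18, 0.800),
    "PS4 Pro mode (36 CU @ 0.911 GHz)": (36, 0.911),
    "Leaked test mode (36 CU @ 2.0 GHz)": (36, 2.0),
    "Arden (56 CU @ ~1.675 GHz)": (56, 1.675),
}

if __name__ == "__main__":
    for name, (cus, ghz) in CONFIGS.items():
        print(f"{name}: {fp32_tflops(cus, ghz):.2f} TF")
```

Under these assumptions the 36 CU at 2.0 GHz mode works out to roughly 9.2 TF and the Arden figures to roughly 12.0 TF.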

We don't have any idea if that is what the final chip is, though, because that leak did not show RT and VRS, while it did show those things for Arden (the XSX GPU). Now that we know PS5 also has RT and VRS, the Github leak looks like it may have only been testing for BC, because it is missing final details. The thought, then, is that maybe PS5 has 3 SEs with 54 CUs and that the Github data only showed backwards compatibility testing.

Edit: a word


PS5 Speculation |OT12| - Aw hell, Transistor's running this again?

- Thread starter Mecha Meister



I think secretly Microsoft is hoping that PS5 will either be more similar to XSX or more expensive than $399. Sony is likely hoping to have the PS5 be around $399, and in order to do that they have to accept being behind XSX, but it means they will have the middle ground between XSS and XSX: cheap enough but powerful enough. There will be people who are absolutely price sensitive and those who prefer more performance, and Sony has probably accepted that they will lose those users to the Xbox ecosystem, but there are users who want the best of both worlds.

If Sony went with a more powerful system, it would significantly drive up cost and cede the mainstream, low-end and casual market to Xbox Series S.

Given that we have a Bloomberg report saying the BOM of PS5 is currently $450, it will be a $500 console. Just like the Series X.

The difference in BOM (Series X being higher) comes down to the fact that Sony manufactures a fair bit of the internals themselves, while MS has to source these from other vendors (Sony sound chip, Blu-ray player, I'm sure there's more, etc.). Both are going to be aiming 100% at the same target market, especially at launch, as Sony has already said PS5 is aimed at serious buyers interested in the latest tech. It's also who MS is aiming Series X at. I'm with Klee, Jason, Reiner on this still. The consoles will be close, within 10% of each other.

$500 is reasonable. I'd get both within 3-4 months of launch at that price. Curious if we see a marked difference in gaming as a whole because of the technological changes. I doubt it. But we will see.

$500 is very reasonable, I feel. I mean, the Zen CPUs and HW RT justify it to me already, before we even start talking TF. If one likes MS's first-party games, I would say buying the Series X is a no-brainer at that price.

I got a feeling we will get a $500 PS5 after the first Wired article with Cerny.

At one point they ask him about price, and nothing he says sounds like $399. He basically says "when we show it to consumers, when we show them what it can do, they will see the price is justified". For PS4, everyone took $399 as some sort of sweet spot. But I feel $500 is perfectly acceptable for what we're getting.

i hate working from home. forced to work on a laptop instead of a three monitor setup. kids running and screaming all around the house. wife thinking i can be helping her with chores like im on vacation or something. no, i cant go to kroger to get you cilantro.

AMEN. Same.

I just hope whatever the case may be, expanded storage is available day one.

Indeed. 1 TB is just pure suicide.

And I hope it supports SATA hard drives.

I just have a different standard for who I consider an insider, but we can disagree on that.

What does this even mean?

Klee made lots of claims that have all turned out to be true, aside from the PS5 Feb event, which could easily have been cancelled internally due to coronavirus.

He confirmed the leaked specs of XSX before they were official.

Schreier is literally the most respected journalist in the industry and is almost always right. He had also made claims about XSX and PS5, and all of his claims about XSX have also turned out to be completely correct.

So what on earth does "I have a different definition of insider and I'll refuse to believe these people for... reasons" mean?

Great post.

Timdog and Friends = insider.

I have not followed this thread too closely, especially when it gets very technical, talking about CUs and stuff.

I read Klee's posts in the threadmark of OT7, and so far he has been right about the specs. I don't really know where this "PS5 is going to be < 10TF" is coming from. You don't have to be an expert, a spy, an insider or a wizard to deduce that there is no way that the PS5 is going to have less than 10 TF.

However, I also don't see it being more than 12 TF. It is very likely (personally, a certainty) that the difference between the PS5 and Xbox is not going to be more than 10% as Klee wrote in OT7.

About the reveal, it is going to be very hard to pinpoint the date because of everything happening with the coronavirus. They could even cancel E3 if this thing keeps going, so even Microsoft will have to do something different (it's clear that MS will focus 80% on games, 10% on Game Pass/services and 10% on specs); they could do a Direct-style show if things go wrong.

Sony, on the other hand, has it harder: how (and where) can they announce it when everybody is cancelling events? Also, the California government is asking companies not to hold large-scale events with many people.

With that said, I think the announcement is getting closer (however it may happen), because they already announced release dates for their upcoming games (only GoT was missing) and there is no way that the PS5 will not be revealed at least 6 months in advance of launch. (I personally think that it should be 9 months in advance, but that would put the reveal this month, which is unlikely.)

That makes sense for the way we traditionally thought about GPU speeds, but then I'm hearing about how 2 GHz is not that crazy when it comes to RDNA 2 specifically. Is that kind of misinformation, and would 2 GHz still be a hurdle for RDNA 2 GPUs?

We'll have to wait and see. There are no RDNA 2 GPUs even out yet. 2 GHz shouldn't be an issue for RDNA 2 dGPUs, but we're working with the CPU and GPU combined in a single, really large chip, which is why we see CPU speeds below 4 GHz, and the GPU speed is unknown at the moment. Having everything in a single chip just makes it more complex, along with the thermal envelope of a console. It would be great if they can squeeze as much as possible out of the GPU side, so long as yields aren't affected.

Look - Matt said it isn't reliable. This little community went in circles on that stupid little leak for far too long even after it was called out.

If you're still choosing to believe in that leak, good for you, but clear your plate for the crow.

Hope you're right, although I can't imagine what type of hellscape this thread would become right after we'd get that type of official confirmation.

It would be beautiful.

We're not allowed to know anything about PS5. My guess is June.

I'll start with a question: considering that Claybook is probably the most GPGPU-intensive game of this whole generation, do we know how many ms of GPU time it dedicates to GPGPU per frame?

Regarding GPGPU in general, this generation had the worst CPU-to-GPU ratio ever by a country mile compared to previous generations. If this generation hardly used GPGPU, what are the chances that next gen will have heavy GPGPU usage, considering the new and powerful Zen 2 CPU? I doubt we will see much gameplay-related GPGPU use, especially Claybook levels of it. And even if a game uses GPGPU, these uses are usually very scalable. Resolution will probably do the trick for most games, but that doesn't mean other games won't need to scale back some things in order to run well on XSS. Take SVOGI, an example people really like. SVOGI is based on a voxel grid, and developers can easily use a less detailed grid with bigger voxels. So yeah, SVOGI doesn't scale with resolution, but it is scalable, and you can say that about basically any graphical effect.
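To make the voxel grid point concrete, here is a rough sketch of how the cell count changes with voxel size. It assumes a dense cubic grid, which is a simplification (a sparse voxel octree only stores occupied cells), and the 64 m extent and voxel sizes are made-up numbers, not figures from any game.

```python
import math


def dense_voxel_count(extent_m, voxel_size_m):
    """Number of cells in a dense cubic grid covering extent_m per axis.

    Hypothetical helper: real SVOGI implementations are sparse, so treat
    this as an upper bound on how the cost scales, not an actual budget.
    """
    cells_per_axis = math.ceil(extent_m / voxel_size_m)
    return cells_per_axis ** 3


if __name__ == "__main__":
    fine = dense_voxel_count(64.0, 0.25)    # 256 cells per axis
    coarse = dense_voxel_count(64.0, 0.5)   # 128 cells per axis
    print(fine, coarse, fine / coarse)
```

Doubling the voxel size cuts the dense cell count by a factor of 8, regardless of the render resolution.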

Re. Claybook, I believe it spent a quarter of its frametime on gpu physics, whether that was the extent of its GPGPU processing I don't know. Switch was 4x slower for them - the challenge was not architectural difference but the performance delta. They addressed it by dropping the framerate in half on Switch and spending months on optimisation to claw back the other 2x improvement needed.
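The Switch arithmetic above can be sketched as follows. The 4x gap and the halved framerate come from the post; the 60 fps starting point is only an assumption for illustration, and the helper names are hypothetical.

```python
def frame_budget_ms(fps):
    """Time available per frame, in milliseconds, at a given framerate."""
    return 1000.0 / fps


def remaining_speedup_needed(performance_gap, framerate_divisor):
    """Speedup still required from optimisation after relaxing the framerate.

    performance_gap: how many times slower the target hardware is.
    framerate_divisor: how much the target framerate was reduced by.
    """
    return performance_gap / framerate_divisor


if __name__ == "__main__":
    base_fps = 60.0   # assumption: the post does not state the framerates
    gap = 4.0         # "Switch was 4x slower"
    divisor = 2.0     # "dropping the framerate in half"
    print(frame_budget_ms(base_fps), frame_budget_ms(base_fps / divisor))
    print(remaining_speedup_needed(gap, divisor))
```

Halving the framerate doubles the per-frame budget, which leaves the other 2x to be found through optimisation, matching the figure in the post.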

My point in raising Switch ports in this argument is just the above: that kind of effort isn't something that will fit costlessly into a day-and-date pipeline, which is where we presume Xbox/Lockhart will reside. Now, Lockhart isn't quite 'that kind of effort', but the point is that reaching for Switch examples as an extreme that covers the more moderate situation on Lockhart doesn't convince, IMO. Switch-level efforts, or even half that, would doubtfully be costless integrations into the development schedules of the kinds of games looked at in those examples, whether in terms of impact on development or even perhaps the scope of the game.

Re. GPU usage trends, I think it's a function of more than relative CPU power (compiler/tools support being another factor) and, as I said earlier, I'd by no means argue that we'd otherwise suddenly have an explosion of worldspace GPGPU processing. But what I would say is that, whatever about any other factors, Lockhart will weigh against that possibility fairly heavily now, even in the relative minority of cases where it might have happened.

We're left to speculate why, if per Jason Schreier, 'some devs' think Lockhart would hamper them next gen. A change in GPU usage trends is one possibility. Maybe memory is another? I don't know. For those 'converting' folks, I don't think it's a conversation that can have a consensus outcome at this point ;)

With a potential recession on the horizon launching a $500 console might not be such a good idea. No one can or could predict the future though.

I'd say that instead of worrying about Lockhart holding next-gen games back, people should be more worried about a recession significantly slowing down the adoption of next gen. In a recession people are very price sensitive and generally don't spend much on luxury goods. A $500 console might be a hard sell. I fear that if we really hit a hard recession because of COVID-19, we're going to see a very long cross-gen period.

This is shit.

At runtime it's possible for AMD to switch to Wave64 or Wave32 for shaders, use WGP or CU mode, and to reserve CUs and lock out CUs for a certain task.

I think the question is rather whether AMD can power gate a shader array per SE on RDNA1/2, so it wouldn't burn extra power for BC.

You definitely seem to have greater technical knowledge than I do. What do you mean by "at runtime" here? Would a restart of the process be required? Would a hardware reboot be required?

Welcome to the club. Nobody is happy about it. Sony won't even afford people a generic PR statement or any kind of update. My brother personally thinks something might be wrong at Sony behind the scenes and that this is why they've remained dead silent month after month, but the end result is that nobody knows what is happening with PS5. Not even a single good leak or anything. It's like the thing doesn't even exist at this point. lol

This is kind of a circular argument, especially when we are talking about multiplatform games. You couldn't program use of GPGPU into your game, for obvious reasons, if you were designing for both Xbox and PS4. So it would also stand to reason that we would see a significant difference in exclusive titles, which is what we do see. Titles like DriveClub used the extra power in various ways, and we also saw Killzone Shadow Fall and Infamous, which used it to perform sound reflections. I think the point I'm making, even with Claybook, is that the extra performance doesn't "have" to be allocated to visuals so that it can scale down to a lower system. I do think the CPUs this time around are much better, but we also have 7 years to go with the hardware and whatever baseline we establish upfront.

We went into this generation with a low CPU baseline that really began to hurt towards the end of it; we will be starting a whole new 7-year period with a 4 TFLOP GPU baseline.

edit: actually as I'm thinking about it we are essentially talking an exact replay of how it worked last gen. Games were designed for the Xbox base system and scaled up, not the other way around. We already have an example with the current gen where if we have similar CPU but different GPUs that most will simply be resolution difference and maybe a frame rate increase with some other added effects and call it a day. We see this already with the Xbox One OG up to the X1X being 5x more powerful.

This generation we have examples of the Jaguar CPU being the "baseline" for core game system development. What are your examples of the Xbox GPU holding back core game systems? All it did was hold back visuals on XB1, as resolutions and various graphical effects needed to be toned down or removed in order for games to run on the system.

Visual assets and systems are created for max settings, then downscaled for weaker hardware.

Again I'm arguing that non-scalable elements don't get designed into most games because of the very fact that they are not scalable. Most elements being scaleable is strictly because the platform design dictates that to be so, not because the elements are always going to be scalable. You're taking the fact that things are designed to be GPU scalable as evidence that most anything you want to run on GPU will be.

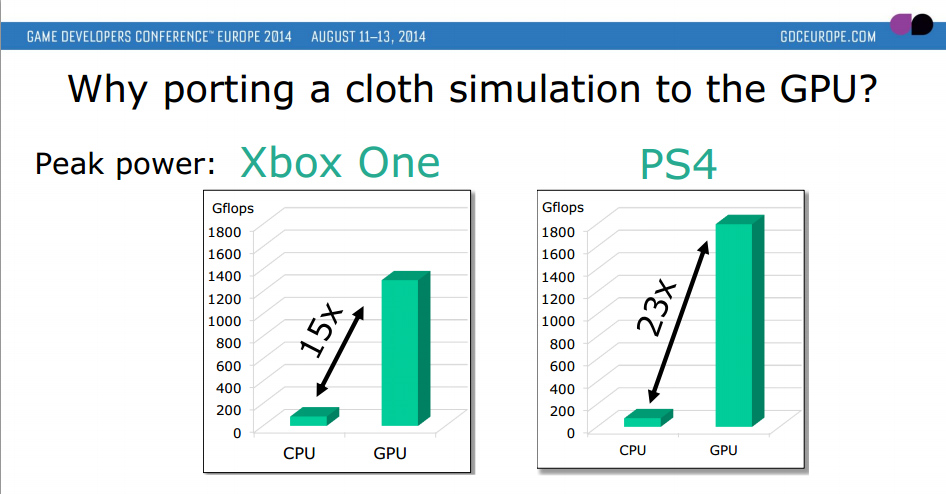

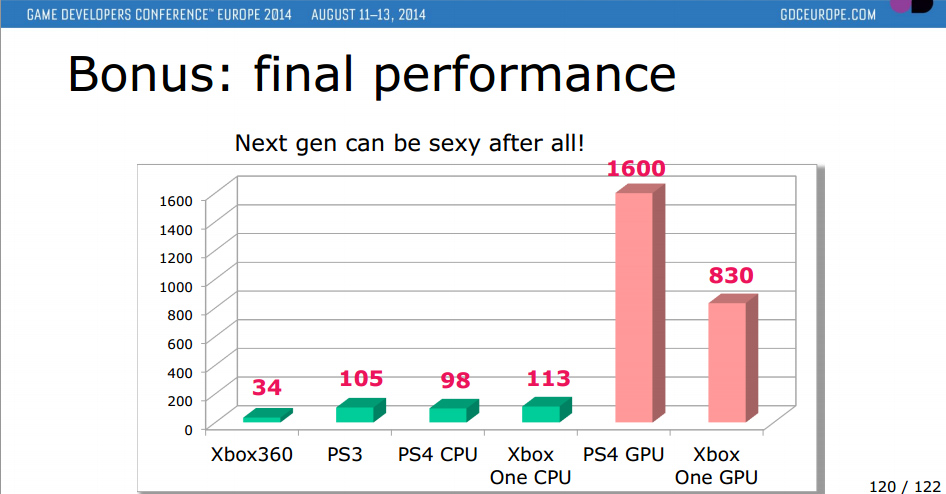

I think I'll just link this Ubisoft test on GPGPU cloth simulation:

Ubisoft GDC Presentation of PS4 & X1 GPU & CPU Performance

Ubisoft's GDC 2014 document provides insights into the performance of the Playstation 4 and Xbox One's CPU and GPU and GPGPU compute information from AMD and other sources.

www.redgamingtech.com

We can see there are distinct workloads that benefit from GPGPU, but this also means we cannot plan a gameplay mechanic around the higher level of GPGPU compute of this cloth simulation, the obvious reason being the vast gap in those workloads. Therefore any use of cloth simulation would have to NOT affect gameplay or design and be relegated to being visual and scalable only.

The only reason these effects are, as you put it, sliders on a scale is because developers know up front they cannot rely on the higher levels of performance because of the vast disparity between systems and design their gameplay elements around the limitations of their lower baseline systems.

Isn't this just revealing the fallacy of your argument? The article you posted explicitly mentions porting the cloth physics task to the GPU in part because CPUs were too weak this gen and would handle such a task inefficiently.

Cloth physics are a superficial visual element; as such, it's most prudent to use a GPU to handle them, especially now with how powerful GPUs have become. The result is a feature that can be scaled down or removed without any impact on core gameplay. Developers aren't designing cloth physics around a weak GPU and scaling up. They are designing the cloth physics system for the strongest hardware, then toning it down as necessary for weaker hardware.

Your reasoning is circular. Cloth physics are a perfect candidate to offload to GPUs BECAUSE they aren't something devs typically intend to impact core systems anyway. As such, when we don't see cloth physics affect gameplay, it's not because of the limitations of weaker GPUs in the hardware target; it's most likely because no one wants to make cloth physics a core gameplay system.

A dev that wants cloth physics to be part of a core gameplay system (if such a developer exists) is limited by this gen's weak CPUs, since that's where it makes the most sense to handle physics that have a game-state effect.

Offloading such tasks to GPUs means that they would inherently be scalable, and either won't impact core systems or the impact on core systems will be scalable in nature. The improved CPUs on next-gen hardware will allow such core physics systems to move back to the CPU if necessary.

I see we aren't going to make headway on this so I will just draw your attention to the bolded, explain the core difference between our arguments, and leave it at that:

I'm arguing because GPUs have wide variances in performance, gameplay elements are not run on the GPU.

You're arguing gameplay elements are not run on the GPU, so there is no reason to worry about wide variances in performance.

While both are true, my proof is that when exclusives are made, gameplay elements are offloaded to the GPU, which happens in a uniform closed system, or, in the case of the PS3, GPU elements are offloaded to the CPU. In fact, even in this generation we've seen things such as AI and physics offloaded to the GPU. It's not a rarity at all.

If viewing the XSS/XSX through the lens of multiplatform games, which are designed with wide variance in mind, then no, it wouldn't matter, as the game wouldn't be designed like that in the first place.

If viewed through the lens of platform-exclusive games, then a closed system without performance variance will make better use of its guaranteed resources than a game designed to scale up and down based on performance variances.

How prevalent we will see either of these scenarios is up for debate, maybe only one or two PS5 exclusives take advantage of the higher baseline for gameplay elements, maybe they all do, maybe none of them do, and I guess we won't know until the generation and games release.

I'm glad to see the argument slowly shift away from the notion that the Lockhart GPU will be generally informing baseline core systems, or that devs design GPU functions for the worst hardware and then scale up. The reality is that with multiple hardware targets, tasks that are scalable and nonessential to core gameplay systems are offloaded to the component that has the widest variance between player systems: the GPU. This allows devs to scale down without impacting gameplay.

It's possible that some PS5 exclusive(s) will utilize the GPU in some unscalable fashion, and depending on how much frametime this function requires, this system wouldn't be replicable in Microsoft's ecosystem. But this is highly unlikely and stands to be incredibly rare if it ever happens; it certainly won't be prevalent enough to dictate hardware strategy.

Transistor would finally get to activate the moderation super-weapon known only as "Death Blossom".

June sounds like the less likely month now, with TLOU2 being released on 5/29 and GOT on 6/26

More chances for March, the period between 4/20 and 5/15 and, less likely, July

Yup you would think but week after week, month after month, Sony keeps proving again and again that they are hellbent on the "no news" bandwagon. Not even a hint of an upcoming event. For all we know, maybe they are considering postponing the system into 2021 due to the perceived upcoming hardships with the Coronavirus. Who knows?

I don't expect a huge PS5 blowout from Sony this month (we would probably have heard if there was an event), so I am currently 50/50 between the time period you listed of April to May and July. It's going to be one of those.

In my mind, I see TLOU2 and Ghost of Tsushima as really well-known upcoming titles, so would their sales really be hurt because of next-gen talk? Especially in the case of TLOU2, because of its fan following, but even Ghost of Tsushima looks next gen to me at points visually, so I don't think that consumers will hold off on it because of a new console.

But what would be the point in waiting until June to have a reveal that close to your exclusives when you can just have it a month before that?

Also, are they going to talk about BC and upgrade patches during the PS5 reveal? That would be pretty awkward to say there are better versions of their games in the very same period they are trying to sell them

Why not!

Sorry I'm late everyone! I got hung up at the bus stop in an absolute downpour. I made some new friends, though! Say hi everyone!

Well, it's a simple night tonight. JamboGT and EsqBob, please step forward. For the crimes of failing to guess the PlayStation 5 reveal date, I hereby sentence you to the following avatars:

JamboGT, this avatar is courtesy of a certain Era staff member. I just couldn't say no when I saw it.

EsqBob, I think it's time to get yourself a new ride.....

Well, that's it folks. Like I said, easy night. Come back tomorrow for more fun. And now, I sleep.

Aged like fine milk.

PlayStation will not participate in E3 2020

The company will instead attend 'hundreds of consumer events across the globe'

www.gamesindustry.biz

"We will build upon our global events strategy in 2020 by participating in hundreds of consumer events across the globe."

Their plans have definitely changed.

Okay, just caught up with the thread. It still seems we have the "is GitHub real or not" bloodbath going down, so here's my stupid 5am, I've-driven-all-night theory. The GitHub leak has some real data in it, but it's super hard to sift through because the same intern who leaked it also seems to have put things in the wrong place, and it's all a kerfuffle. So we're all going in circles fighting about it, and then somehow you have to pick a side between GitHub and no GitHub.

Seems to me we're kind of acting like the AMD intern who made the GitHub doc... in other words, we're sloppy as hell. We don't need to say "I can't wait for it to be disproven, so I can laugh" or "I believe GitHub over Jason S. or Klee"; it isn't that simple. Yes, they're both shooting to beat Stadia's TF count (which, let's be honest, Stadia is on life support, so everything beats Stadia at this point). The Bloomberg report said the BOM has increased to $450, which maybe means they were hoping for $400 and it shot up to $450, or maybe Bloomberg is pulling a Bloomberg and doesn't know what they're talking about. Maybe team 14 TF with HBM2 is the truth, or maybe Cerny built a box with around 9-10 TF of RDNA 2 to hit an internal price target from his corporate overlords.

What it all comes down to is.... both boxes are RDNA2 and no matter what box you grab you'll be in for a treat. So yo let's all not catch a ban before the actual reveal because it's not worth sitting a month out over speculation.

I have one question: who is Matt? What did he leak?

Thank you in advance.

Matt is a mod, if I recall correctly, and he said something to the effect of "I'm glad we all moved past github."

At which point team anti-github said "SEE WE KNEW IT WAS A LIE" and then team github said "WE NEED CONTEXT ON WHAT THAT MEANS, SO IT STILL COULD MEAN GITHUB SPEAKS THE TRUTH"

Edit: also, Matt is the terror that flaps in the night and seems to have his ear to the streets. I do totally know he is a mod and his avatar pic is Darkwing Duck.

He's a mod, but he knows things, if everyone thought he meant something other than "finally this thread can be more than GitLul", right?

I still think they won't say anything, full-reveal-wise, until September, then launch in November.

I think that would be insane, but I'd love to see them try.

To be honest, friend, I don't quite know, and I wouldn't want to speak for him because that's how misinformation and rumors become the next hot YouTube "SPEC LEAK." I would figure that a person who rocks Darkwing Duck wouldn't muck about.

Edit: Everything that gets stated in these threads or on podcasts tends to get a tad misconstrued into whatever suits someone's narrative. So I would keep what Matt said in mind, but I don't feel comfortable elaborating on his statement because, like everything else in this thread, a one-off comment can be turned into whatever the reader wants to believe it means.


Being more clear, he said github was the truth in May 2019 but it is not the final truth.

Thank you so much. I knew there was like a confirmation of "yeah, the data sheet is real," but after that the great github vs anti-github war kicked off and everything moved rather rapidly. That being said, I always enjoy your posts; they're always a good read.

Edit: OH GOD OH GOD PLEASE DON'T THINK THIS IS ME SAYING I'M TEAM GITHUB AND IT'S THE GOSPEL! I'm just saying it was confirmed that an intern screwed up, and that up to May 2019 it had some information but not the final.

I am the equivalent of Switzerland in the github wars.

PS5 reveal as soon as MS opens preorders, or suddenly earlier..... absolutely not later!!

It's beginning to feel like Sony won't budge no matter what Microsoft does. They aren't making any sense at all.

I hope Sony talks soon because it's all just getting a bit shit now.

Until this situation with the virus is contained we have to accept PS5 might be delayed so revealing it before that point just isn't very likely.

Given the great lineup we have if ever there was an ideal year to delay a console this would be it.

I wonder, is there any chance that Zen 2 SMT will be disabled when handling PS4 titles? I don't think that those old unpatched titles have the capability to automatically utilize all the cores/threads present in the PS5.

PS4 Pro has a 2.13 GHz CPU and a 36 CU, 911 MHz GPU.

When playing unpatched base PS4 games with Boost Mode disabled, the CPU runs at 1.6 GHz and the GPU only activates 18 CUs and runs them at 800 MHz to achieve practically perfect compatibility.

When Boost Mode is enabled, the CPU runs at the full 2.13 GHz speed and the GPU runs at the full 911 MHz speed, but the number of active CUs remains at 18.

When a patched game is played, the CPU runs at the full 2.13 GHz and all 36 CUs are active and run at 911 MHz.
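For anyone who wants the three modes above side by side, here is a rough Python sketch. The clock and CU numbers come straight from the post; the names `CompatMode`, `PS4_PRO_MODES` and `select_mode` are made up for illustration and are not anything Sony ships. The TF figure assumes the usual GCN math of 64 shader lanes per CU and 2 FLOPs per lane per clock, so treat it as back-of-the-envelope only.

```python
from dataclasses import dataclass

# Assumption: GCN-style CU with 64 shader lanes, each doing one fused
# multiply-add (counted as 2 FLOPs) per clock.
LANES_PER_CU = 64
FLOPS_PER_LANE_PER_CLOCK = 2


@dataclass(frozen=True)
class CompatMode:
    """One PS4 Pro operating mode, using the numbers quoted in the post."""
    name: str
    cpu_ghz: float
    active_cus: int
    gpu_mhz: int

    def tflops(self) -> float:
        """Theoretical peak FP32 throughput in teraflops."""
        flops = (self.active_cus * LANES_PER_CU
                 * FLOPS_PER_LANE_PER_CLOCK * self.gpu_mhz * 1e6)
        return flops / 1e12


# Hypothetical lookup table keyed by (game_is_pro_patched, boost_mode_enabled).
PS4_PRO_MODES = {
    (False, False): CompatMode("unpatched, Boost Mode off", 1.6, 18, 800),
    (False, True): CompatMode("unpatched, Boost Mode on", 2.13, 18, 911),
    (True, False): CompatMode("Pro-patched", 2.13, 36, 911),
    (True, True): CompatMode("Pro-patched", 2.13, 36, 911),
}


def select_mode(patched: bool, boost: bool) -> CompatMode:
    """Pick the mode the post describes for a given game and setting."""
    return PS4_PRO_MODES[(patched, boost)]


if __name__ == "__main__":
    for key in [(False, False), (False, True), (True, False)]:
        mode = select_mode(*key)
        print(f"{mode.name}: {mode.active_cus} CUs @ {mode.gpu_mhz} MHz, "
              f"CPU {mode.cpu_ghz} GHz, ~{mode.tflops():.2f} TF")
```

The point of laying it out as a table is that 18 CUs at 800 MHz and 36 CUs at 911 MHz are exactly the kind of numbers that would show up in a compatibility test, whatever the native configuration turns out to be.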

The only logical conclusion to make is, and I dare say Occam's Razor says, that any information about the PlayStation 5 utilizing any of the aforementioned numbers, whether clock speeds or CU counts, is only and purely indicative of compatibility modes for PS4 and PS4 Pro games.

Preachhhhhh

Yeah, I thought when I put my avatar bet on May 1st I was "playing the long game," but it turns out that with the outbreak and such I don't have any clue about a reveal or a launch date besides "holiday 2020".... which, if it's not contained, I can see both boxes being delayed to Q1 2021 or getting a super small launch in key markets.
