(NVIDIA) Cyberpunk 2077: Ray-Traced Effects Revealed, DLSS 2.0 Supported, Playable On GeForce NOW

- Thread starter kostacurtas

- Start date

Is there any chance DLSS will one day be applicable to any game without specific development? The same way you can force antialiasing in the Nvidia settings, for example? Or will all future games systematically implement it?

If you take DLSS 1.0 and use a generic neural network, it could be applied to any game at the driver level. The problem is that DLSS 1.0's image quality made the usefulness questionable, even when using a game-specific model.

I think that integrating it into the engine is probably always going to be more efficient than a post-processing solution, simply because the network has access to more information. But if we get to a situation with future hardware where they have tons of tensor cores that are otherwise going unused, maybe we would see a return of the post-processing approach.

It really comes down to what kind of RT performance RDNA 2 packs. Those cards can run some of those RT effects, but the lack of scaling tech like DLSS 2 will limit choices.

New upscaling techniques could be in the works. I'm not saying we're getting DLSS, but maybe something that can achieve a similar boost.

That seems almost impossible, because there's no way a software solution can match a solution that is run by dedicated hardware. That wouldn't make any sense. DLSS isn't magic; it's run by Tensor cores that are otherwise doing nothing.

It seems impossible because it is. nVidia has dedicated tons of R&D into getting DLSS running on hardware (Tensor cores), which leaves the CUDA and RT cores free to focus on graphics processing instead of upscaling. A software solution would just be what we have now with checkerboarding, and not at all the same thing.

This is once again nVidia using its position as market leader and having something like 10x the R&D spend AMD has to simply be ahead of the game and untouchable.

Hmm ok I'm super new to PC gaming so excuse me if this is obvious, but I'm using an RX 5700XT. Does that support RDNA 2?

No. RDNA 2 architecture cards aren't out yet; they will release a bit later this year. They will be going head to head with NV's 3000 series, which also releases later this year.

RDNA 2 architecture cards will be AMD's first GPUs to support ray tracing.

Ray tracing features in Cyberpunk 2077 can be run on those cards because they use a standardized implementation through DirectX 12, so they aren't some exclusive NV stuff.

Microsoft, NV and AMD developed the ray tracing solution for DX 12 together.

Edit: DLSS 2 is exclusive to Nvidia as it's their proprietary reconstruction tech

Thanks. GeForce Now it is. Control with full RT looks great on the service.

This game requires a 3080Ti or higher, clearly!

And even then it will be barely enough for 1080p@60 FPS w/ DLSS2 apparently.

nVidia is going to sell lots of cards bundled with this game. Lots and lots and lots.

What are you talking about? The 2080Ti was running it at 60+ fps with DLSS2 at 1080p, with all the RT effects and graphics settings cranked up, at the local events. Not ideal, but it is achievable on today's hardware.

Ray traced reflections were disabled.

I have a 1080 Ti and I'm growing to resent it purely for the lack of DLSS hahaha. God I want that feature so bad. But I absolutely cannot justify upgrading my GPU when next gen consoles are a few months from release.

So few games support it right now, why even bother. I love the tech too but it is definitely not the reason why I hate my 1080. (I actually hate my 1080 because I want more VRAM).

SkillUp claims he had RT in the demo he played (and that it's the "best implementation he's ever seen")

Pcgh had the same build which did not have RT reflections, but I do not doubt even that it looks dramatically better and different with the other RT effects on.

I hope (dream) that when they announce the 3000 series NVIDIA just drops a bomb at the end and tells us: oh btw, DLSS 2.0 is now available for every game, like, right now. New driver is up for download, it's part of the Control Panel.

Because that's what needs to happen, every single game needs this and hopefully they are pushing real hard to implement it in everything.

Yeah that was pointed out to me. I didn't even consider that it could be only partial RT effects. With a 2080S, I'm a little more apprehensive than I was before hearing that, but I'm sure I'll be fine with your optimised recommendations.

I really hope my poor 2070 can run this at 60fps with everything on. Give me those futuristic puddle reflections!

With DLSS 2.0 you maybe could, just not at a crazy high resolution.

That's fine, my monitor is 1080p and far from getting retired. Heck I may even try 720p with DLSS 2.0 if it looks passable.

I'm gunning for twice that framerate, at least. I'm probably not going to get what I want, but damn it I'm gonna try. After playing DOOM Eternal and Gears 5 at 120fps+, super high framerate is the thing I prioritize above all else these days.

Get a CRT, they look good at lower resolutions and get crazy fps. You just have to have a giant monitor on your desk.

I'm okay if my 2060 does 540p with DLSS 2 and 60fps at a mix of medium and high

No ultra in my future lol

If I could find a high quality widescreen CRT for my PC, locally, I'd be on it no matter the price. That shit is my white whale.

See, when I was younger and my older brother moved out, I gave him my 26" widescreen Trinitron WEGA so he'd have a TV to use. I of course chose to keep my shitty LCD because it was bigger.... I didn't understand, back then! Wii and PS2 games never looked as good on any other screen I owned after that.

Took me almost 10 years to find another one locally, but last year I finally did it. Someone nearby was giving theirs away, with the stipulation that whoever took it had to bring the equipment needed to physically move that 300-pound beast out of their home. 34" widescreen Trinitron WEGA in my basement right now cuz that shit is way too heavy to put on any desk, but I love it, it's the TV I use to play Smash, pre-gen-8 fighting games, and anything retro. Doesn't play very nice with PCs though, unfortunately.

I have never used streaming, so is the game on GeForce NOW capped at 1080p, but running at 60 FPS with what I'm assuming is all the settings cranked, including the ray tracing etc.?

I could forgo getting a 3080 this fall and just play on this? I have gigabit internet; I'm assuming that will work well enough for this.

You can try 540p with DLSS and it will look just as good as native 1080p.

3440 x 1440 / (Very) High settings / RT / DLSS 2.0

Yeah... I think 2080Ti is toast, give me that 3090.

Control hovers around 60-70fps with this setup, though dips into high 40s in select spots. It's fortunate DLSS 2.0 is as good as it is.

My hope is to find an affordable 3070 and use 1080p---DLSS2---> 4k. I'll tweak the RT and graphic settings from there to get 60fps.
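To put rough numbers on the resolution jumps mentioned in this thread (540p to 1080p, 720p to 1080p, 1080p to 4K), here is a minimal sketch of the pixel-count arithmetic. The function name `pixel_ratio` and the resolution table are assumptions made up for this illustration (including treating "540p" as 960x540 at 16:9); none of it comes from NVIDIA. The ratio only says how many fewer pixels are rendered before reconstruction. It does not predict actual framerate, since the reconstruction pass has its own cost.

```python
# Illustrative arithmetic only. The names and the table below are assumptions
# for this sketch, not part of any NVIDIA API.
RESOLUTIONS = {
    "540p": (960, 540),     # assumed 16:9
    "720p": (1280, 720),
    "1080p": (1920, 1080),
    "4K": (3840, 2160),
}


def pixel_ratio(render, output):
    """Return how many output pixels there are per rendered pixel."""
    render_w, render_h = RESOLUTIONS[render]
    output_w, output_h = RESOLUTIONS[output]
    return (output_w * output_h) / (render_w * render_h)


for render, output in [("540p", "1080p"), ("720p", "1080p"), ("1080p", "4K")]:
    ratio = pixel_ratio(render, output)
    print(f"{render} -> {output}: output has {ratio:.2f}x the pixels")
```

So 1080p upscaled to 4K and 540p upscaled to 1080p both render a quarter of the output pixels, while 720p to 1080p renders a bit under half.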

I want to see DLSS in every big release.

In theory yes. Although from what you have mentioned, it seems like you haven't used a service like this before?

In practice, there are quite often issues with these streaming services, whether that be degraded quality (macroblocking etc.), stuttering, or heavy latency.

Connection quality often makes surprisingly little difference. I advise testing the waters first, to check you would actually be happy with the experience. Native hardware definitely still has quite the edge for me.

In practice most people make streaming services suck by never altering their own settings to handle them better.

You can't do much about your bandwidth fluctuating, but most never turn off, tune, enable, or disable at least 4-7 settings that utterly crap on their input latency until it's balanced for their environment.

You don't need AQM for streaming services, but GeForce NOW and Stadia improved once I dealt with interrupt moderation and busy polling. The services are fine if you can keep a solid connection and get rid of the laggy mechanisms that are present on your end. Everyone has them, considering most people use Linux routers with older kernels, which aren't all that great compared to 4.x or 5.x kernels that have much better network subsystems.

CP2077 very well may end up the Geforce NOW poster child.

Will be very interesting to see if it is the better option than my gaming laptop, graphics setting and performance wise.

I have a desktop i7-9700K (95W TDP), RTX 2080 (200W TDP) and 16GB RAM. My internal screen is only 1080p.

Get those Ampere farms up and running, Nvidia. The world demands it.

Watching the trailer really made me wanna pick up a 3840 x 1600 ultrawide to play it. I hope I'll be able to get at least close to 100fps with RTX and DLSS 2.0 on maxed settings with a 3080ti, but I'm doubting even that will be able to run Cyberpunk at that resolution lol.

DLSS and integer scaling too. I want that clean 1080p 120hz on my 4K TV while I wait for HDMI 2.1 sets to arrive.

Oh I'm actually not familiar with integer scaling. Could you tell me a bit about it?

So few games support it right now, why even bother. I love the tech too but it is definitely not the reason why I hate my 1080. (I actually hate my 1080 because I want more VRAM).

Hahahaha valid. I guess part of this is the promise of DLSS 2.0, since they're working to make it compatible with every game even if not specifically trained on that title. Although who knows when it will get there and how successful it will be.

I guess I just suspect we'll see a lot more DLSS adoption in the upcoming years because of how integrated reconstructed upscaling will be in next gen consoles. I mean, what do I know? But I feel like with reconstruction techniques being integral to enabling their advanced ray tracing features on consoles, devs will seek out those solutions on PC as well.

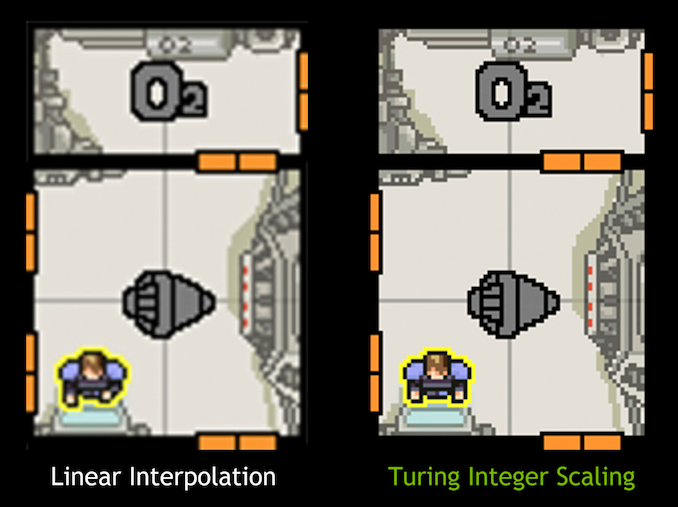

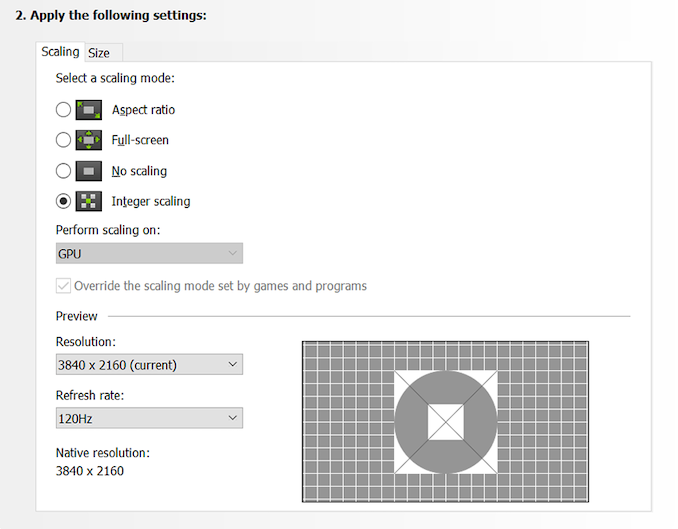

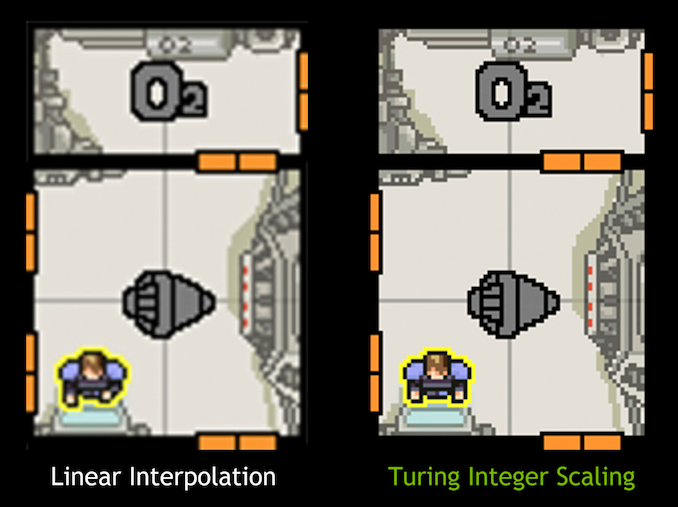

In a nutshell, integer scaling makes it so that 1080p looks native on a 4K display. Every 1 pixel becomes 4 pixels (a 2x2 block), so it looks exactly like a native image without any upscaling blur. It's a feature exclusive to RTX cards (why this wasn't possible before, I don't know).

For people like me who like to run at 1080p/120fps on 4K TVs, it's a game changer. I've just been hesitant to upgrade my GPU since the 3000 series has been right around the corner for seemingly forever.
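For anyone curious what "every 1 pixel becomes 4 pixels" means in practice, here is a minimal sketch of integer scaling as plain pixel duplication. It is only an illustration of the idea, not how the driver or the GPU's scaling hardware actually does it; the function name `integer_scale` and the list-of-lists image format are assumptions made up for this example.

```python
def integer_scale(image, factor):
    """Upscale a 2D grid of pixel values by duplicating each pixel.

    `image` is a list of rows (hypothetical format for this sketch).
    Each source pixel becomes a factor x factor block, so no new
    colours are invented and nothing gets blurred.
    """
    if factor < 1:
        raise ValueError("factor must be a positive integer")

    scaled = []
    for row in image:
        # Repeat every pixel horizontally...
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # ...then repeat the widened row vertically.
        for _ in range(factor):
            scaled.append(list(wide_row))
    return scaled


tiny = [[1, 2],
        [3, 4]]

for row in integer_scale(tiny, 2):
    print(row)
# [1, 1, 2, 2]
# [1, 1, 2, 2]
# [3, 3, 4, 4]
# [3, 3, 4, 4]
```

With a factor of 2, a 1920x1080 frame maps exactly onto 3840x2160, which is why 1080p on a 4K panel is the clean case.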

Holy shit, I had no idea it didn't already work like that. I've always felt 1080p looked especially blurry on my 4k display. Like, more so than it would have on a 1080p one. Thought it was just my imagination though.

So would this also be applied after DLSSing from 720 to 1080 on a 4k display? ("Applied" might be the wrong terminology if this is just the integrated output method, but I don't know.)

It's a feature exclusive to RTX cards (why this wasn't possible before, I don't know).

It uses the tensor cores pretty extensively which aren't available on pre-RTX cards.

As long as your output resolution is 1080p it'll evenly multiply to a crisp image on a 4K display. Same thing for a 720p image on a 1440p display.

I don't know why it wasn't possible before, but it's a new setting on RTX cards.

Right, but... why? I don't understand why it's so intensive. Seems like it should be easier and cheaper than literally any other upscaling method.

Why does it need tensor cores for this task? I'm genuinely curious as to how the tech works.

And if it uses the tensor cores extensively, what's the impact on performance? (If you were simultaneously running other tensor core tasks like DLSS and RT.)

I actually need to correct that. The integer scaling is done in an updated hardware-accelerated scaling filter that is available in the RTX line of cards as well as the GTX 16 series.

Gamescom Game Ready Driver Improves Performance By Up To 23%, And Brings New Ultra-Low Latency, Integer Scaling and Image Sharpening Features

Apex Legends, Battlefield V, Forza Horizon 4, Strange Brigade and World War Z now run even faster, retro and pixel games look better with Integer Scaling, modern games look sharper with NVIDIA Freestyle, and input responsiveness can be improved with our new low latency modes.

www.nvidia.com

Something to note with integer scaling is that if your screen's resolution isn't a whole-number multiple of the game's resolution, you end up with black bars around the image.
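The black bars fall out of simple arithmetic. Here is a rough sketch, assuming the scaler picks the largest whole-number factor that still fits the screen and centers the result; the function name `fit_integer_scale` is made up for this example, and the actual driver behaviour may differ.

```python
def fit_integer_scale(src_w, src_h, dst_w, dst_h):
    """Return (factor, bar_x, bar_y) for integer scaling onto a display.

    Assumption for this sketch: use the largest whole-number factor that
    fits in both dimensions and center the image. bar_x and bar_y are the
    black bar sizes in pixels on each side (left/right and top/bottom).
    """
    factor = min(dst_w // src_w, dst_h // src_h)
    if factor < 1:
        raise ValueError("source image is larger than the display")

    bar_x = (dst_w - src_w * factor) // 2
    bar_y = (dst_h - src_h * factor) // 2
    return factor, bar_x, bar_y


print(fit_integer_scale(1920, 1080, 3840, 2160))  # (2, 0, 0): 1080p on 4K, no bars
print(fit_integer_scale(1280, 720, 2560, 1440))   # (2, 0, 0): 720p on 1440p, no bars
print(fit_integer_scale(1920, 1080, 2560, 1440))  # (1, 320, 180): 1080p on 1440p, bars
```

In the last case 1440p is only 1.33x of 1080p, so the image stays at 1x and sits in the middle of the screen with bars on all four sides.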

For some comparisons check out:

Integer Scaling Explored: Sharper Pixels For Retro And Modern Games

Retro gaming is back with a vengeance, and new driver features from Intel and Nvidia fix the blur and let you get the sharpest image possible from

I've always wondered for like 4 years now why it wasn't doable. Seems like a rather straightforward way, as you are effectively making 1 pixel the size of 4 pixels by duplication rather than by approximation like linear scaling (which seems less straightforward in comparison), at the very least when talking about 2x and 4x scaling. And today I find out that it's doable, but only on Turing.
