It depends on the use case, but in this example, to a certain extent, yes.

Let's say we have a 60 FPS game and we do the rasterization and pixel shading at 1920x1080, but the HW is not fast enough to hold 60 FPS in all cases. So we implement dynamic resolution scaling, an option several games currently use.

If the frame rate drops below a certain threshold, our raster/shading rate goes down from 1920x1080 to 1440x810 to maintain 60 FPS.
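To put a number on that drop, here is a minimal sketch of the arithmetic. The helper names `pixel_count` and `scaled_resolution` are made up for this illustration and are not from any engine or API; the 0.75 per-axis scale is simply what takes 1920x1080 to 1440x810.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels that get rasterized and shaded at this resolution."""
    return width * height


def scaled_resolution(width: int, height: int, scale: float) -> tuple:
    """Scale both axes by the same factor (hypothetical helper)."""
    return round(width * scale), round(height * scale)


native = (1920, 1080)
reduced = scaled_resolution(*native, 0.75)  # (1440, 810)

# Fraction of the native pixel work that remains after scaling down.
ratio = pixel_count(*reduced) / pixel_count(*native)  # 0.5625
```

So a 25% cut per axis leaves roughly 56% of the pixels, and with plain resolution scaling that cut hits rasterization and shading equally.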

This leads to more pixelation in general: polygon edges appear more pixelated, and surface detail also appears more blurry/pixelated.

With VRS you could go for a nicer compromise.

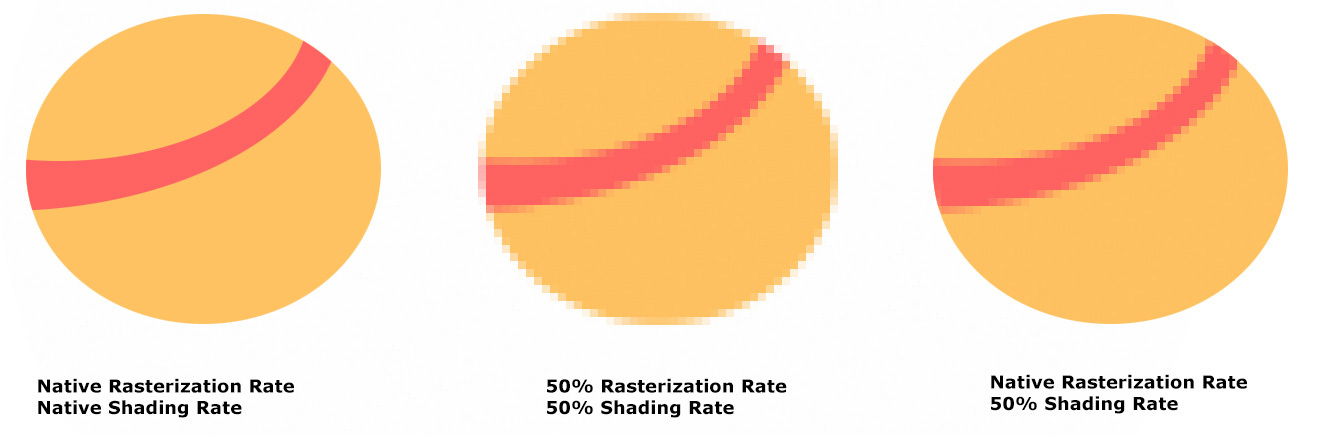

You can still rasterize at 1920x1080, so your polygon edges don't get more pixelated and your geometry detail stays sharp, but you reduce the shading rate, which makes only the surfaces of the polygons more pixelated/blurry.
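A rough way to see the trade-off in numbers is sketched below. It assumes one uniform coarse shading rate over the whole frame and ignores the extra invocations real hardware needs along triangle edges, so treat it as a back-of-the-envelope estimate. The function name `shading_invocations` is hypothetical.

```python
import math


def shading_invocations(width: int, height: int, rate_x: int = 1, rate_y: int = 1) -> int:
    """Approximate pixel-shader invocations when one invocation
    covers a rate_x by rate_y block of pixels (uniform rate assumed)."""
    return math.ceil(width / rate_x) * math.ceil(height / rate_y)


# Dynamic resolution scaling: everything runs at 1440x810.
drs_shaded = shading_invocations(1440, 810)           # 1166400

# VRS: still rasterized at 1920x1080, but shaded once per 2x2 block.
vrs_shaded = shading_invocations(1920, 1080, 2, 2)    # 518400

# Full rate for comparison.
full_shaded = shading_invocations(1920, 1080)         # 2073600
```

In this simplified model a 2x2 rate shades even fewer times than the 1440x810 frame, while edge coverage is still resolved at the full 1920x1080.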

Or as a simple illustration:

In most cases VRS is about saving performance by lowering the shading rate without too much visual impact.

You could also go the other way around, if the HW and API are flexible enough, and increase the shading rate for fine details while keeping the rasterization rate lower.

In the best case it's just a very flexible tool that lets the developer increase or decrease the shading rate based on numerous factors.

You could couple the shading rate to a certain region (useful for VR rendering, where the focus point stays sharp while you save performance in regions where the user wouldn't notice it anyway), to certain objects/surfaces (very homogeneously colored surfaces, like a blue sky or dark ground, where a lower shading rate wouldn't be very visible), to movement speed (lower the shading rate when motion blur kicks in, so you get the effect for free and the user doesn't notice), to distance, etc.
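As a toy illustration of combining such factors, the sketch below picks a shading rate per screen tile. Everything in it is an assumption made for the example: the `TileInfo` fields, the thresholds, and the rate table are invented, and real implementations (and the actual D3D12/Vulkan interfaces) look different.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class TileInfo:
    focus_distance: float  # 0.0 = at the focus point, 1.0 = far periphery (hypothetical scale)
    contrast: float        # 0.0 = flat color, 1.0 = very detailed (hypothetical scale)
    motion: float          # screen-space speed in pixels per frame


# One shader invocation covers this many pixels in x and y.
RATES = [(1, 1), (2, 2), (4, 4)]


def pick_shading_rate(tile: TileInfo) -> Tuple[int, int]:
    """Each factor that hides detail pushes the tile one step coarser.
    Thresholds are made-up values for illustration only."""
    coarseness = 0
    if tile.focus_distance > 0.6:  # far from where the user is looking
        coarseness += 1
    if tile.contrast < 0.1:        # homogeneous surface such as sky
        coarseness += 1
    if tile.motion > 16.0:         # fast enough that motion blur hides detail
        coarseness += 1
    # Clamp to the coarsest rate available in the table.
    return RATES[min(coarseness, len(RATES) - 1)]


sharp_center = pick_shading_rate(TileInfo(0.0, 0.8, 0.0))     # (1, 1)
detailed_edge = pick_shading_rate(TileInfo(0.9, 0.8, 0.0))    # (2, 2)
sky_in_periphery = pick_shading_rate(TileInfo(0.9, 0.05, 0.0))  # (4, 4)
```

The point is only that the rate can be decided per region from several cheap signals, instead of one global resolution switch.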

Nvidia has nice comparison pictures for Wolfenstein that show how finely and selectively it's applied:

A full comparison guide from Nvidia here:

https://www.nvidia.com/en-us/geforc...olfenstein-youngblood-nvidia-adaptive-shading