We've recently seen games like Halo Infinite and Battlefield 2042, to name a few, crash and burn after going through a very tough development cycle.

What does it actually take as a team to convince those in power to delay a major release so a game can avoid these types of issues?

It just seems this continues to happen over and over again which ultimately hurts the staff that worked so hard on making these games a reality.

I will be quite frank: the core of the problem here is poor management, poor planning, and the lack of a proper direction at the beginning of a project. You won't believe how many creative leads I have seen or heard about who are 'good talkers' but are horrible at actually leading project development in terms of direction. This leads to things like weird priorities, focusing on less important changes instead of iterating on the things that matter, and so on. And this is because a lot of people get to lead positions precisely because they are good talkers rather than because they showed leadership skills. I'm not saying that I worked on a project with such leadership (in fact, I would say that this didn't happen that much in my personal experience), but this happens very often.

Ultimately that's the root of all tough development cycles. Delays are just an attempt to fix that, but 80% of the time they wouldn't have been necessary with proper clarity in direction.

The thing about delays though is that they increase a game's budget, which means they increase the number of copies that have to be sold quickly enough for the game to be considered a profitable endeavor. Sometimes from that perspective it is better to just get the game out of the door first.
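To put rough numbers on that, here is a minimal sketch of the break-even arithmetic. Every figure in it (the base budget, the monthly team cost, the net revenue per copy) is a made-up assumption for illustration, not data from any real project, and `break_even_copies` is a hypothetical helper.

```python
import math


def break_even_copies(budget: float, net_revenue_per_copy: float) -> int:
    """Copies that must be sold for net revenue to cover the budget."""
    if net_revenue_per_copy <= 0:
        raise ValueError("net_revenue_per_copy must be positive")
    return math.ceil(budget / net_revenue_per_copy)


# Hypothetical figures, chosen only to show the shape of the calculation.
base_budget = 100_000_000   # budget without any delay
monthly_burn = 5_000_000    # assumed cost of keeping the team on for a month
delay_months = 6
net_per_copy = 40.0         # assumed money kept per copy after platform cuts

before = break_even_copies(base_budget, net_per_copy)
after = break_even_copies(base_budget + monthly_burn * delay_months, net_per_copy)

print(f"break-even without delay: {before:,} copies")
print(f"break-even with delay:    {after:,} copies")
print(f"extra copies needed:      {after - before:,}")
```

Under these assumptions a six-month delay adds 750,000 copies to the break-even point, which is the kind of number that makes shipping first look tempting.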

For touch game devs: the best active action experiences on iPads have by far been Bastion, Geometry Wars 3 and the most intuitive controlling one was Wayward Souls. Broken down it's left pane for movement and right pane for taps and slides, using one or two fingers.

Barring the question of accessibility and dexterous movement with two hands:

Why didn't we get the Halo console FPS paradigm shift in touch games with, I guess, something like Wayward Souls? Move, attack, fire projectiles, open doors and perform other environmental actions and interactions without having to move one's eyes to look at the screen controls for the required action button, or hoping that blind tapping hits the correct action button.

Why are we still met with a ridiculous number of action icons which will never feel intuitive, and which may actually demand a significantly or entirely different kind of input skill, at least until some incredible microhaptic feedback is invented for consumer products?

Wayward Souls is a game where you move on one half and do actions on the other half. FPSes on touch screens are usually games where you move on one half and aim/rotate the camera with the other half - the most important actions. So in this case it would be very easy for an action like moving the right finger up to look up to be confused with, let's say, a grenade throw action.

I think it's possible to have an FPS game without action buttons, but it has to be EXTREMELY specifically designed for that and require a lot of testing and iterations to make it right, because the core problem still remains - you already have two constantly used functions on two halves of the screen, how do you separate those from other actions? That's the challenge.
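To make the separation problem concrete, here is a minimal sketch of one possible approach: the left half always means movement, and on the right half a short, fast swipe is treated as an action while anything slower is treated as camera look. The `Touch` type, the `classify` function and both thresholds are hypothetical, and the threshold values are guesses that would need exactly the kind of testing and iteration described above.

```python
from dataclasses import dataclass


@dataclass
class Touch:
    start_x: float      # pixels
    start_y: float
    end_x: float
    end_y: float
    duration_s: float   # time between finger down and finger up

# Assumed thresholds; real values would come out of playtesting.
FLICK_MAX_DURATION_S = 0.15
FLICK_MIN_DISTANCE_PX = 120.0


def classify(touch: Touch, screen_width: float) -> str:
    """Map a finished touch to 'move', 'look' or 'action'."""
    # Left half of the screen is always the movement pane.
    if touch.start_x < screen_width / 2:
        return "move"

    dx = touch.end_x - touch.start_x
    dy = touch.end_y - touch.start_y
    distance = (dx * dx + dy * dy) ** 0.5

    # Right half: a short, fast swipe counts as an action, the rest is camera look.
    if touch.duration_s <= FLICK_MAX_DURATION_S and distance >= FLICK_MIN_DISTANCE_PX:
        return "action"
    return "look"


print(classify(Touch(100, 300, 160, 300, 0.40), screen_width=1000))  # left pane
print(classify(Touch(800, 300, 800, 100, 0.10), screen_width=1000))  # fast swipe up
print(classify(Touch(800, 300, 800, 100, 0.50), screen_width=1000))  # slow drag up
```

The weak spot is visible right in the sketch: a player who quickly snaps the camera upward produces the same input as the fast swipe, so the look gets misread as an action. Thresholds alone don't solve that, which is why the whole game would have to be designed around it.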

Have there been times where you've seen a story altered because of what would be required to program or create it? As in simplified or removed. As an aspiring writer, I often wonder how much creative control a writer can have within the gaming industry, among others.

Oh for sure. This relates to both story and gameplay, when story has to be altered because of gameplay and vice versa. There are so many times where something sounds like it is reasonable to do, and then for one reason or another it's not.

Wow, I greatly appreciate this thread.

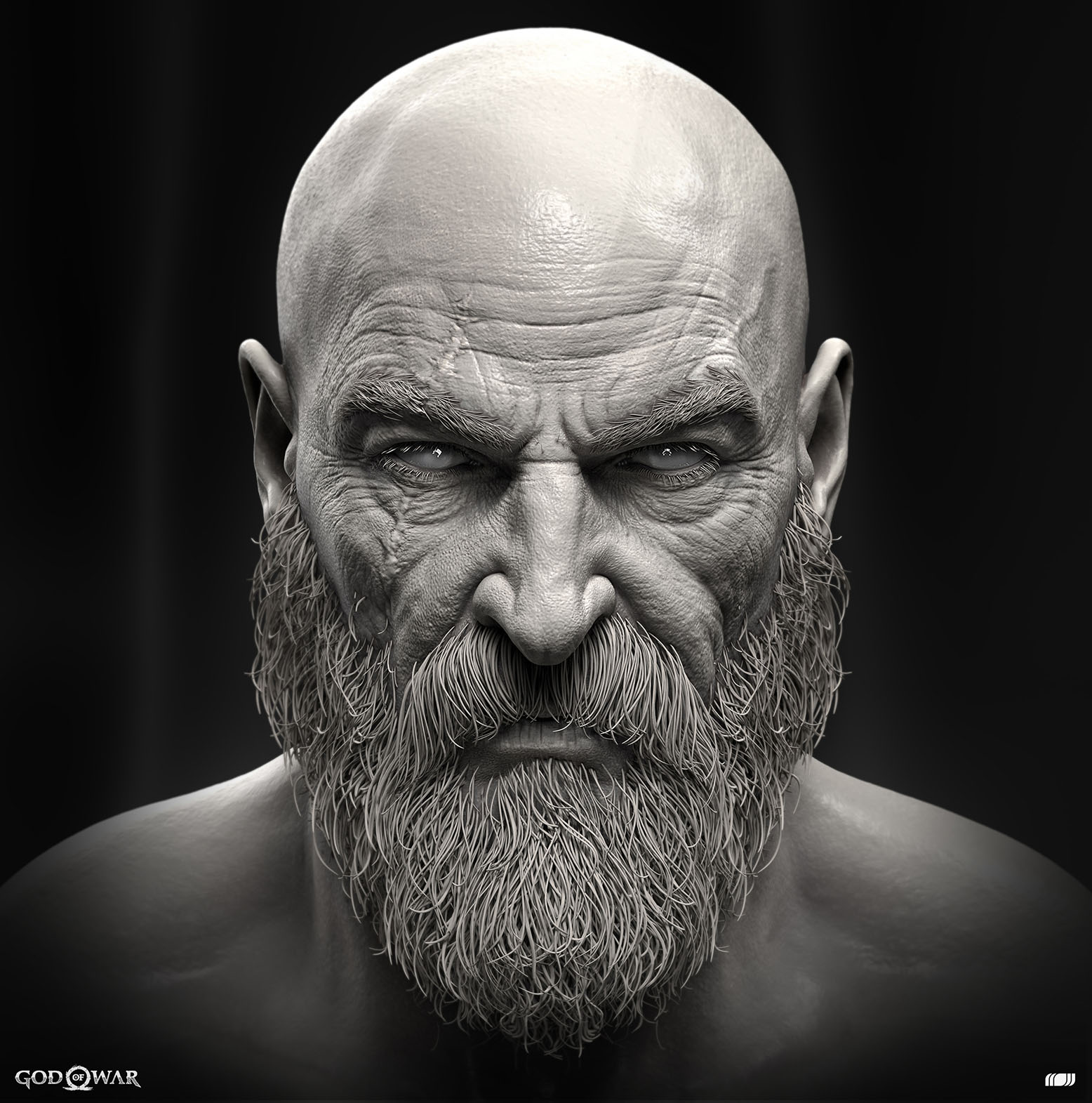

One thing I really wanted to know. I've seen a lot of people say next-gen exclusives will be more costly and time-consuming to make due to the increase in required asset quality. However, I've also seen and heard a lot about how assets for last-gen games were already created at very high levels of detail and then brought down with decimation and retopology - not just for PC ports and such but things like these ZBrush models for Nathan Drake and Kratos:

So if that's the case, I would've assumed the work going into these assets wouldn't actually increase, and the only change would be not needing to cut their detail as much. Can you comment on this in any way?

Besides what others have said, there's a lot more to graphical fidelity and manpower for it than the quality of the model itself - animations, amount of environmental assets, physics, etc.

Couple of questions about one subject:

Is there always a Game Design Document (GDD) when developing a game?

How detailed and big are these documents?

Does everyone in the team have access to it?

How much does a team rely on such a document?

I'm sure some parts of (even the core) design will change during development. How is this communicated normally to the team? Will the Game Designer alter the GDD and then point to these changes or does he present it in a big meeting?

It's not so much a single GDD for the whole game as a bunch of smaller GDDs for smaller games. Regarding details, usually it's as detailed as needed. Here's the thing: designing EVERYTHING in advance consumes a lot of time and is usually not useful, because when you have to iterate you will essentially throw out a bunch of design work. So GDDs usually have flows, mock-ups, required variables etc., but the very small details are not part of them - those are usually tracked through task lists or JIRA, not through GDDs.

Good documentation is imperative for everyone to be on the same page, and of course if there are big changes then the team must be made aware of that through a call or presentation.

One thing that has been bugging me for a while is: why is there still in this day and age a need to have a 'Press start' screen in so many games? I mean, yes, it can look nice, and I think I read sometime ago that it is necessary to see which controller will be the primary or active controller (?), but if the latter is a reason, then why doesn't the OS send the 'this controller started the game' property along in the startup args or something?

If it's just because it looks nice: please, for the love of god, do the actual game loading before or while showing the 'Press start' screen, because why would you not?

I mean, on the latest consoles, load times are no issue anymore I guess, but I remember on PS3 I would sometimes start a game, walk away to the bathroom or something, and then return, expecting a game to be loaded, but nooo, I see the press start screen and then the loading starts, WHYY? And I still notice it in games nowadays: first the Press Start screen and then some logos popping up or something, so annoying!

If you have to or want to use a Press Start screen, make sure a single press shows the menu instantly after.

Console manufacturer requirements, really. For Microsoft specifically it's needed to know which user is using the console (since different controllers can be assigned to different users), and it is also a safety measure to avoid TV burn-in. And while on a PlayStation, for example, you select the user before starting the game, any console-specific feature is a pain to keep track of, even a small one, so it's sort of done on all platforms even if some don't need it.
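As a rough illustration of the "which user is playing" part, here is a minimal sketch: the first controller that presses Start and is signed in to a user becomes the primary one. Nothing here is a real console SDK call. The event tuples, the `controller_users` mapping and `find_primary_controller` are all hypothetical stand-ins.

```python
from typing import Dict, Iterable, Optional, Tuple


def find_primary_controller(
    events: Iterable[Tuple[int, str]],
    controller_users: Dict[int, str],
) -> Optional[Tuple[int, str]]:
    """Return (controller_id, user) for the first signed-in controller to press Start."""
    for controller_id, button in events:
        if button == "start" and controller_id in controller_users:
            return controller_id, controller_users[controller_id]
    return None  # nobody has pressed Start yet, keep showing the screen


# Hypothetical input: controller 1 has no user signed in, controller 2 belongs to bob.
controller_users = {0: "alice", 2: "bob"}
events = [(1, "a"), (1, "start"), (2, "start"), (0, "start")]

print(find_primary_controller(events, controller_users))
```

Here controller 2 wins, so the game would load bob's profile and saves even though alice's controller is also connected.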

Hi!

How accurate is video game color work? And how often do you feel that it is misrepresented on certain screens, videos, screenshots etc.? Is that an issue at all in game development? Is there a consideration of how people will look at your game? Do you find yourself looking at stuff you worked on and finding that something looks off?

I also have kind of the same questions about the sound department, especially the mix.

Being a film colorist myself sometimes I feel like dying when I watch certain theatrical releases of work I did on certain theatres/projectors/broadcast or even home releases.

Another thing I would like to kindly ask you is why games come with such different sound levels between them. Like if I want to have a session on game A I know that I will need to crank up the overall volume of my speakers quite a bit… but then if I jump to game B then I will need to bring it down considerably… shouldn't there be a default sound mix value to avoid this issue?

Sorry for so many questions!

Thank you for your time!

Color work is tricky, but at least internally all artists need to have their screens calibrated the same way to ensure consistency. I'm not an artist myself though so they would be able to give more info on that.

Regarding the sound level differences between games - there are no required standards related to that, so every developer follows their own principles.

Great thread! Who are the unsung heroes of game development? Also, what is something in a specific game that you were really impressed with and gave you a 'why didn't I think of that?!' moment - even if it's a very small thing that only people in your speciality would appreciate? Thanks

Unsung heroes are testers and community managers. Testers and quality analysts usually are the lowest paid jobs and whenever people complain about issues in a game they usually unfairly blame QA ("how did QA not see this?" is a question I HATE). And community managers have to deal with shittons of toxic shit and honestly protect a lot of developers from having to deal with that.

does anyone do automated tests? i imagine they must but do they really?

Yep, automated tests are definitely done.
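For a sense of what the simplest kind looks like, here is a minimal sketch of unit tests around a small gameplay rule. The `apply_damage` function and its armor rule are hypothetical examples, not taken from any real game. Real projects also run much larger automated checks, but the principle is the same: code checks the game's behavior so a person doesn't have to redo it by hand every build.

```python
def apply_damage(health: int, damage: int, armor: int = 0) -> int:
    """Return remaining health; armor absorbs flat damage, health never drops below zero."""
    effective = max(damage - armor, 0)
    return max(health - effective, 0)


def test_damage_reduces_health():
    assert apply_damage(100, 30) == 70


def test_armor_absorbs_damage():
    assert apply_damage(100, 30, armor=10) == 80


def test_health_never_negative():
    assert apply_damage(10, 50) == 0


def test_armor_cannot_heal():
    # Armor higher than the damage must not increase health.
    assert apply_damage(100, 5, armor=20) == 100


# Run every check directly; a test runner such as pytest would also pick these up by name.
for check in (
    test_damage_reduces_health,
    test_armor_absorbs_damage,
    test_health_never_negative,
    test_armor_cannot_heal,
):
    check()
print("all checks passed")
```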